Language as a Window Into the Mind: How NLP and LLMs Advance Human Sciences

Can NLP predict heroin-addiction outcomes, uncover suicide risk, or simulate (and even influence) brain activity? Could LLMs one day contribute to research worthy of a Nobel Prize for advancing our understanding of human behavior? And what role do NLP scientists play in shaping that possibility? This post explores these questions, arguing that language technologies are not just tools that support scientific work (like literature search agents, writing tools, or coding assistants), but that by treating language as a window into the human mind, NLP and LLMs can actively help researchers uncover mechanisms of human behavior, cognition, and brain function.

Introduction

Deep learning and the breakthroughs it enables now reach far beyond computer science. In 2024, discoveries powered by deep learning earned recognition at the highest scientific levels, including Nobel Prizes in physics and chemistry awarded to John J. Hopfield, Geoffrey E. Hinton, Demis Hassabis, John Jumper, and David Baker. So far, however, the celebrated breakthroughs belong largely to the “exact sciences” rather than to human-centered fields such as psychology, psychiatry, neuroscience, behavioral economics, and cognitive science. These fields fundamentally rely on language as a reflection of lived experience, emotion, thought, and behavior. The gap is surprising, because deep learning has already proven highly effective at modeling language, powering many applications that have quickly become part of daily life. Yet this capability has not (yet) translated into comparable scientific breakthroughs in the human sciences.

While Natural Language Processing (NLP) methods, particularly Large Language Models (LLMs), are widely recognized for advancing science through workflow support (e.g., literature-search agents, writing tools, and coding assistants that facilitate large-scale analysis), or through automatic scientific discovery (e.g., Kosmos AI Scientist), we believe that this perspective overlooks their foundational potential in science. In this post, we argue that language itself is a window into the human mind. Accordingly, modeling language with NLP and LLMs can reveal mechanisms that shape human cognition, brain function, and social behavior, thereby advancing the human sciences and opening the door to breakthroughs. Indeed, over the last decade, researchers across many disciplines have begun to demonstrate how NLP and LLMs can meaningfully advance the human sciences, for example, Psychology

This post aims to achieve three goals. First, we examine the deep connection between language and the human sciences, arguing that language offers a unique entry point into the mechanisms of the mind, brain, and society. Second, we provide concrete examples of how NLP and LLMs can advance science: generating hypotheses, testing theories, simulating human processes, and extracting insights from corpora. Third, we define the responsibilities that NLP and LLM researchers must embrace to make their work scientifically credible and relevant, including framing problems correctly, rigorously evaluating results, and collaborating closely with experts in relevant fields.

Language as a Window Into the Mind

Language holds an unusual combination of systematic structure and deep human nuance

The Individual Level

Through language, we communicate our ideas, beliefs, and subjective reality. Our word choices and linguistic nuances reveal cognitive and emotional states, making language a powerful tool for understanding human consciousness and behavior. Accordingly, language-based research is central to fields such as psychology, cognitive science, and neuroscience, which are disciplines dedicated to exploring the complexities of an individual’s mind.

In psychology and psychotherapy, for example, therapeutic conversations rely on verbal expression, where word choices and narrative structures can uncover underlying thoughts, patterns, and behaviors. Persistent negative language may indicate depression

One example of assessing treatment progression is presented in a pioneering study by

The Collective Level

Language is also a medium of communication within groups and societies. This perspective allows us to analyze how language reflects and shapes social interactions, choices, and cultural norms, providing insights into collective behavior across fields like social science, political science, economics, and the humanities.

In social science, language patterns provide insights into social behaviors, group dynamics, and societal trends

Computational social science has emerged as one of the most established subfields of NLP. In one Nature study

Applying NLP in Scientific Quests

In the previous section, we proposed a new way to frame language: as a window into the human mind, at both the individual and collective levels. Now, we turn our attention to practicality. For NLP practitioners seeking to pursue scientific inquiry, we present several practical approaches, along with examples, that showcase how NLP can:

- Generate scientific hypotheses.

- Validate existing theories.

- Empower simulations of human interaction, behavior, or the brain.

- Extract applicable insights from scientific corpora.

Hypothesis Generation (Bottom-Up)

As previously mentioned, NLP methods are often applied to large text datasets, where they excel at learning prediction models to forecast specific phenomena. While this capability is undoubtedly important, predictions alone do not always help us scientifically model and understand the reality around us. The alternative, following classic scientific principles, would be to start with a hypothesis. But what if we’re uncertain about where to begin? In this case, we propose using NLP models to generate novel hypotheses, a ‘proposed explanation for a phenomenon.’

Scientists typically generate hypotheses in a top-down manner, drawing from established theories or prior knowledge to guide their inquiries. This approach is powerful, but it may miss unexpected or emerging patterns hidden within large datasets. NLP models offer a complementary bottom-up path: strong predictive models can act as engines of insight, surfacing structure, explanations, and hypotheses that human researchers might not anticipate. By using explainability methods that clarify why models make certain predictions or what they encode in their internal representations, we can turn predictive power into scientific insight.
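To make the bottom-up path concrete, here is a minimal sketch that is not taken from any study discussed in this post. It assumes a tiny synthetic corpus with a hypothetical binary label, fits a bag-of-words logistic regression in plain Python, and reads the largest positive weights as candidate hypotheses to be examined later with proper experiments. The function names (`fit_logistic_bow`, `candidate_hypotheses`), the texts, and the labels are all illustrative assumptions.

```python
import math
from collections import Counter


def tokenize(text):
    """Lowercase whitespace tokenizer; a real study would use a proper one."""
    return text.lower().split()


def fit_logistic_bow(texts, labels, epochs=200, lr=0.5, l2=0.01):
    """Fit an L2-regularized logistic regression on bag-of-words counts
    with full-batch gradient descent. Returns (vocab, weights, bias)."""
    vocab = sorted({tok for t in texts for tok in tokenize(t)})
    index = {tok: i for i, tok in enumerate(vocab)}
    rows = [Counter(index[tok] for tok in tokenize(t)) for t in texts]
    weights = [0.0] * len(vocab)
    bias = 0.0
    n = len(texts)
    for _ in range(epochs):
        grad_w = [0.0] * len(vocab)
        grad_b = 0.0
        for row, y in zip(rows, labels):
            z = bias + sum(weights[i] * c for i, c in row.items())
            p = 1.0 / (1.0 + math.exp(-z))
            err = p - y  # gradient of the log loss with respect to z
            for i, c in row.items():
                grad_w[i] += err * c
            grad_b += err
        for i in range(len(vocab)):
            weights[i] -= lr * (grad_w[i] / n + l2 * weights[i])
        bias -= lr * grad_b / n
    return vocab, weights, bias


def candidate_hypotheses(vocab, weights, k=3):
    """Return the k tokens whose weights push hardest toward label 1."""
    ranked = sorted(zip(vocab, weights), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


# Synthetic, made-up examples: label 1 marks a hypothetical outcome of interest.
texts = [
    "i feel alone tonight",
    "alone again and tired",
    "great day with friends",
    "friends came over today",
    "so alone and empty",
    "lunch with friends was great",
]
labels = [1, 1, 0, 0, 1, 0]

vocab, weights, bias = fit_logistic_bow(texts, labels)
top = candidate_hypotheses(vocab, weights, k=3)
print(top)
```

The ranked tokens are only starting points: a feature that predicts the label is not thereby a cause of it, so each candidate would still need to be tested against theory and independent data.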

One example of this approach is presented in

Theory Validation (Top-Down)

When a scientific hypothesis already exists, NLP models can be used to validate and strengthen it. In a separate study on suicide

To do so, the authors collaborated with domain experts to translate psychiatric theories (the Interpersonal Theory

This AI-driven insight computationally validates aspects of psychological theories, supporting the hypothesis that loneliness is a significant risk factor for suicide. Importantly, it demonstrates that multi-modality (i.e., the fact that features were derived based on both image and query) can help predict suicide risk more effectively than if one were to only use standard representations from state-of-the-art vision encoding models. Moreover, by analyzing the importance of features, researchers could contextualize their findings within established psychological theories.

As this work demonstrates, incorporating NLP with other modalities, such as images, can significantly enhance the scientific insights language offers. Taking this notion a step further, it’s entirely possible to envision models that incorporate not only text, images, and videos, but also brain scans, physiological biomarkers such as blood tests, data from smartwatches, and more. Such a cohesive, NLP-centered multi-modal approach could truly transform scientific research.

Simulating Human Behavior

NLP, and especially LLMs, offers scientists a unique chance to simulate and replicate human behavior in a way that is accessible, affordable, and non-intrusive. By generating human-like responses, LLMs can enable researchers to pilot studies, test different experimental setups, examine various stimuli, and even simulate demographic groups that are difficult to recruit in real life. This, in turn, can support rapid testing of multiple hypotheses and reduce the need for resource-intensive trials with human participants.

The assumption that LLMs can emulate human behavior has been validated by multiple studies, including

Simulating the Human Brain

But LLMs can model more than human behavior. Since language is a direct conduit into the brain’s biological mechanisms, LLMs have the remarkable ability to model aspects of the human brain itself. Researchers approach this by measuring brain activity using techniques such as functional Magnetic Resonance Imaging (fMRI) and Electroencephalography (EEG), then aligning the internal representations of models such as GPT-4

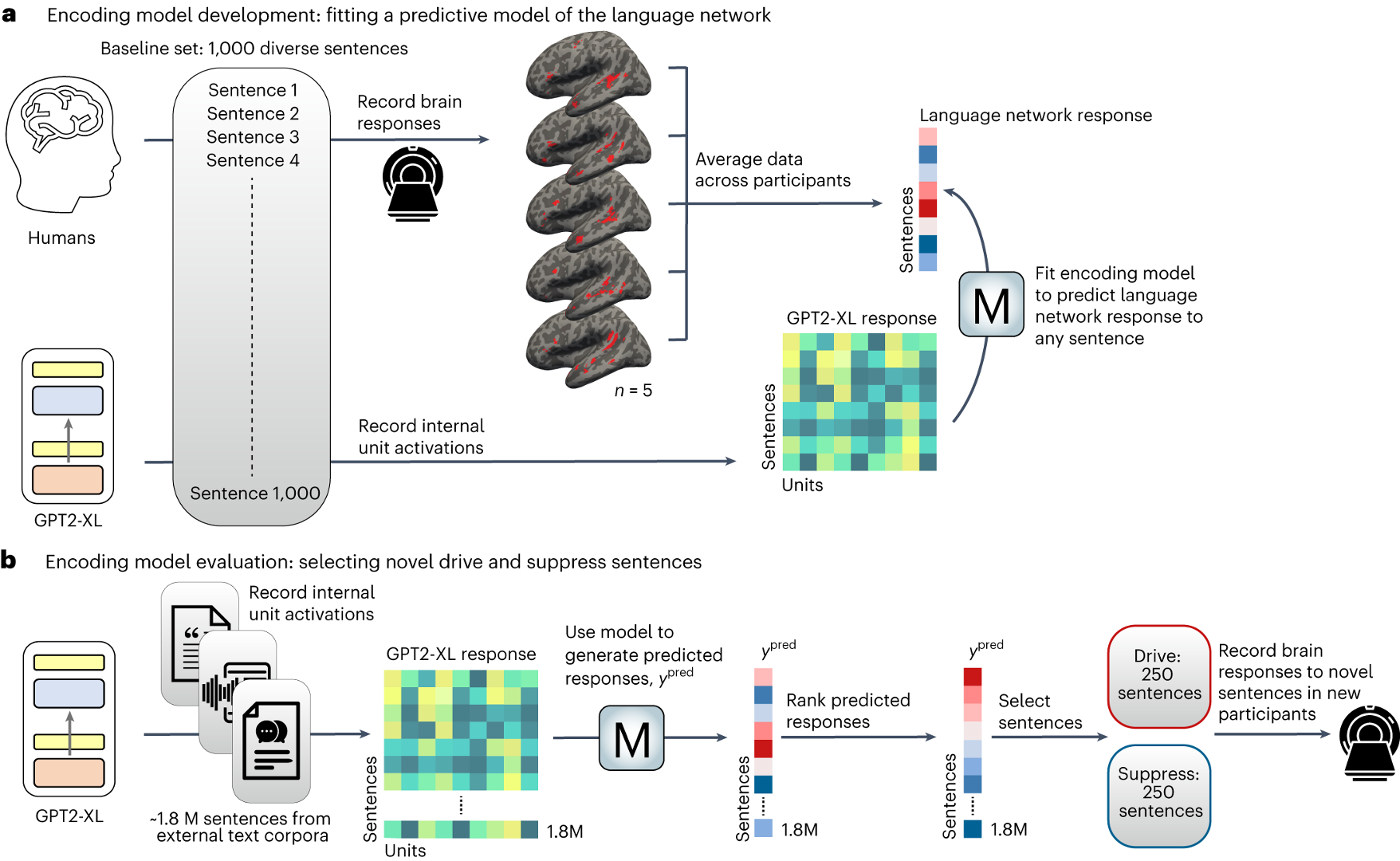

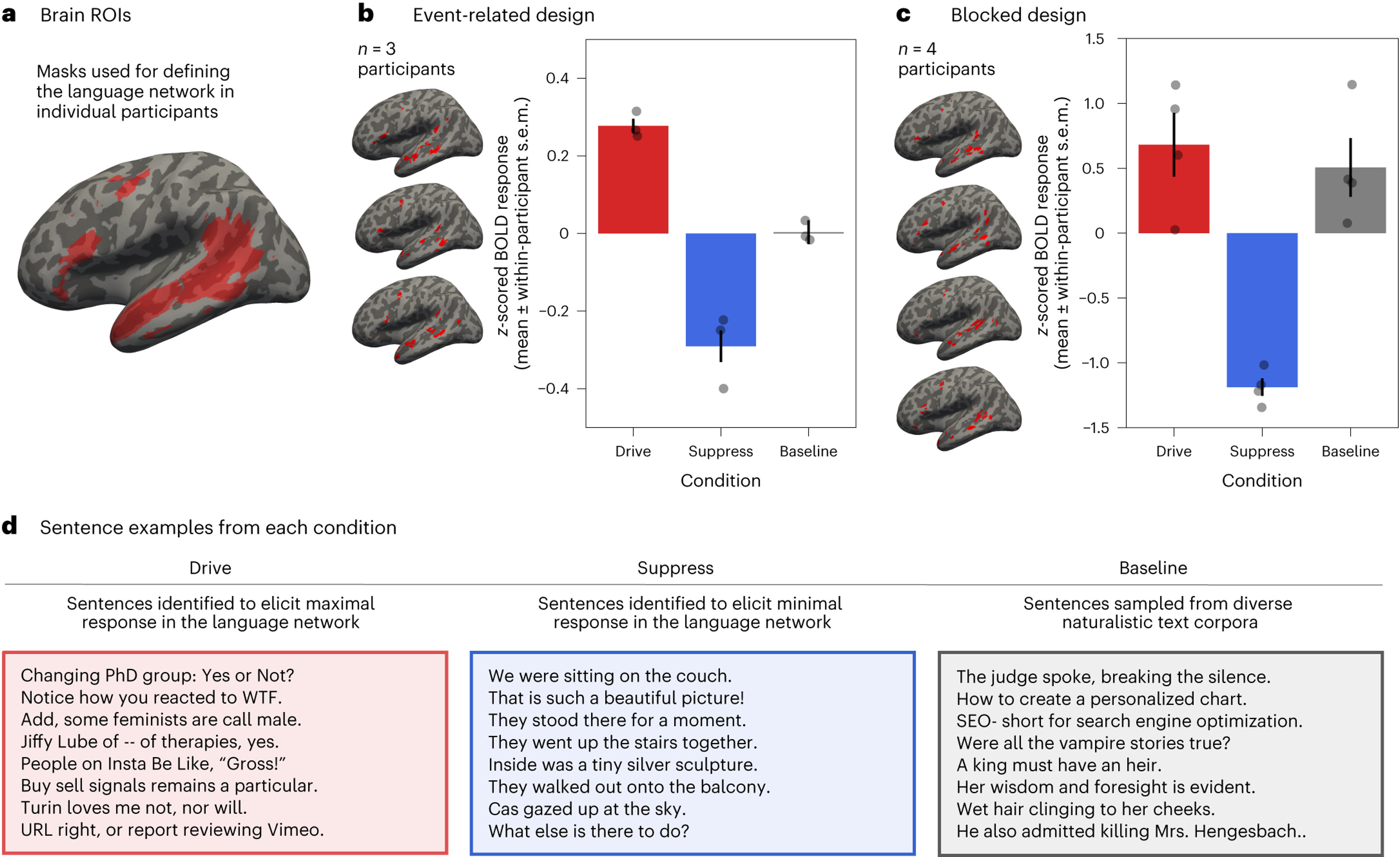

One striking example of using NLP to simulate aspects of the brain is presented in

The researchers recruited participants who underwent fMRI scans while reading 1,000 diverse sentences, then trained an encoding model by feeding GPT-2 XL sentence embeddings into a ridge regression model to predict neural activity. The encoding model achieved a correlation of r = 0.38 between predicted and measured responses, demonstrating a clear alignment with actual brain activity. In a second stage, the authors searched through roughly 1.8 million sentences to identify those predicted to maximally drive or suppress neural responses. When these sentences were presented to new participants in a held-out fMRI test, the predictions held: “high-response” sentences reliably produced stronger activation in the language network, while “low-response” sentences produced weaker activation. In short, the study shows that NLP models can predict brain activity and even influence it.
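The two stages described above (fit an encoding model, then search a candidate pool for sentences with extreme predicted responses) can be sketched in a few lines. This is an illustrative toy, not the authors' pipeline: random vectors stand in for GPT-2 XL sentence embeddings, a hidden linear map plus noise stands in for measured fMRI responses, and the names `fit_ridge`, `simulate_response`, and all sizes and the `alpha` value are assumptions chosen for the example.

```python
import numpy as np


def fit_ridge(X, y, alpha=1.0):
    """Closed-form ridge regression without an intercept:
    w = (X^T X + alpha * I)^{-1} X^T y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ y)


def pearson_r(a, b):
    """Pearson correlation between two 1-D arrays."""
    return float(np.corrcoef(a, b)[0, 1])


rng = np.random.default_rng(0)
n_train, n_test, n_candidates, dim = 200, 50, 1000, 16

# Synthetic stand-ins: embeddings are random vectors, and the "neural
# response" is a hidden linear function of the embedding plus noise.
true_w = rng.normal(size=dim)


def simulate_response(embeddings):
    noise = rng.normal(scale=1.0, size=embeddings.shape[0])
    return embeddings @ true_w + noise


train_emb = rng.normal(size=(n_train, dim))
test_emb = rng.normal(size=(n_test, dim))
train_resp = simulate_response(train_emb)
test_resp = simulate_response(test_emb)

# Stage 1: fit the encoding model and score it on held-out sentences.
w = fit_ridge(train_emb, train_resp, alpha=10.0)
r = pearson_r(test_emb @ w, test_resp)
print(f"held-out correlation: {r:.2f}")

# Stage 2: rank a large candidate pool by predicted response and keep
# the extremes as "suppress" and "drive" candidates.
candidates = rng.normal(size=(n_candidates, dim))
pred = candidates @ w
order = np.argsort(pred)
suppress_idx, drive_idx = order[:10], order[-10:]
```

In a real study the regularization strength would be chosen by cross-validation, and the selected sentences would be validated on new participants, as the study did; the synthetic correlation printed here says nothing about real brain data.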

This line of research holds promise for the future, enabling simulations with NLP models instead of humans and overcoming traditional neuroscience limitations, such as invasive procedures, high costs, and limited access to specialized equipment.

This indicates that less predictable sentences generally elicited stronger responses in the language network, a phenomenon interestingly captured by the language modeling task. Such findings have implications not only for understanding language processing in the brain but also for showcasing the potential of LLMs to non-invasively influence neural activity in higher-level cortical areas.

Extracting Applicable Insights from Corpora

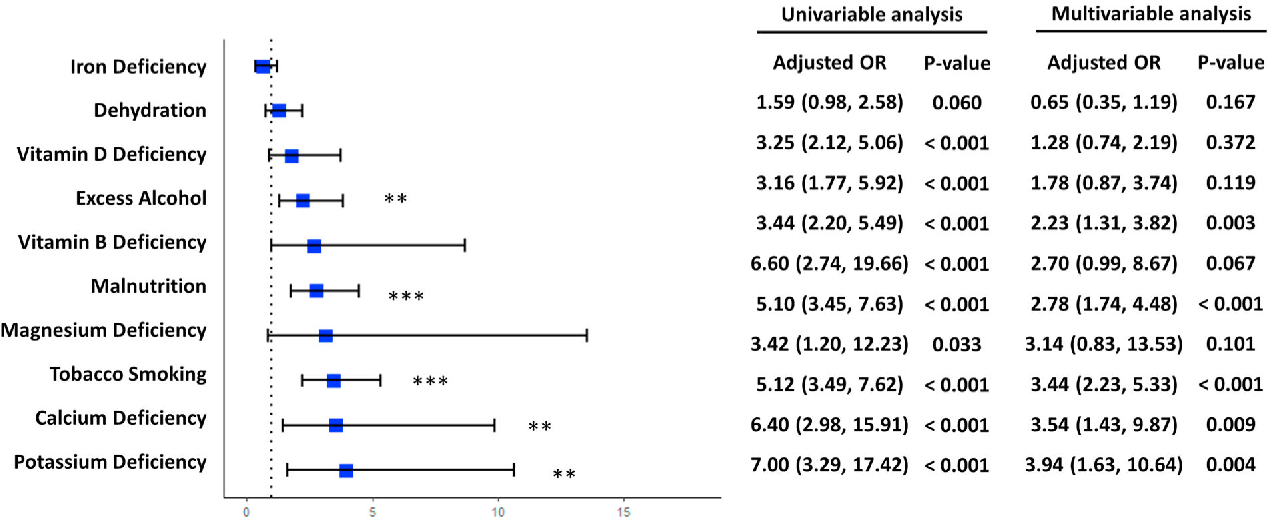

Finally, NLP can fulfill one of its original purposes: extracting insights from large corpora, in this case, scientific texts addressing human sciences. For example,

Their analysis confirmed the prevalence of well-known risk factors, such as tobacco use and malnutrition, in over 50% of Alzheimer’s cases. However, it also called into question certain popular medical hypotheses. Despite extensive research linking cardiovascular risk factors, such as high-fat and high-calorie diets, to Alzheimer’s disease,

This study highlights NLP’s potential as a powerful tool in medicine for several reasons: (1) it enables large-scale analysis of expert-curated textual knowledge, (2) it can both validate and challenge established medical hypotheses, and (3) it yields insights that can directly inform clinical decisions, such as blood work and patient care protocols. Equally important, the study communicates its conclusions with rigor and transparency: the authors conducted comprehensive statistical analyses and reported results only for risk factors that showed statistically significant associations in the Alzheimer’s group. While this may sound straightforward, the engineering-oriented world of NLP often focuses primarily on performance and does not always prioritize statistical significance; even in clinical tasks such as dementia detection from text, fewer than one-third of studies report it
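As one illustration of what reporting significance can look like for an NLP comparison, the sketch below implements a paired permutation (sign-flip) test on per-example correctness for two systems evaluated on the same test set. It is a generic procedure, not the analysis used in the study above; the function name `paired_permutation_test` and the toy inputs are assumptions.

```python
import random


def paired_permutation_test(correct_a, correct_b, n_permutations=10_000, seed=0):
    """Two-sided paired permutation test on the accuracy difference.

    correct_a / correct_b hold 1 (correct) or 0 (wrong) for systems A and B
    on the same examples. Under the null hypothesis the two systems are
    exchangeable, so the sign of each per-example difference is flipped
    at random. Returns an estimated p-value.
    """
    if len(correct_a) != len(correct_b):
        raise ValueError("both systems must be scored on the same examples")
    rng = random.Random(seed)
    diffs = [a - b for a, b in zip(correct_a, correct_b)]
    observed = abs(sum(diffs))
    hits = 0
    for _ in range(n_permutations):
        total = sum(d if rng.random() < 0.5 else -d for d in diffs)
        if abs(total) >= observed:
            hits += 1
    # Add-one smoothing keeps the estimate strictly above zero.
    return (hits + 1) / (n_permutations + 1)


# Toy inputs: system A is right on every example, system B on none.
p_different = paired_permutation_test([1] * 30, [0] * 30)
# Toy inputs: both systems make exactly the same predictions.
p_identical = paired_permutation_test([1, 0, 1, 1, 0], [1, 0, 1, 1, 0])
print(p_different, p_identical)
```

When many comparisons are run (for example, one per risk factor), the resulting p-values would additionally need a multiple-comparison correction.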

This brings us to our final topic: what is required of an NLP scientist who aims to apply linguistic AI to diverse scientific endeavors, and the responsibilities and challenges that come with this role.

Responsibility of the NLP Scientist

The role of NLP scientists in scientific collaborations goes beyond selecting a strong model and reporting its performance. Contributing meaningfully often requires a solid understanding of the problem space, the research goals, and the limitations of the data, so that modeling choices are well-informed. In practice, an NLP scientist must keep three essential elements in mind: how they model the problem (especially with an emphasis on interpretability); how they rigorously evaluate their results; and who they collaborate with to strengthen and validate their research.

Interpretability-Aware Modeling

It’s up to the NLP scientist to recognize that, in scientific settings, the goal is no longer to achieve the highest possible performance. Instead, the emphasis shifts toward gaining interpretable insights that help explain the underlying human phenomena, even if this comes at the cost of a few performance points. In practice, this involves examining the model’s parameters, behaviors, and predictions to understand which patterns it captures from the data and how those insights relate to the scientific questions at hand. In other words, interpretability (and explainability) turns predictive power into meaningful scientific knowledge. Importantly, interpretability in NLP serves a dual purpose: it can improve model performance by revealing failure points and guiding refinement, and it can extract scientific insights that deepen our understanding of the phenomena being studied.

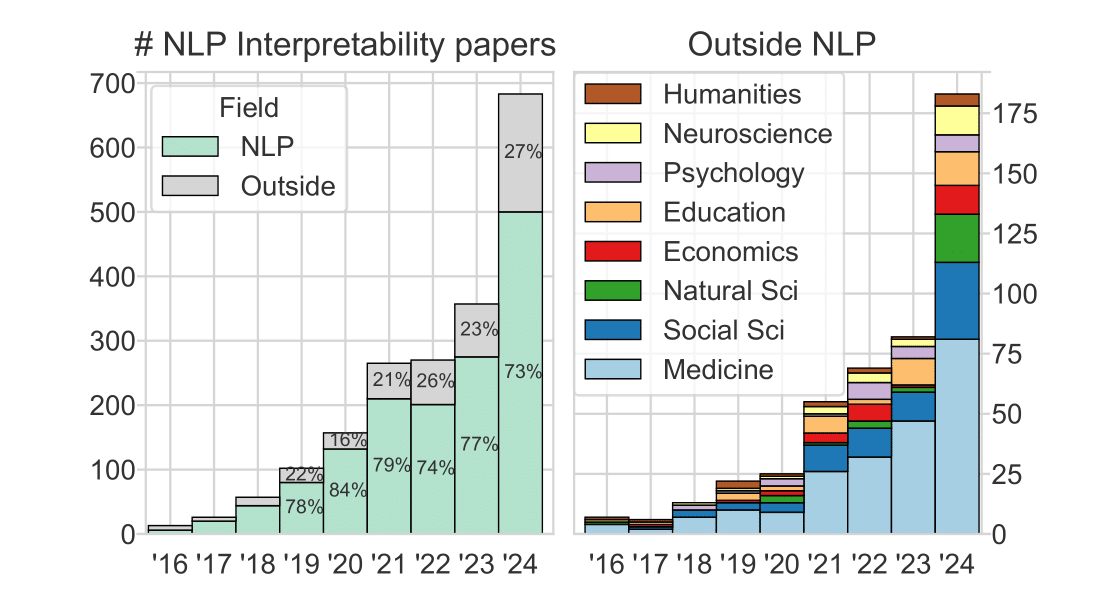

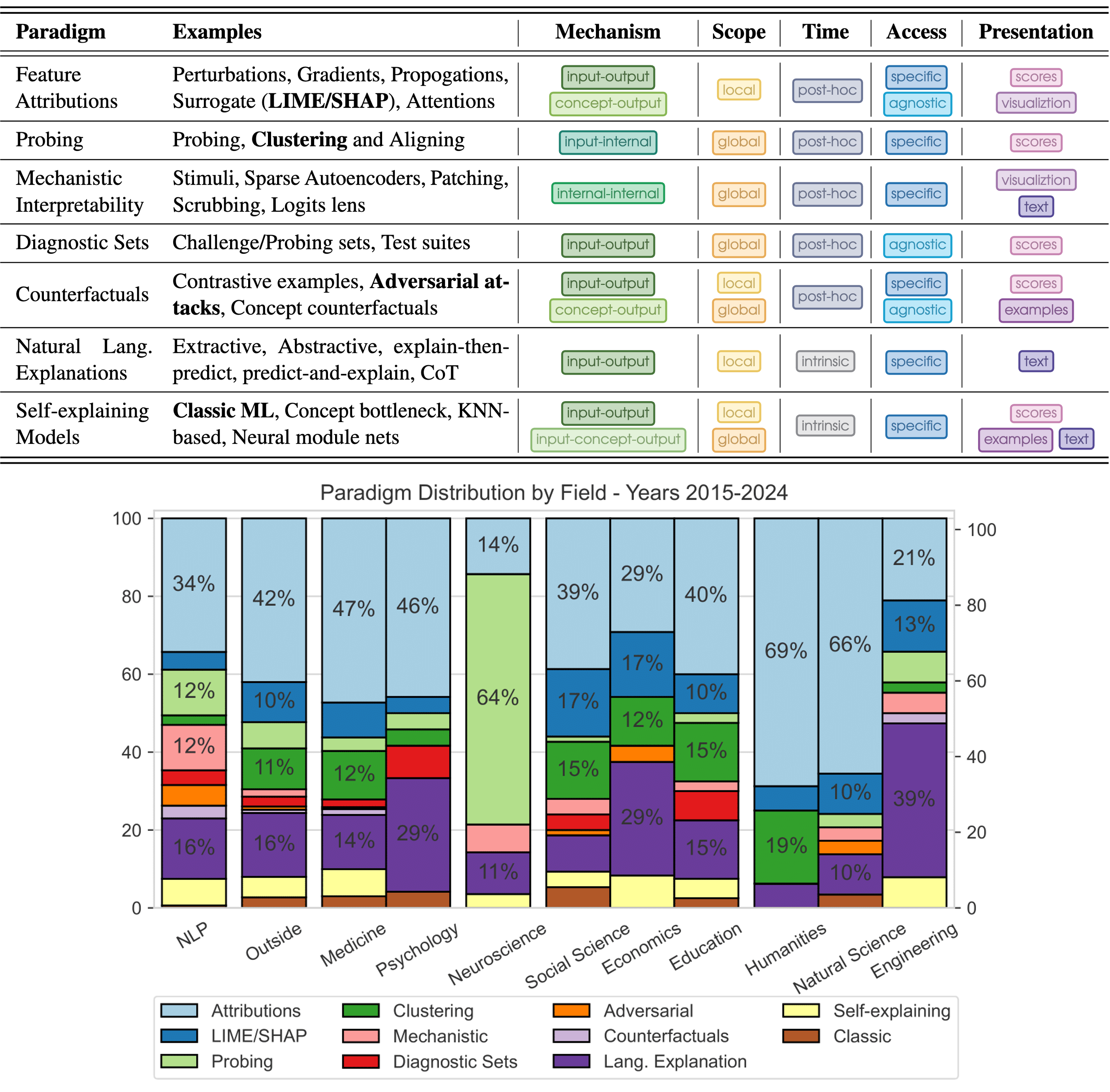

Interpretability in NLP can take many forms, and

In addition to interpretability, NLP scientists should prioritize techniques that help distinguish correlation from causation to ensure that their conclusions genuinely reflect a model’s decision-making process, referred to as “faithful explanations”

Meeting Scientific Standards

Certain standards and practices common in the scientific literature are often overlooked in AI studies. For example,

A rigorous scientific methodology is imperative if practitioners want to cement NLP as a credible scientific tool. Statistical tests, whether general or tailored to NLP

The data used for NLP algorithms should also comply with scientific standards. In NLP research on linguistic risk factors for suicidal tendencies, for instance, data sources and annotation methods can vary significantly. Many NLP researchers might use Reddit posts labeled by subreddit (e.g., posts from r/SuicideWatch vs. r/general) or crowd-sourced annotations (e.g., taking posts from those subreddits and manually annotating them as positive if the post indicates the author is at risk of suicide). However, data with stronger scientific credibility might include posts from individuals who completed a standardized suicidal ideation questionnaire or, ideally, from those who have actually attempted suicide. Such choices profoundly affect the validity and applicability of the findings, particularly when seeking publication in non-CS journals.

Even popular LLM-based approaches, such as using LLMs as annotators, can now be statistically validated to limit measurement errors, i.e., mismatches between the true gold label and the LLM annotation. Such errors can stem, for example, from inherent biases within the LLM (such as gender, racial, or social biases) and from its sensitivity to variations in prompts or setups.
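A simple way to quantify such measurement error is to compare LLM annotations with gold labels on a validation subset and to report chance-corrected agreement with an uncertainty estimate. The sketch below computes Cohen's kappa with a percentile bootstrap confidence interval. It is a generic check rather than the specific procedure of any work cited in this post, and the labels, the function names (`cohens_kappa`, `bootstrap_ci`), and the resampling settings are illustrative assumptions.

```python
import random


def cohens_kappa(gold, pred):
    """Chance-corrected agreement between two label sequences."""
    n = len(gold)
    labels = set(gold) | set(pred)
    observed = sum(g == p for g, p in zip(gold, pred)) / n
    expected = sum((gold.count(l) / n) * (pred.count(l) / n) for l in labels)
    if expected == 1.0:
        # Both sequences use a single identical label, so kappa is undefined;
        # this sketch treats that case as perfect agreement.
        return 1.0
    return (observed - expected) / (1.0 - expected)


def bootstrap_ci(gold, pred, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for Cohen's kappa."""
    rng = random.Random(seed)
    n = len(gold)
    stats = []
    for _ in range(n_resamples):
        idx = [rng.randrange(n) for _ in range(n)]
        stats.append(cohens_kappa([gold[i] for i in idx], [pred[i] for i in idx]))
    stats.sort()
    lo = stats[int((alpha / 2) * n_resamples)]
    hi = stats[int((1 - alpha / 2) * n_resamples) - 1]
    return lo, hi


# Made-up validation subset: 20 gold labels and LLM labels with two mismatches.
gold = [1] * 10 + [0] * 10
llm = list(gold)
llm[0] = 0   # one missed positive
llm[10] = 1  # one false positive

kappa = cohens_kappa(gold, llm)
lo, hi = bootstrap_ci(gold, llm)
print(f"kappa = {kappa:.2f}, 95% CI = [{lo:.2f}, {hi:.2f}]")
```

With a validation subset this small the interval is wide, which is itself informative: it indicates how many gold labels are needed before LLM annotations can be trusted for downstream analysis.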

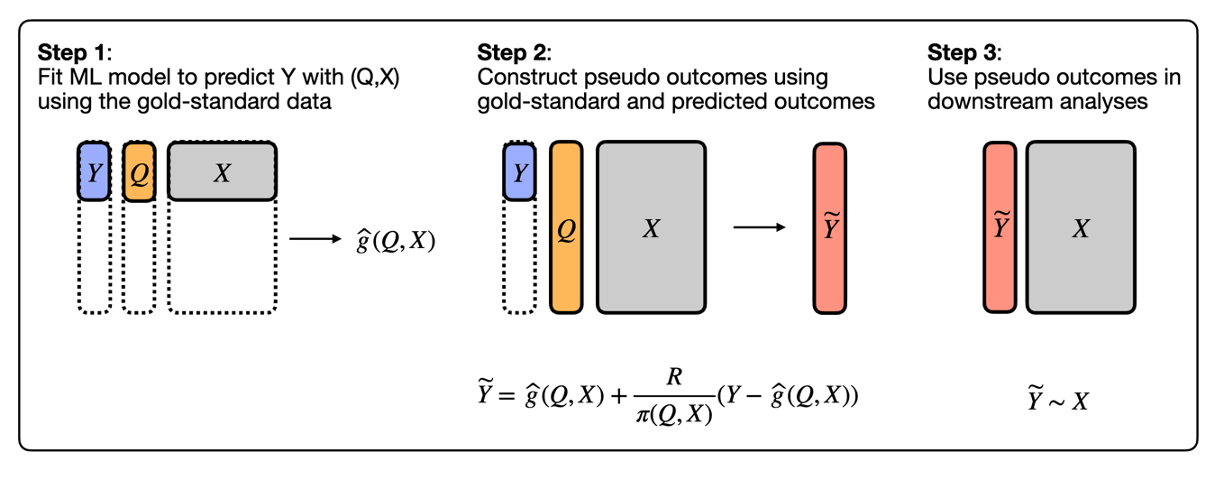

One example of statistically validating LLMs as annotators appears in

Interdisciplinary Collaboration

Finally, for NLP to empower research, at least two experts are required: one on the NLP side and one on the scientific side. Without consulting with domain experts, the NLP scientist can find themselves investing in efforts that, despite being interesting, may not drive true impact. To illustrate this, let’s consider the task of NLP Dementia Detection (as approached by dozens of researchers

"If the data used for training is collected from individuals already diagnosed with noticeable dementia, it wouldn't be particularly helpful or impactful. When someone has already been diagnosed, the signs are easily detectable, so a predictive model wouldn't add much value. The real challenge lies in identifying subtle, early signals that go unnoticed – detecting the Mild Cognitive Impairment (MCI) phase. That's where you should focus."

No one but a domain expert could have provided this invaluable advice. This insight prompts reflection on the hundreds of existing studies on dementia detection

On the other side of the equation, we should consider what scientific domain experts can gain from collaborating closely with NLP scientists. As NLP (and LLMs in particular) become increasingly prevalent as research tools, NLP scientists have a vital responsibility to guide their counterparts on the strengths and limitations of their methods. An interesting example is provided by

NLP scientists should always remember the unique expertise they bring to the table: they’re the specialists who understand the strengths of NLP and who design technical solutions, run experiments, and interpret results. However, in “NLP for science”, close collaboration with domain experts is essential for success. These scientific counterparts contribute invaluable insights, whether in shaping the initial problem definition, guiding data collection and annotation, or gauging the broader impact of results. By working together from the outset, NLP scientists and domain experts can ensure that the research is not only technically robust but also genuinely meaningful and impactful.

Summary

With this blog post, we aim to inspire NLP experts to leverage their expertise and push the boundaries of human sciences. We highlighted NLP’s unique capabilities across fields such as neuroscience, psychology, behavioral economics, and beyond, illustrating how it can generate hypotheses, validate theories, run simulations, and more. The time is ripe for NLP to drive scientific insights and go beyond mere prediction, positioning language as a lens into human cognition and collective behavior. Moving forward, interdisciplinary collaboration, scientific rigor, and a commitment to meaningful research will be essential to make NLP a cornerstone of human-centered scientific discovery.
