FANS - Frequency-Adaptive Noise Shaping for Diffusion Models

Diffusion models have transformed generative modeling, powering breakthroughs like Stable Diffusion in images and Sora in video. Yet despite their success, diffusion models share a key limitation - spectral bias; they learn broad, low-frequency structure well but struggle to recover fine, high-frequency details. This happens because the standard uniform noise schedule adds the same Gaussian noise to every frequency band, even though real datasets have very different frequency characteristics. When high-frequency components are overwhelmed with noise early in the forward process, the model learns to regenerate them last, and often poorly leading to the blurred textures and softened edges we observe in many diffusion outputs. Frequency-Adaptive Noise Shaping (FANS) offers a potential way to address this limitation. Instead of treating all frequencies equally, FANS dynamically reshapes the noise distribution based on the true frequency importance of the dataset. This simple yet principled modification plugs directly into existing DDPM architectures and improves denoising where it matters most. Across synthetic datasets (with controlled spectral properties) and real-world benchmarks—including CIFAR-10, CelebA, Texture, and MultimodalUniverse—FANS consistently outperforms vanilla DDPMs, with sharper high-frequency details, lower FID, higher PSNR on reconstruction tasks, and marked gains on texture-rich domains (e.g., up to significant relative improvements in perceptual sharpness and detail fidelity). And crucially, it achieves these benefits without sacrificing performance on standard natural-image datasets.

Motivations

Diffusion models (DDPM) have achived state of the art performances on data modalities like Stable diffusion for images, Sora for videos, RFdiffusion for protiens and Mattergen for materials

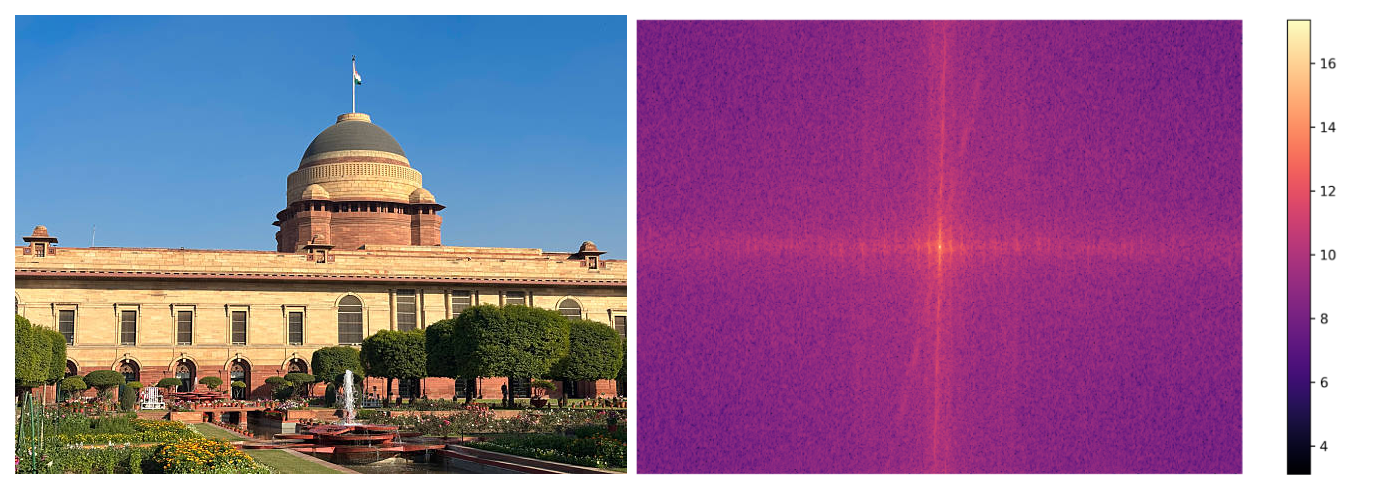

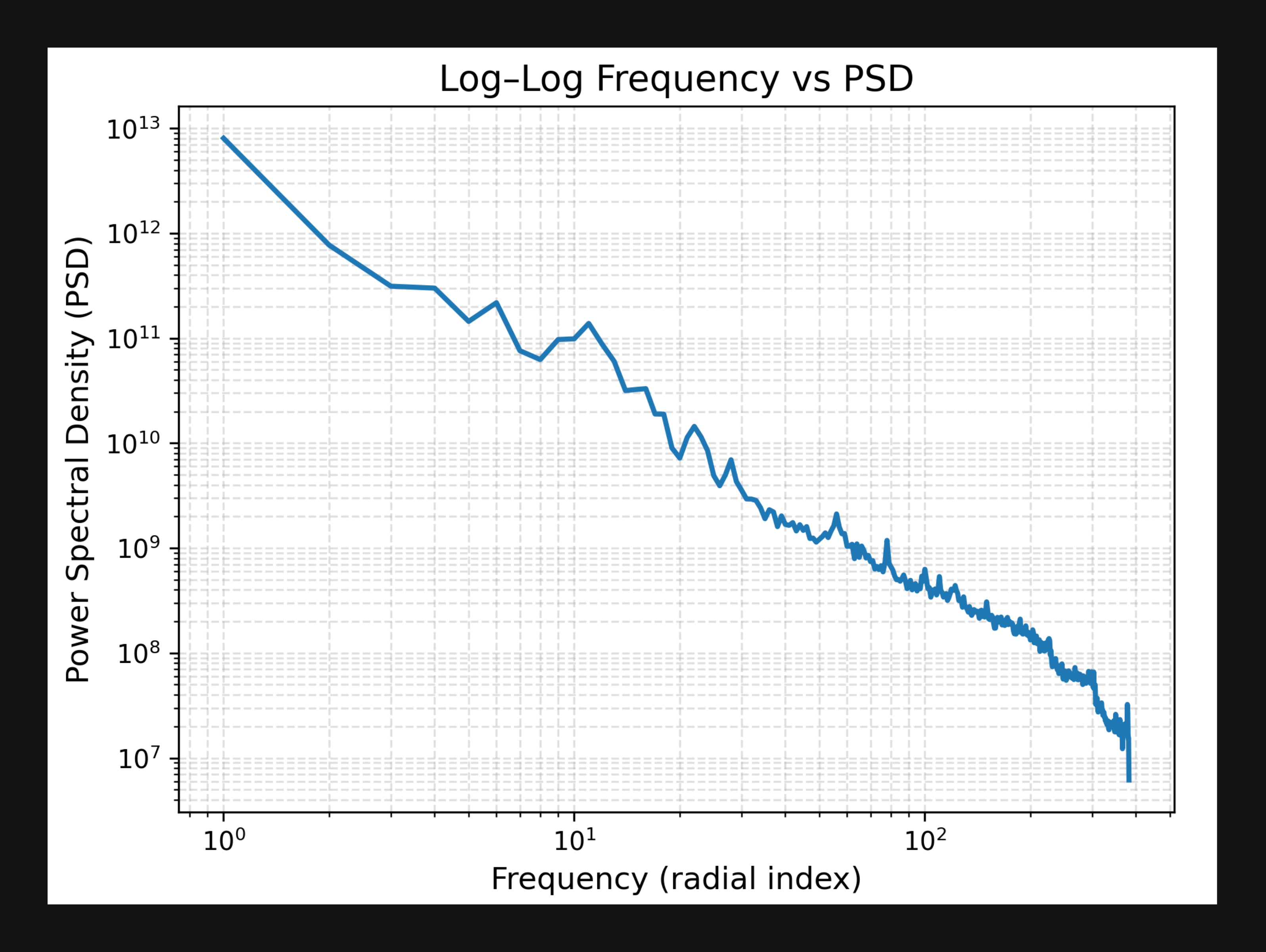

A closer look at these modalities reveals something important: their Fourier representations follow a power-law distribution. Low-frequency components carry much higher variance and describe global structure, while high-frequency components capture finer details

But datasets are not spectrally uniform. Natural images place most of their power in low frequencies, while domains such as astronomy and texture design distribute power very differently. This naturally leads to a question for us: if datasets have distinct spectral fingerprints and diffusion models already denoise from coarse to fine, why rely on the same white noise across all frequencies and all timesteps? And how well does this uniform schedule generalize across such varied domains?

In this work, we explore whether explicitly shaping the noise spectrum (i) to reflect a dataset’s actual frequency distribution and (ii) to introduce a principled time-frequency annealing schedule can improve sample quality and stability, all without altering the UNet architecture or the DDPM objective.

Quick Overview

Let’s start with a quick overview of the two frameworks we will be working with: Diffusion models and Frequency domain of Images.

Diffusion Models

Diffusion models generate data by learning to reverse a gradual noising process. The core idea is elegantly simple: start with real data and progressively corrupt it by adding Gaussian noise over many steps until it becomes pure random noise. This forward process requires no learning and follows a predefined schedule. A neural network then learns to invert this process, starting from random noise and iteratively denoising it to recover clean, structured data. This reverse process is governed by stochastic differential equations (SDEs) that describe how probability distributions evolve over time, with the model learning to approximate the score function which guides the denoising steps.

Forward Process

It defines a markov chain that progressively add gaussian noise to the data sample \(\mathbf{x}_0 \sim q(\mathbf{x}_0)\) over \(T\) timesteps. Each timestep adds a slight noise to the data resulting in a increasingly noisy samples \(\mathbf{x}_1, \mathbf{x}_2, \dots, \mathbf{x}_T\), where $\mathbf{x}_T$ approximates an isotropic Gaussian distribution. The forward process is formally defined as :

\[q(\mathbf{x}_t | \mathbf{x}_{t-1}) = \mathcal{N}(\mathbf{x}_t; \sqrt{1 - \beta_t} \, \mathbf{x}_{t-1}, \beta_t \mathbf{I}),\]where $\beta_t \in (0,1)$ controls the amount of noise added to the data.

By applying the reparameterization reccursively we obtain the closed form experssion

\[q(\mathbf{x}_t | \mathbf{x}_0) = \mathcal{N}(\mathbf{x}_t; \sqrt{\bar{\alpha}_t} \, \mathbf{x}_0, (1 - \bar{\alpha}_t)\mathbf{I}),\]where \(\alpha_t = 1 - \beta_t\) and \(\bar{\alpha}_t = \prod_{s=1}^t \alpha_s\).

Thus the noisy data at any given time \(t\) in the forward proccess

\[\mathbf{x}_t = \sqrt{\bar{\alpha}_t}\mathbf{x}_0 + \sqrt{(1 - \bar{\alpha}_t)}\epsilon\]where \(\epsilon \in \mathcal{N}(0,\mathbf{I})\).

A useful notation in this is the log signal-to-noise ratio \(\lambda_t = log(\bar{\alpha}_t / (1 - \bar{\alpha}_t))\) which increases monotonically from 0 ( clean data) to 1 (noise)

Reverse Process

The Reverse process tries to invert the forward process, transforming Gaussian noise \(\mathbf{x}_T \sim \mathcal{N}(\mathbf{0}, \mathbf{I})\) back into a data sample resembling the data distribution \(q(\mathbf{x}_0)\). Since the true reverse transitions \(q(\mathbf{x}_{t-1} | \mathbf{x}_t)\) are intractable, a parameterized model \(p_\theta(\mathbf{x}_{t-1} | \mathbf{x}_t)\) is trained to approximate them.

The reverse process is modeled as:

\[p_\theta(\mathbf{x}_{t-1} | \mathbf{x}_t) = \mathcal{N}(\mathbf{x}_{t-1}; \boldsymbol{\mu}_\theta(\mathbf{x}_t, t), \boldsymbol{\Sigma}_\theta(\mathbf{x}_t, t)),\]where \(\boldsymbol{\mu}_\theta\) and \(\boldsymbol{\Sigma}_\theta\) are outputs of a neural network conditioned on \(\mathbf{x}_t\) and the timestep \(t\). The model learns to predict the mean of the denoised sample at each step.

Using the forward process derivation, the true mean of \(q(\mathbf{x}_{t-1} | \mathbf{x}_t, \mathbf{x}_0)\) can be expressed as:

\[\boldsymbol{\mu}_q(\mathbf{x}_t, \mathbf{x}_0) = \frac{1}{\sqrt{\alpha_t}} \left( \mathbf{x}_t - \frac{\beta_t}{\sqrt{1 - \bar{\alpha}_t}} \boldsymbol{\epsilon} \right),\]where \(\boldsymbol{\epsilon}\) denotes the Gaussian noise added at timestep \(t\).

On way of learning is during training the model is optimized to predict this noise directly using a loss of the form:

\[L(\theta) = \mathbb{E}_{t, \mathbf{x}_0, \boldsymbol{\epsilon}} \left[ \left\| \boldsymbol{\epsilon} - \boldsymbol{\epsilon}_\theta( \sqrt{\bar{\alpha}_t}\mathbf{x}_0 + \sqrt{1 - \bar{\alpha}_t}\boldsymbol{\epsilon}, t ) \right\|^2 \right],\]which corresponds to a reweighted variational bound on the data likelihood.

Fourier Domain

Natural Images have rich structures in the frequency domain that is obscured in the pixel space. Understanding this frequency domain perspective is crucial for our investigation.

Let’s say we have an image \(x \in \mathbf{R}_{H \times W\times C}\). Its pixels are coeffcient of a standard basis \(\mathcal{B} = \{ \mathbf{e}_1, \mathbf{e}_2, \dots, \mathbf{e}_n \} \subset \mathbb{R}^n\). In the spatial domain, the image is defined as a linear combination of standard basis vectors, where each pixel represents a local real-valued intensity. When we apply the Discrete Fourier Transform(DFT) to this image, it performs unitary change of basis giving us the frequency domain equivalent of the image. The dimension of the fourier and the pixel image remains the same but in fourier domain the coefficients of the basis becomes complex valued.

For each channel of an image the DFT can be formulated as

\[F(u, v) = \sum_{x=0}^{H-1} \sum_{y=0}^{W-1} f(x, y) e^{-j 2\pi \left( \frac{ux}{H} + \frac{vy}{W} \right)}\]where \((u,v)\) aer spatial frequency indices. The radial frequency \(f = \sqrt{u^2 + v^2}\) measure the distance from the DC ( zero frequency component)

Now, let’s sort the Fourier coeeficient \(f(u,v)\) from low to high frequency. To do so, we start from the center of our Fourier Space and walk in spirals. That is, sort them using the Manhatten distancen of the indices \((uv,)\) from the center (0,0) of the Fourier representation. From the Figure 2 It’s very evident that signal variance decreases rapidly with increasing frquency.

Power Spectral Density(PSD): This quantifies how the signal enery is distributed across frequencies. For an image with Fourier Transform \(F(u,v)\), PSD is defined as :

\[P(u,v) = |F(u,v)|^2\]representing the power at each frequency component. To get one dimensional spectral profile we compute the radially-average PSD by integrating over annular regions at constant radial frequency \(f\) (simply put we compute average power in ring-shaped zones moving outward from the center). Formally put

\[P(f) = \frac{1}{|B_f|} \sum{}_{(u,v) \in B_f} |F(u,v)|^2\]Where \(B_f\) is the radial frequency band defined as \(B_f = {(u,v): f \leq \sqrt{u^2+v^2} \le f+ \delta f}\) and \(\|B_f\|\) is the number of frequencies in that band.

The PSD reveals how much each frequency contributes to the overall signal. For a dataset of images, we compute per-image PSDs and average them to obtain a dataset-level spectral profile. This aggregate PSD characterizes the typical frequency distribution of the data and captures domain-specific properties

Real-world image datasets exhibit characteristic frequency distributions. The power spectral density of natural images typically follows a power-law decay:

\[P \propto f^{-\alpha}\]Emperical studies on natural image statistics shave shown that \(\alpha\) typically resides in the interval \([1,3]\)

To quantify dataset frequency importance, we divide the spectrum into \(B\) radial frequency bands and compute the normalized band power \(\pi_b\) for each band \(B\):

\[\pi_b = \frac{1}{N}\sum{N}_{i=1} \frac{\sum{}_{f \in B_i}P_i(f)}{\sum{}_{f} P_i(f)}\]where \(N\) is the number of images in the dataset and \(P_i(f)\) is the PSD of image \(i\). These band powers \({\pi_b}^B_{b=1}\) form a probability distribution over frequency bands, representing how the dataset’s signal energy is distributed across the spectrum.

Diffusion in Frequency Domain

As we are trying to investigate how the DDPMs inductive bias in the forward process

Here, \(y_t\) is our fourier-transformed intermediate step at time step \(t\) of the forward process.

Taking the quantification of Signal-to-noise Ration from

Where \(\varsigma_i = Var((x_0)_i)\) represents the signal variance of requency \(i\).

Tying these all together, we see in Figure 3, that standard DDPM corrupts high-frequencies faster than low frequencies. This bias is carry forwarded to the reverse process as well.

Thus we want to study an alternate noising schedule that respects the datasets spectral signature. To put simply a Frequency Adaptive Noise Scheduler ( FANS ).

FANS

In the previous sections we saw the inductive bias of DDPM towards high frequency components both in forward and reverse process. We want to investigate if instead of isotropic gaussian noise scheduler (irrespective of the frequency distribution of the dataset), we use a scheduler that is adaptive to the intrinsic frequency characteristic of the dataset by constructing spectrally-shaped noise. Our key insight is that different frequency bands contribute unequally to perceptual quality and should be treated accordingly during both training and generation.

This proposed approach, operates through three complementary mechanism :

- Dataset importance profiling: We analyze the spectraal distribution of the data to compute frequency band importance weight \(g_b\) that quantifies the relative contribution of each band of the overall data.

- Time-Frequency Scheduling: We introduce a temporal ramping function \(\phi(t)\) that smoothly transitions noise allocation from low to high frequencies as the diffusion process evolves, enabling coarse-to-fine generation.

- Variance-Compensated Weighting: We apply inverse-power weighting to band importances, ensuring that underrepresented high-frequency bands receive compensatory emphasis during training.

We can now formalize these mechanism:

Radial Frequency Band Decomposition

Recalling from previous discussion, let’s \(x_0 \in \mathbb{R}^{H \times W \times C}\) denote a clean image, and let \(\mathcal{F}\) denote the real-valued FFT used in the implementation. To characterise the dataset’s spectral structure, we partition the \(B\) radial frequency bands \(\{B_b\}_{b=1}^B\) in the discrete \(r\)FFT layout (shape \(H \times W/2 + 1\)) using linear radial boundaries. For each band \(b\) we define :

- Band mask : \(B_b \in \{0,1\}^{H \times W/2 + 1}\) indicating membership.

-

Band size : \(B_b\) denotes the nuber of frequency coefficients in band \(b\).

Bands are constructed using radial frequency \(F(u,v) = \sqrt{u^2 + v^2}\) with edges uniformly spaced between a small positive \(f_{min}\) (to exclude DC component) and \(f_{mac}\) ( Nyquist frequency = 0.5)

Intuition : Excluding the DC component (zero frequency) is critical because it represents the global mean intensity, which dominates the spectrum but carries minimal perceptual information. Including DC in band 0 would artificially inflate its importance \(g_0\) and distort the learned weighting.

Dataset Importance Profiling

For each image \(x\) in the training set, we compute the normalized band power \(\pi_b(x)\) as ;

\[\pi_b(x) = \frac{\sum{}_{k \in B_b} |F(x - \bar{x})(k)|^2 }{\sum^{B-1}_{b^\prime = 0 }\sum{}_{k \in B_{b^\prime}} |F(x - \bar{x})(k)|^2 }\]wher \(\bar{x}\) is the per image mean ( removing DC ). This gives the fraction of total power residing in band \(b\) for image \(x\).

We then compute the dataset-level band distribution by averaging over \(N\) samples:

\[\bar{\pi} = \frac{1}{N} \sum^{N}_{i=1} \pi_b(x_i)\]Note that \(\sum^{B-1}_{b=0} \bar{\pi_b} = 1\) by construction.

Finally, we compute importance weights via inverse-power scaling rule :

\[g_b = \frac{(\bar{\pi_b} + \epsilon )^{-\alpha}}{\frac{1}{B}\sum^{B-1}_{b^\prime=0}(\bar{\pi_{b^\prime}} + \epsilon)^{-\alpha}}\]wher \(\alpha\) controls the strength of variance compensation. The standardization ensures \(g_b\) has zero mean and unit variance, provising a stable range for the softmax reweighting.

Intuition: Bands with low power \(\bar{\pi_b}\)(e.g., high frequencies) receive higher importance \(g_b\) , compensating for their underrepresentation in the data. This prevents the model from neglecting high-frequency reconstruction.

Time-Dependent Soft Band Weighting [check once]

At each timestep \(t∈[0,1]\)t \in [0,1]\(, we compute soft band weights\)w_b(t)$$ via a temperature-scaled softmax with time-frequency ramping:

\[w_b(t) = \frac{exp(\beta.g_b - \gamma.\phi(t).\lambda_b)}{\sum^{B-1}_{b^\prime = 0}exp(\beta.g_b^\prime - \gamma.\phi(t).\lambda_b^\prime)}\]where:

- \(\lambda_b = b/(B-1)\) is the normalized band index \(\lambda_0 = 0, \lambda_{B-1}=1\)

- \(\pi(t) \in [0,1]\) is a temporal ramp.

- \(\beta,\gamma \ge 0\) are the invese temperature hyperparameter.

Early in the diffusion process, the term \(\beta. g_b\) dominates, emphasizing frequencies according to dataset statistics. As \(t\) increases, the ramp \(\phi(t)\) gradually increases the influence of $\gamma\lambda_b$, making the spectrum increasingly uniform.

Stabilization: White Noise Guardrail To ensure stable training during the earliest timesteps, we introduce a white noise mixing schedule:

\[w_b^{\text{mix}}(t) = \begin{cases} \frac{1}{B} & \text{if } t < t_{\text{knee}} \\ (1 - \alpha_{\text{mix}}(t)) \cdot \frac{1}{B} + \alpha_{\text{mix}}(t) \cdot w_b(t) & \text{if } t \geq t_{\text{knee}} \end{cases}\]where \(\alpha_{\text{mix}}(t) = \frac{t - t_{\text{knee}}}{1 - t_{\text{knee}}}\) is a linear blend coefficient and \(t_{\text{knee}} = 0.15\) by default.

Intuition: At very small \(t\), the noised samples \(x_t \approx \beta_t \epsilon\) are nearly pure noise. Enforcing strong spectral shaping here can destabilize training because the model has insufficient signal to learn meaningful structure. By using uniform weights early, we ensure the model first learns to denoise white noise (as in standard DDPM), then gradually transitions to FANS-shaped noise.

FANS Noise Generation

Tying the above mechanisms together to generate the FANS-Noise we get.

Given a sample \(x \in \mathcal{R}^{N \times C \times H \times W}\) and normalised time \(t \in [0,1]\) FANS-Noise \(\epsilon_{FANS}\) is generated as :

Step 1. Compute band weights: \(\{w_b(t)\}^{B-1}_{b=0}\) as discussed above. Step 2. Allocate spectral power:

The total power available in Fourier space must account for the Parseval Relation (It states that total power is time domain must be equal to the total power in the fourier domain). For the forward process, the noise component has pixel-space variance \(\beta_t^2\). We set the \(\sigma_t = 1\), giving us

\(P_{total} = N_p.\sigma^2_t = H.W.1 = H.W\).

Why we go for \(N_p\) (not \(N_p^2\)): The power spectral density relates to the sum of squared Fourier coefficients, not their squared sum. For an \(H \times W\) image, the rFFT produces \(H \times (W/2 + 1)\) complex coefficients. By Parseval’s theorem

\[\sum_{i=1}^{H \cdot W} x_i^2 = \frac{1}{H \cdot W} \sum_{k} |F(x)(k)|^2\]where the factor \(1/(H \cdot W)\) comes from the DFT normalization convention.

We then allocate power to each band according to the learned weights:

\[P_b(t) = w_b(t) \cdot P_{\text{total}} = w_b(t) \cdot H \cdot W\]The per-frequency variance within band \(b\) is obtained by distributing \(P_b\) uniformly across its members:

\[\Sigma_b(t) = \frac{P_b(t)}{|\mathcal{B}_b| + \epsilon}\]where \(\epsilon = 10^{-6}\) prevents division by zero as a numerical safeguard.

Step 3. Draw Complex Gaussian Noise in Fourier Space:

We generate base noise \(Z \in \mathbb{C}^{N \times C \times H \times (W/2+1)}\) by sampling independent real and imaginary components:

\[Z_{\text{real}} \sim \mathcal{N}(0, I), \quad Z_{\text{imag}} \sim \mathcal{N}(0, I)\] \[Z(n, c, h, w) = \frac{1}{\sqrt{2}}(Z_{\text{real}}(n,c,h,w) + i \cdot Z_{\text{imag}}(n,c,h,w))\]The \(1/\sqrt{2}\) factor ensures

\[\mathbb{E}[|Z(k)|^2] = \mathbb{E}[Z_{\text{real}}^2 + Z_{\text{imag}}^2]/2 = 1\]Step 4: Construct Spectral Variance Mask

We build a spatial variance map \(\Sigma_t \in \mathbb{R}^{N \times C \times H \times (W/2+1)}\)that specifies the desired variance at each frequency:

\[\Sigma_t(n,c,h,w) = \sum_{b=0}^{B-1} \Sigma_b(t) \cdot \mathbb{1}_{(h,w) \in \mathcal{B}_b}\]where \(\mathbb{1}_{(h,w) \in \mathcal{B}_b}\) is the indicator function for band membership.

Step 5: Apply Frequency-Dependent Scaling The shaped noise in Fourier space is obtained by element-wise multiplication:

\[E_{\text{shaped}}(n,c,h,w) = \sqrt{\Sigma_t(n,c,h,w) + \epsilon_{\text{safe}}} \cdot Z(n,c,h,w)\]where \(\epsilon_{\text{safe}} = 10^{-12}\) ensures numerical stability when \(\Sigma_t \approx 0\).

Why square root? We are scaling the amplitude of Fourier coefficients. Since power is amplitude squared, to achieve variance \(\Sigma_t(k)\), we need amplitude \(\sqrt{\Sigma_t(k)}\). This follows from:

\[\mathbb{E}[|E_{\text{shaped}}(k)|^2] = \mathbb{E}[|\sqrt{\Sigma_t(k)} \cdot Z(k)|^2] = \Sigma_t(k) \cdot \mathbb{E}[|Z(k)|^2] = \Sigma_t(k)\]Step 6: Inverse Fourier Transform to Pixel Space We apply the inverse real FFT to recover a real-valued noise image:

\[\epsilon_{\text{FANS}}^{\text{raw}} = \text{irfft2d}(E_{\text{shaped}}, s=(H, W))\]where \(s=(H, W)\) specifies the desired output shape and irfft2d is the 2D inverse real FFT.

Step 7: Enforce Unit Variance via Normalization While the Fourier-space construction theoretically preserves variance, discretization effects, numerical precision, and band edge artifacts can cause the pixel-space variance to deviate from unity. To ensure exact compatibility with the forward process \(x_t = \alpha_t z + \beta_t \epsilon\) where \(\mathbb{E}[\epsilon \epsilon^T] = I\), we enforce unit variance per sample and per channel:

\[\mu(n,c) = \frac{1}{H \cdot W} \sum_{h,w} \epsilon_{\text{FANS}}^{\text{raw}}(n,c,h,w)\] \[\sigma^2(n,c) = \frac{1}{H \cdot W} \sum_{h,w} (\epsilon_{\text{FANS}}^{\text{raw}}(n,c,h,w) - \mu(n,c))^2\] \[\epsilon_{\text{FANS}}(n,c,h,w) = \frac{\epsilon_{\text{FANS}}^{\text{raw}}(n,c,h,w) - \mu(n,c)}{\sqrt{\sigma^2(n,c) + \epsilon_{\text{safe}}}}\]Critical importance: This normalization is not optional. Without it, we observed training instabilities where the effective noise magnitude drifted over time, breaking the assumptions of the forward SDE. The normalization ensures:

- Zero mean: \(\mathbb{E}[\epsilon_{\text{FANS}}] = 0\) exactly (not just approximately)

- Unit variance: \(\text{Var}(\epsilon_{\text{FANS}}) = I\) exactly

-

Consistency: \(x_t = \alpha_t z + \beta_t \epsilon_{\text{FANS}}\) has the correct conditional distribution $$p_t(x z)$$

Why per-channel normalization? Color channels may have different effective powers after Fourier shaping due to:

- Boundary effects in rFFT (asymmetric handling of Nyquist)

- Floating-point rounding errors accumulated differently per channel

- Non-uniform distribution of salient features across RGB

Normalizing each channel independently ensures that the model sees noise with identical statistics in all color channels, preventing the network from learning color-dependent denoising biases.

Step 8: Return Shaped Noise The final output \(\epsilon_{\text{FANS}} \in \mathbb{R}^{N \times C \times H \times W}\) satisfies:

- \(\mathbb{E}[\epsilon_{\text{FANS}}] = 0\)(zero mean)

- \(\mathbb{E}[\epsilon_{\text{FANS}} \epsilon_{\text{FANS}}^T] = I\) (unit covariance)

- Frequency band \(b\) contains fraction \(\approx w_b(t)\) of total power

Low frequencies dominate at small \(t\), high frequencies at large \(t\)

This noise can now be used in the forward process:

\[x_t = \alpha_t z + \beta_t \epsilon_{\text{FANS}}\]and in the loss computation:

\[\mathcal{L} = \|u_\theta^t(x_t) - (\dot{\alpha}_t z + \dot{\beta}_t \epsilon_{\text{FANS}})\|^2\]Sampling/ Inference

This algorithm shows the sampling method we used for our proposed approach.

Now that we have the mechanism to get spectral aware shape, we need to see how performs against a baseline. To test this we simulate experiments where high frequency and fine grained information is of primary interest. For this simulation we do a synthetic study. We designed two synthetic datasets PLTB and EGM each constructed to emphasize a distinct spectral profile. All three datasets are generated programmatically as 512×512 images. We discuss these synhtetic datasets in details in the following section

We use this synthetic data because controlled synthetic data provides the ability to vary the distribution of Fourier energy across bands in a principled way and isolate the effect of FANS.

Synthetic Data

PLTB: Power-Law Texture Bank

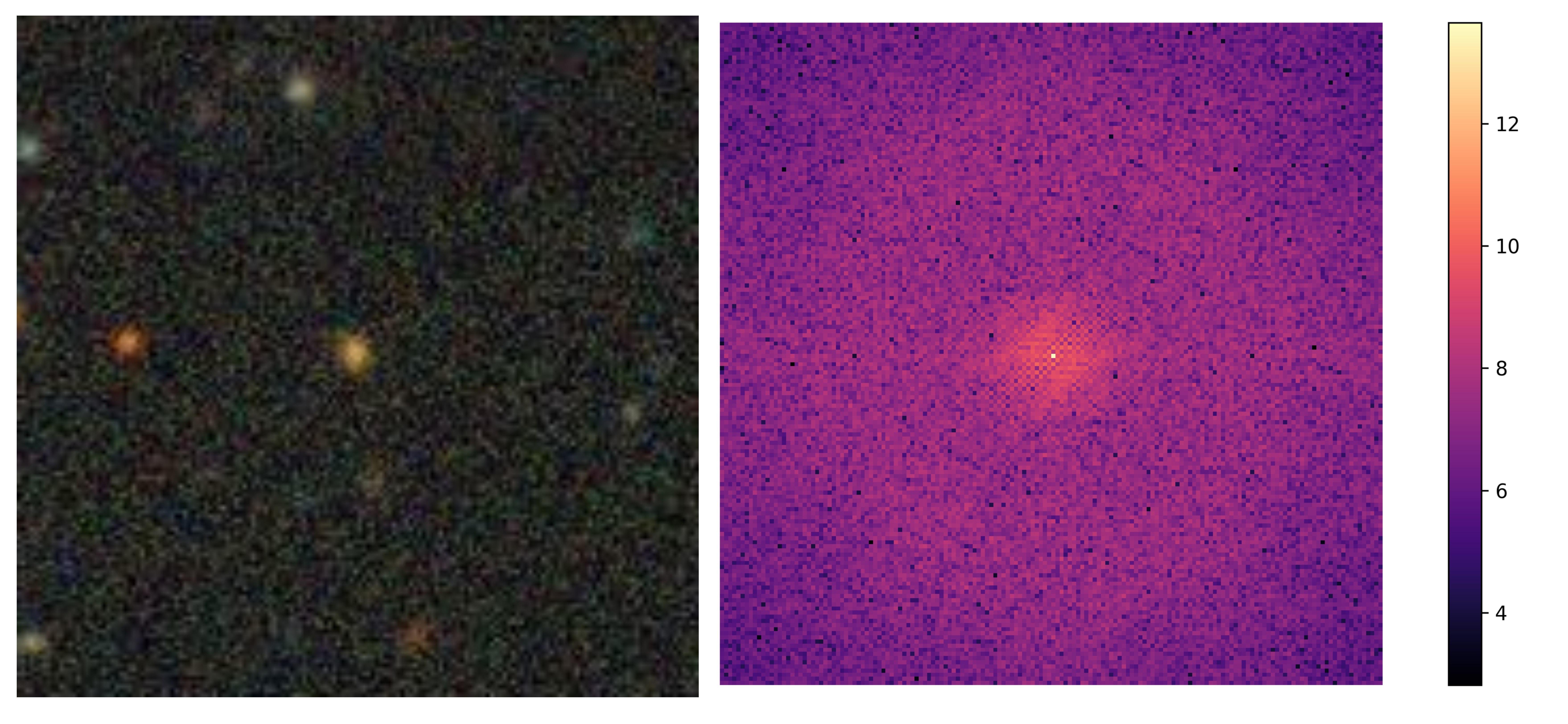

Motivation: PLTB targets the high-frequency regime. In this the entire image is defined through its radial power spectrum. We can see the distribution of spectral mass across bands can be observed in the figure.

Genertation Procedure: Each PLTB sample is generated by:

- Sampling an i.i.d. complex Gaussian field \(Z(k)\) on the half-spectrum

- Applying an amplitude mask:

with slope \(\alpha = 1\)

- Multiplying \(Z(k)\) by \(A(k)\) and applying an inverse FFT to obtain the spatial image.

- Finally, Normalizing brightness and contrast per-sample.

This enables us to isolate testure learning capability of dissusion models. Models with insufficient high-frequency produce visibly smoother samples. A sample is given in figure above

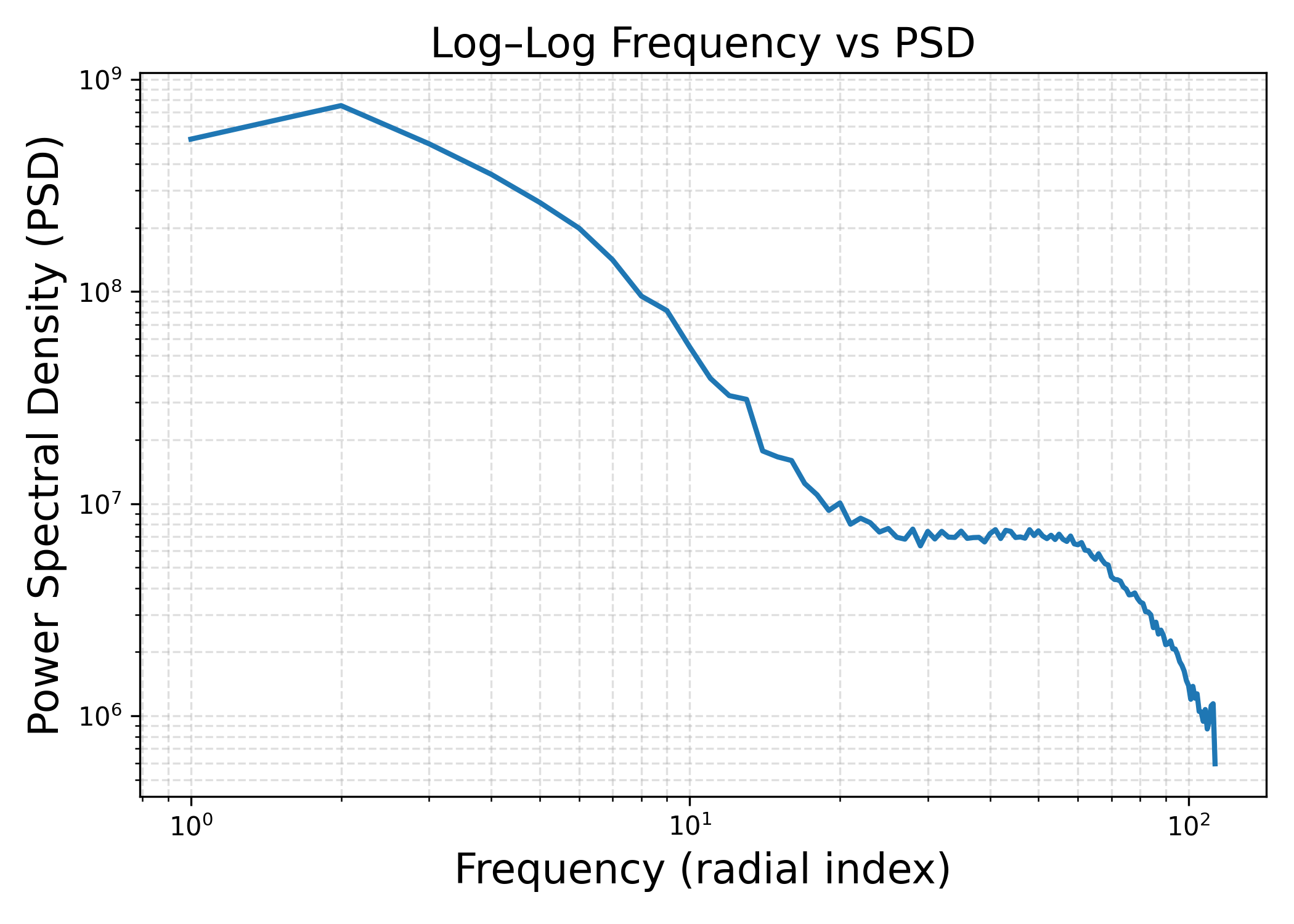

EGM: Edges–Gratings Mixture

Motivation: EGM introduces structured high-frequency content—oriented gratings, checkerboards, and sharp edges—that resemble real-image conditions where fine geometric detail matters. This allows discrete orientation content and mixed spatial primitives, providing a more realistic stress test of whether a model can faithfully reproduce high frequency geometry rather than only stochastic texture.

Generation Procedure: Each EGM image is a mixture of:

- Sinusoidal gratings with random frequency (0.04 − 0.22 cyc/px), orientation, amplitude, and phase.

- Checkerboard patterns, producing orthogonal high-frequency peaks.

- Sparse straight-line segments, introducing broadband edge energy.

Components are randomly combined, and the resulting image is normalized to unit variance. EGM reflects scenarios common in natural images edges, periodic patterns, corner-like junctions—while still enabling controlled frequency manipulation.

The controlled spectral mass distribution across bands of these synthetic datasets allows us to benchmark how FANS perform compared to a standard DDPM when the spectral signature of an image deviates from that of a natural image.

To ensure that adapting to the spectral signature of an image doesn’t affect the performance in natural image, we benchmark FANS against standard DDPM in datasets like CIFAR10 and CelebA.

To show that the synthetic dataset we generated are not entirely an imaginary usecase, we analyse the spectral signature of two real world datasets:

- Multimodal Universe: An astronomy based Dataset

- Texture dataset: A dataset consist of textures.

Results.

When we compare this Frequency Aware method against standard DDPM, we found some interesting results.

Slope Estimation:

A key advantage of FANS over standard DDPM models lies in its ability to accurately capture the spectral characteristics of the data distribution. To quantify this improvement, we evaluate both methods on their capacity to estimate the power-law decay exponent (slope) of the data’s power spectral density.

| Dataset | Original Slope | FANS | DDPM |

|---|---|---|---|

| PLTB | 1.002 | 1.394 | 3.566 |

| EGM | 0.989 | 1.121 | 2.566 |

| Multimodal Universe | 1.229 | 1.454 | 3.515 |

| Texture Data | 1.121 | 1.618 | 2.178 |

| CIFAR10 | 2.848 | 2.512 | 2.733 |

we compute the mean absolute error (MAE) between the estimated and ground-truth spectral slopes across all datasets in Table above. FANS achieves a substantially lower MAE (0.3165) compared to DDPM (1.5197). This difference is stable across datasets spanning texture-rich synthetic domains (PLTB, EGM) and real natural image statistics (CIFAR-10).

To quantify statistical reliability, we treat each dataset entry as an independent paired comparison between FANS and DDPM errors. A paired t-test on the absolute errors yields a significant difference (t = −4.92, p < 0.01). Instead, they reflect a systematic reduction in spectral-slope distortion.

Spectral Band Analysis: As FANS is designed to schedule noise based on the spectral property of the dataset, we perform a spectral analysis between the dataset and the images generated by the baseline and FANS.

Figure: Shows the PSD over the frequency bands of three datasets (a) Multimodal Universe Dataset, (b) PLTB Dataset ad (c) EGM Dataset. It also shows how the PSD of the real datasets (green) and generated images are distributed over the frequency bands for both the FANS (Blue) and Standard DDPM ( Baseline ) model (Red)

The plot shows the Power Spectral Density per Frequency Band for two synthetic dataset (PLTB and EGM) and a real world dataset ( Multimidal Universe). It shows that both the method could capture the spectral signature of the dataset, and understand how the PSD is distributed across the frequency bands. However the baseline ( Standard DDPM ) tends to concentrate the PSD within few frequency bands, while FANS ** distribute the PSD as per the dataset characteristics** and are more in agreement with the dataset spectral characteristic.

Metrics. To quantify spectral fidelity we use: (1) Jensen-Shannon (JS) divergence between generated and real PSD distributions (lower is better), (2) per-band correlation.

Using Jensen-Shannon divergence (JSD), we can see that the PSD distribution across frequency bands of FANS are much closer to the actual dataset as compared to the baseline on all the three datasets.

| Dataset | JSD(FANS) | JSD(Baseline) |

|---|---|---|

| EGM | 0.0308 | 0.1276 |

| PLTB | 0.0103 | 0.0804 |

| Universe | 0.0088 | 0.1041 |

Across the datasets FANS shows a stronger correlation bandwise compared to the basseline. This shows the correlation between the bands of the real dataset and the samples generated. FANS has much higher bandwise correlation across both the synthetic and real dataset.

| Dataset | Correlation(FANS) | Correlation(Baseline) |

|---|---|---|

| EGM | 0.911 | 0.672 |

| PLTB | 0..968 | 0.522 |

| Universe | 0.877 | 0.532 |

Qualitative Analysis:

On PLTB, standard DDPM exhibits catastrophic failure, generating samples visually indistinguishable from Gaussian noise, while FANS produces recognizable structures matching the data distribution. Quantitative analysis shows DDPM samples have near-zero correlation with real data features.

For EGM, both methods generate stable images, but FANS preserves fine-grained textures absent in DDPM outputs. Specifically, the characteristic cross-hatched patterns present in training data are preserved by FANS but smoothed out by DDPM. This suggests FANS better captures high-frequency components critical for texture fidelity.

To maintain the validity of our experiment all the hyperparameter setting for both the baseline and FANS training and sampling are kept identical. All experiments were performed with T = 1000 sampling steps

To show that, the performance gains translates to real world setting as well, we show the performance comparison on real world datasets.

Figure: Qualitative samples for Multimodal Universe Dataset

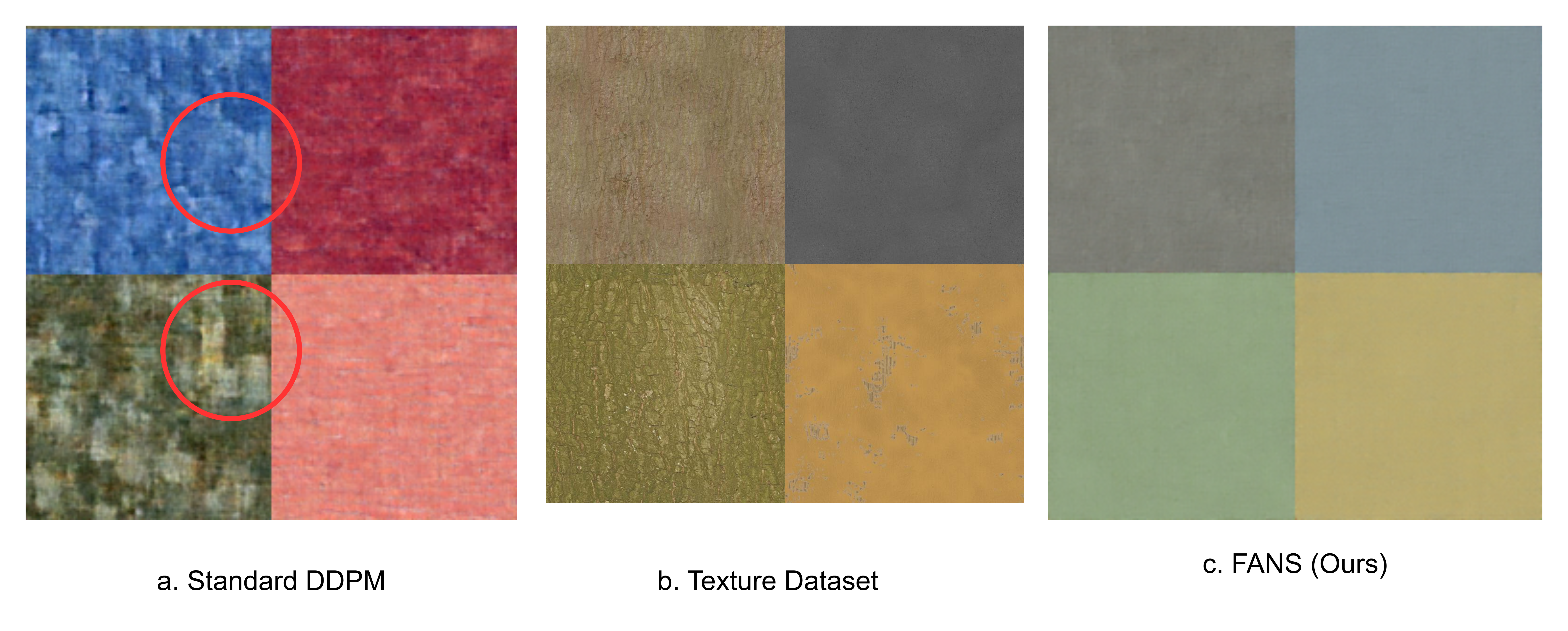

Figure: Qualitative samples for Texture Dataset

From the Figure we can see that FANS could capture the intricate details of the Multimodal Universe Dataset (FID: 10.003) while Baseline method couldn’t capture such intricacies (FID: 24.012). Similar observation can be made for the texture dataset. The baseline model intriduces a lot of atrifacts in the attempt to capture the texture details, while FANS could easilyt capture the intricate details of the dataset.

To ensure that FANS is not only adapting to these high frequency dominated datasets, we capre the FID score of FANS with stand DDPM ( Baseline ) models on CIFAR10 and CelebA datasets.

| Schedule | CIFAR10 (50) | CIFAR10 (100) | CIFAR10 (200) | CIFAR10 (1000) | CelebA (50) | CelebA (100) | CelebA (200) | CelebA (1000) |

|---|---|---|---|---|---|---|---|---|

| DDPM | 17.36 | 17.10 | 16.82 | 16.07 | 11.01 | 8.27 | 8.11 | 8.26 |

| FANS | 16.08 | 16.11 | 15.04 | 14.19 | 13.18 | 12.10 | 10.15 | 10.10 |

Conclusion

In this blog we aimed to analyse and present a principled approach to approach to addressing spectral bias in diffusion models through dynamic, dataset-aware noise scheduling. By leveraging the spectral characteristics of training data to construct frequency-dependent noise distributions, FANS enables models to allocate denoising capacity more efficiently across the frequency spectrum. Through rigorous experiments on synthetic datasets with known spectral characteristics (PLTB and EGM), we demonstrate that FANS consistently improves sample quality compared to vanilla DDPM baselines, particularly for datasets with pronounced high-frequency content. The method’s ability to learn dataset-specific frequency priorities and dynamically adjust noise shaping over time represents a meaningful step toward more adaptive and efficient diffusion training.

However, our work also reveals important limitations and directions for future investigation. In the current implementation the computational overhead of spectral profiling and per-sample noise generation, while manageable, adds complexity to the training pipeline.

Future work should focus on several key areas: extending FANS to high-resolution natural images and validating its benefits on large-scale datasets like ImageNet, exploring integration with modern architectures like diffusion transformers, and investigating the interplay between FANS and other recent advances such as flow matching and consistency models. Additionally, theoretical analysis of FANS’s convergence properties and its relationship to other forms of adaptive noise scheduling would strengthen the mathematical foundations of the approach.

Despite these challenges, FANS demonstrates that incorporating dataset-specific spectral information into the noise generation process can meaningfully improve diffusion model training. As the field continues to push toward higher-resolution, higher-fidelity generation, methods that efficiently allocate model capacity across frequency bands will become increasingly important. We hope this work inspires further exploration of adaptive, data-driven approaches to noise scheduling in generative models.

Enjoy Reading This Article?

Here are some more articles you might like to read next: