Dynamic Parameter Reuse Augments Reasoning via Latent Chain of Thought

Standard language models often rely on massive parameter counts for their performance, using each parameter only once per inference pass. This motivates recurrent structures, in which models reuse parameters across sequential time, depth, or training progression to improve performance and reduce training cost. We draw connections across the landscape of parameter reuse, from growing models via stacking to recurrent looping, and postulate that these architectural priors act as a form of Latent Chain of Thought (LCoT), allowing models to reason in a continuous state space. By shifting towards deeper and dynamic computation, grown and recurrent architectures offer a path toward improved reasoning in compact networks, potentially surpassing the scaling laws of standard architectures.

Recur, Refine, Reason.

Introduction

In the current AI era, progress has been dominated by scale: increase the model size and thus the cost in exchange for improved performance

By decoupling parameter count from computation, parameter reuse within the architecture lets training take place in a reduced search space, forcing the model to learn generalizable functions within the repeated modules. A classic example is Convolutional Neural Networks (CNNs)

We are now seeing a similar renaissance of reuse, in both architectural elements and training methods, burgeoning in sequence modeling, but in different and so far unconnected flavors. In the taxonomy of AI architectures, this blog post focuses on the order containing Transformers and (Time) Recurrent Architectures, including State Space Models (SSMs), for sequence modeling. Broadly, such models process and generate sequences of data through a stack of layers, often autoregressively. These layers follow repeated block patterns across depth but are individually parametrized. We will discuss how blocks of these layers may be looped, grown, and reused to unlock efficiency and, crucially, reasoning capabilities. The intent of this blog post is to draw parallels between such flavors of recurrence and related methods across various axes: both those inherent to particular architectural genera, such as time recurrence, and those that can augment any model within this order that follows the blocked layer structure. These strategies have so far mostly been considered in isolation, but examining their analogues through a unified lens may unlock further synergies.

Reuse in Time vs. Depth

To understand recent advances in looping and reuse, we first distinguish between two orthogonal axes of recurrence: over the input sequence (Time) and over the computation (Depth).

The Time Axis (RNNs and SSMs)

This is the classical domain of Recurrent Neural Networks (RNNs), including models containing Long Short-Term Memory modules (LSTMs) and Gated Recurrent Units (GRUs). Here, the model reuses the same function $f_\theta$ at every position in the sequence. A latent state $h_{t-1}$, representing a compressed memory of the past, is fed along with the current token $x_t$ into the network to produce $h_t$ (possibly in addition to an output $y_t$).
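To make the time axis concrete, here is a minimal sketch of the recurrence $h_t = f_\theta(h_{t-1}, x_t)$ in NumPy. It assumes a plain tanh (Elman-style) cell. The names `rnn_cell` and `run_rnn` are illustrative and do not come from any library. LSTMs and GRUs replace this cell with gated variants.

```python
import numpy as np

def rnn_cell(h_prev, x_t, W_h, W_x, b):
    # f_theta: the same weights (W_h, W_x, b) are applied at every time step
    return np.tanh(W_h @ h_prev + W_x @ x_t + b)

def run_rnn(xs, W_h, W_x, b):
    """Unroll one shared cell over the sequence and return every hidden state h_t."""
    h = np.zeros(W_h.shape[0])          # h_0: empty memory
    states = []
    for x_t in xs:                      # time axis: one step per token
        h = rnn_cell(h, x_t, W_h, W_x, b)
        states.append(h)
    return np.stack(states)

rng = np.random.default_rng(0)
d_h, d_x, T = 4, 3, 5
W_h = rng.normal(scale=0.5, size=(d_h, d_h))
W_x = rng.normal(scale=0.5, size=(d_h, d_x))
b = np.zeros(d_h)
xs = rng.normal(size=(T, d_x))

H = run_rnn(xs, W_h, W_x, b)
print(H.shape)                           # (5, 4)
print(W_h.size + W_x.size + b.size)      # 32 parameters, regardless of T
```

The parameter count stays fixed while the number of steps grows with the sequence. Each step must also wait for the previous one, and this sequential dependency is what makes RNN training hard to parallelize.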

While Transformers largely overtook RNNs due to the latter’s training inefficiencies, such as the inability to parallelize across the sequence, the concept has returned in State Space Models (SSMs)
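The toy sketch below shows one way SSMs sidestep that training bottleneck. It assumes a linear time-invariant recurrence with a diagonal state matrix. Under that assumption, the step-by-step scan and an unrolled convolution produce identical outputs, and the convolution can be computed for all positions in parallel. Practical SSMs such as S4 or Mamba add discretization and structured parametrizations, none of which are modeled here. Mamba's input-dependent parameters also rule out the convolutional form, so it relies on a parallel scan instead. The function names are illustrative.

```python
import numpy as np

def ssm_scan(xs, a, B, C):
    """Recurrent form: h_t = a * h_{t-1} + B x_t, y_t = C h_t (diagonal state matrix a)."""
    h = np.zeros_like(a)
    ys = []
    for x_t in xs:                       # sequential, like an RNN
        h = a * h + B @ x_t
        ys.append(C @ h)
    return np.stack(ys)

def ssm_conv(xs, a, B, C):
    """Convolutional form: y_t = sum_k K_k x_{t-k} with kernel K_k = C diag(a)^k B."""
    T = len(xs)
    K = [C @ (a[:, None] ** k * B) for k in range(T)]   # precomputed kernel
    # every output position is independent given K, so this loop parallelizes
    return np.stack([sum(K[k] @ xs[t - k] for k in range(t + 1)) for t in range(T)])

rng = np.random.default_rng(1)
d_in, d_state, d_out, T = 2, 6, 3, 8
a = rng.uniform(0.5, 0.99, size=d_state)     # stable decay rates
B = rng.normal(size=(d_state, d_in))
C = rng.normal(size=(d_out, d_state))
xs = rng.normal(size=(T, d_in))
print(np.allclose(ssm_scan(xs, a, B, C), ssm_conv(xs, a, B, C)))  # True
```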

Note that such models still perform a fixed amount of computation per token. This limitation may be bypassed via Chain-of-Thought (CoT)

The Depth Axis (Looping)

Instead of recurring across time, looped models recur over computational depth for the current token. The most basic approach loops the entire model (or specific blocks) on the same input representation multiple times.

This traces back to Universal Transformers
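Below is a minimal sketch of depth recurrence. It uses a toy residual MLP block as a stand-in for a transformer block. A single `core` block is applied `n_loops` times to the same token representation, so depth grows while the parameter count stays fixed. Some looped models also use unshared input and output blocks, and the sketch includes an optional prelude and coda to match. All function names are illustrative.

```python
import numpy as np

def make_block(d, rng, step=0.1):
    """Toy residual block h -> h + step * W2 tanh(W1 h); returns (fn, n_params)."""
    W1 = rng.normal(scale=d ** -0.5, size=(d, d))
    W2 = rng.normal(scale=d ** -0.5, size=(d, d))
    return (lambda h: h + step * W2 @ np.tanh(W1 @ h)), W1.size + W2.size

def looped_forward(x, prelude, core, coda, n_loops):
    h = prelude(x)                 # unshared input block
    for _ in range(n_loops):       # depth recurrence: identical weights each iteration
        h = core(h)
    return coda(h)                 # unshared output block

rng = np.random.default_rng(0)
d = 8
prelude, p_pre = make_block(d, rng)
core, p_core = make_block(d, rng)
coda, p_coda = make_block(d, rng)
x = rng.normal(size=d)

shallow = looped_forward(x, prelude, core, coda, n_loops=2)
deep = looped_forward(x, prelude, core, coda, n_loops=16)   # e.g. more loops at inference
print(p_pre + p_core + p_coda)   # 384 parameters for both calls
```

Because `n_loops` is just an argument, the same weights can be run for more iterations at inference time. Whether extra iterations actually help depends on how the model was trained.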

Generally, most methods either loop immediately from initialization or only loop after the full pretraining as a method of inference-time scaling. Next, we will explore related methods that yield similar effects to looping but utilize dynamically deepening architectures across their training lifecycle.

Implicit Looping

If depth is the goal, how else can we reach it without the apparent training instability and cost of massively deep networks?

At initialization, signal propagation in a very deep network is hindered compared to a shallow one. While residual connections solve the gradient vanishing problem to an extent

At equal training steps or FLOPs, smaller models tend to train much more efficiently at first but plateau early. Intra-training model growth strategies try to follow the rapid training curve of a small model, then expand the dimensionality of the loss landscape. This lets training escape the plateau and approach the curve of the larger architecture trained from scratch, while saving training cost.

Given our focus on depth recurrence, the following subsection centers on depth growth (and particularly on one established strategy for it), although models may also be grown in other dimensions

Depth Growth via Stacking

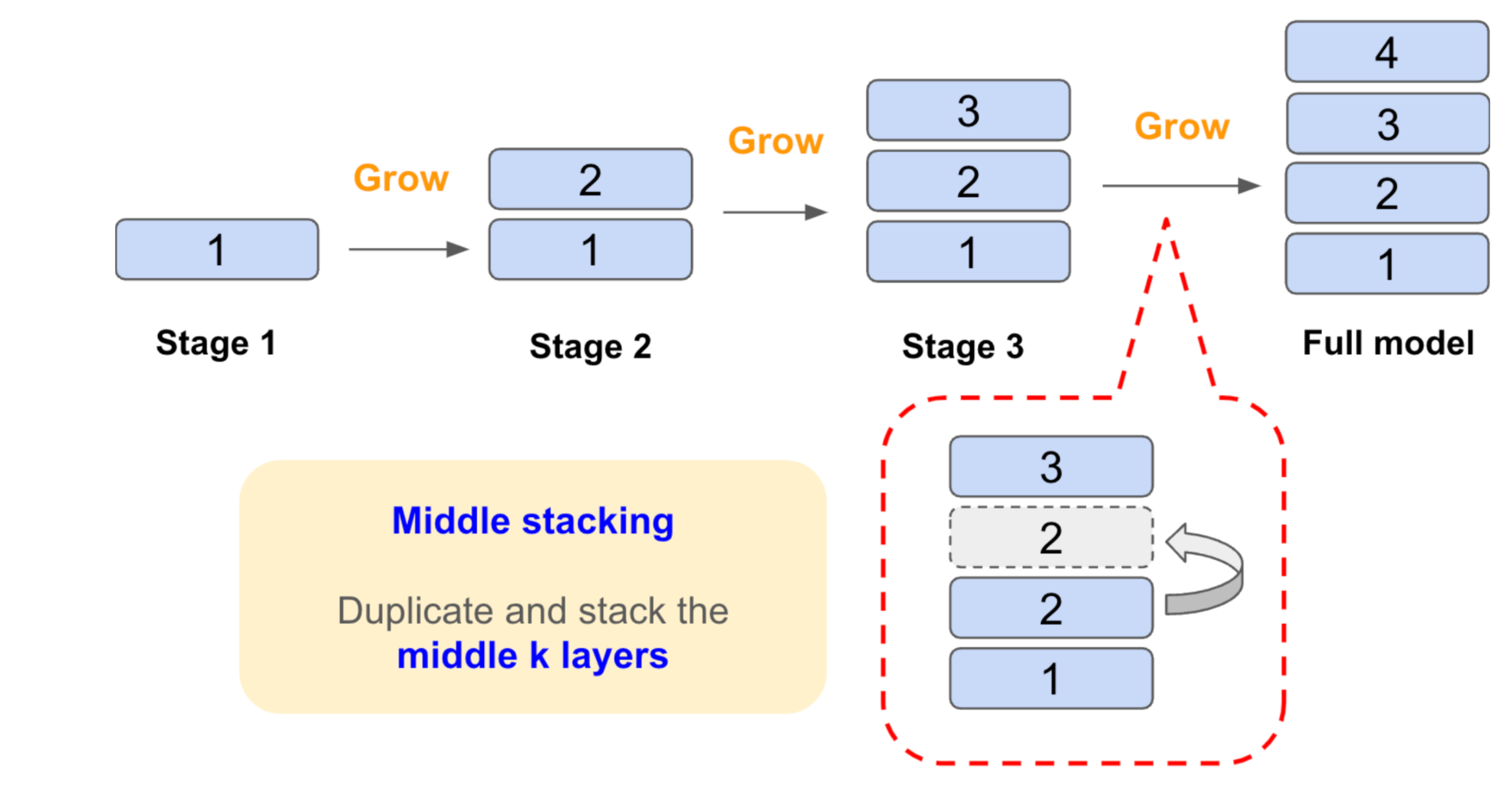

Depth growth, when accomplished by stacking gradually during training, can effectively be viewed as “progressive initialization via looping”. MIDAS (Middle Gradual Stacking) proposed by Saunshi et al.

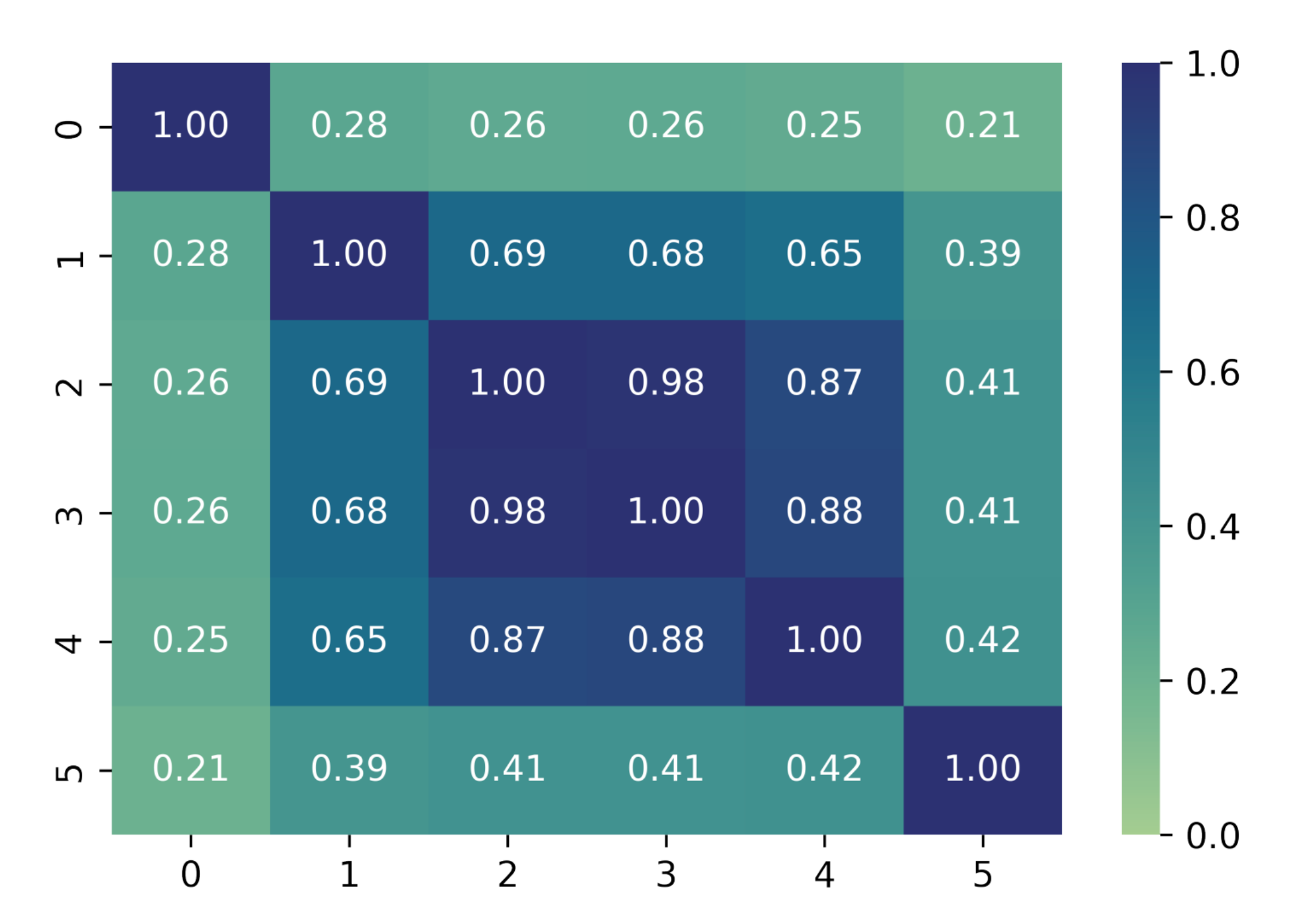

Because parameters are deep-copied instead of merely shared, they may diverge upon further training. Each block thus shares at least part of its training history with other blocks throughout the depth of the network. The weight similarity between blocks of a MIDAS-grown model is depicted above. Blocks near the middle of the network were forked more recently than those at the beginning and end, and thus have more similar weights.
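The sketch below imitates the mechanism behind this similarity pattern, under heavy simplifying assumptions:

- Each block is a single weight matrix.
- Each growth stage deep-copies one middle block and inserts the copy beside it.
- "Training" between stages is replaced by independent random drift.

The actual MIDAS schedule is not reproduced: the number of layers copied per stage, the stage lengths and the real gradient updates all differ. `grow_middle`, `fake_training` and `cosine_similarity_matrix` are hypothetical helpers.

```python
import copy
import numpy as np

def grow_middle(blocks, block_size=1):
    """Deep-copy `block_size` blocks from the middle and insert the copies right after them."""
    mid = len(blocks) // 2
    start = max(0, mid - block_size // 2)
    end = start + block_size
    copies = copy.deepcopy(blocks[start:end])   # independent copies: free to diverge later
    return blocks[:end] + copies + blocks[end:]

def fake_training(blocks, rng, scale=0.5):
    """Stand-in for a training phase: every block drifts independently."""
    return [w + scale * rng.normal(size=w.shape) for w in blocks]

def cosine_similarity_matrix(blocks):
    flat = np.stack([w.ravel() for w in blocks])
    flat /= np.linalg.norm(flat, axis=1, keepdims=True)
    return flat @ flat.T

rng = np.random.default_rng(0)
blocks = [rng.normal(size=(16, 16))]     # start from a one-block model
for _ in range(5):
    blocks = fake_training(blocks, rng)  # train at the current depth
    blocks = grow_middle(blocks)         # then grow by one block in the middle
blocks = fake_training(blocks, rng)

S = cosine_similarity_matrix(blocks)
print(len(blocks))      # 6
print(np.round(S, 2))   # pairs forked more recently should tend to be more similar
```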

Such initialization of each newly inserted block provides an inductive bias as the model continues training at the increased depth. The final model is a standard single-pass network (albeit trained more efficiently), but the initialization scheme simulates soft looping with refinement due to the shared training histories. This style of depth stacking therefore yields a model akin to a looped model whose loop depth grows over the course of training. Each loop's parametrization is most similar among the middle blocks and most specialized for the initial and final blocks. This patterning is also seen in looping methods that utilize a prelude and/or coda

Other depth-growth methods stack the entire sequence of layers, multiplying the depth at each growth step
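For contrast, here is a sketch of whole-model stacking using the same list-of-blocks representation. Every layer is deep-copied and appended in order, so layer $i$ and layer $i + L$ start out identical. This resembles looping the entire network once. `grow_full_stack` is a hypothetical helper, and the growth factor of 2 is only an example.

```python
import copy
import numpy as np

def grow_full_stack(blocks, factor=2):
    """Multiply depth by `factor` by appending deep copies of the whole layer sequence."""
    grown = list(blocks)
    for _ in range(factor - 1):
        grown += copy.deepcopy(blocks)
    return grown

layers = [np.full((2, 2), float(i)) for i in range(3)]   # toy layers tagged 0, 1, 2
grown = grow_full_stack(layers)
print([int(w[0, 0]) for w in grown])   # [0, 1, 2, 0, 1, 2]
```

Unlike middle stacking, every layer is duplicated at each growth step. Right after growth, the similarity structure is therefore periodic across the whole depth rather than concentrated in the middle.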

Induced Looping via Surgery

Depth growth via stacking is not the only strategy to achieve this loop-like effect. Bae et al.

These surgery techniques induce (soft) looping across the depth axis, where intermediate layers now share weights. During subsequent finetuning, the model trains within the constrained parameter space, enforcing this recurrence in the final architecture and at inference time. Other surgery techniques initialize deeper models from trained shallow ones, but without weight-tying constraints during further training
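The sketch below shows one way such surgery could look, under assumptions of our own. A pretrained stack of `L` weight matrices is converted into a block of `block_len` shared layers, looped `L / block_len` times. Each shared slot is initialized as the average of the layers it replaces. Each original layer keeps a rank-`r` offset obtained from a truncated SVD of its residual. This follows the spirit of relaxed (soft) weight tying, but it is not a faithful reproduction of Bae et al. The averaging rule, the SVD-based offsets and all function names are illustrative choices.

```python
import numpy as np

def tie_layers(layers, block_len):
    """Average layers occupying the same slot in each loop to get the shared block."""
    assert len(layers) % block_len == 0, "depth must be a multiple of block_len"
    n_loops = len(layers) // block_len
    return [np.mean([layers[r * block_len + j] for r in range(n_loops)], axis=0)
            for j in range(block_len)]

def low_rank_offsets(layers, shared, rank):
    """Per-layer factors (A, B) so that shared[i % block_len] + A @ B approximates layers[i]."""
    block_len = len(shared)
    offsets = []
    for i, W in enumerate(layers):
        U, s, Vt = np.linalg.svd(W - shared[i % block_len], full_matrices=False)
        offsets.append((U[:, :rank] * s[:rank], Vt[:rank]))   # best rank-r approximation
    return offsets

def relaxed_weight(i, shared, offsets):
    A, B = offsets[i]
    return shared[i % len(shared)] + A @ B

rng = np.random.default_rng(0)
layers = [rng.normal(size=(6, 6)) for _ in range(8)]   # stand-in for pretrained layers
shared = tie_layers(layers, block_len=4)               # 4 shared layers, looped twice

def reconstruction_error(rank):
    offs = low_rank_offsets(layers, shared, rank)
    return sum(np.linalg.norm(relaxed_weight(i, shared, offs) - W) for i, W in enumerate(layers))

for r in (0, 2, 6):
    print(r, round(float(reconstruction_error(r)), 3))  # rank 0 = strict tying, rank 6 = exact
```

Rank 0 gives a strictly weight-tied loop. Increasing the rank relaxes the tie toward the original untied model, and this is the knob that lets soft-looped layers specialize.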

Both progressive stacking and parameter surgery are effective strategies for imposing a recurrent prior onto models: stacking achieves this by gradual growth during pre-training, while surgery does so by structurally modifying a pre-trained vanilla model.

Depth Recurrence as Latent Chain of Thought

Beyond efficiency, a rather exciting implication of looping and parameter reuse is its potential for reasoning.

In standard Chain-of-Thought (CoT)

Looping enables the model to reason entirely within the continuous embedding space, avoiding the ‘lost in translation’ effect caused by the discretization noise inherent in token generation. In this regime, the input to the repeated block comes directly from the previous iteration’s latent state. Through superposition and uncertainty, this latent state may represent multiple conflicting reasoning paths simultaneously. It can refine the probability distribution continuously rather than committing to a single, potentially erroneous branch early in the derivation. This ability to hold multiple hypotheses within the vector space is a key advantage, since it circumvents the information loss associated with discrete token sampling.
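Here is a toy illustration of the information-loss argument. It assumes a 5-token vocabulary with random embeddings and a next-token distribution split between two hypotheses. Committing to the argmax token feeds back a single embedding. Feeding back a continuous state keeps both hypotheses recoverable. As a simple stand-in for that state, the sketch uses the probability-weighted mixture of embeddings. Real latent-reasoning models feed back hidden states rather than this mixture, so the setup is purely illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)
V, d = 5, 16                                  # toy vocabulary size and embedding width
E = rng.normal(size=(V, d))                   # one embedding row per token
p = np.array([0.48, 0.47, 0.03, 0.01, 0.01])  # two competing reasoning branches

discrete_state = E[np.argmax(p)]   # explicit CoT: commit to one token
latent_state = p @ E               # continuous state: superposition of branches

def recover_weights(state, E):
    """Least-squares w with w @ E ≈ state (exact here because the rows of E are independent)."""
    w, *_ = np.linalg.lstsq(E.T, state, rcond=None)
    return w

print(np.round(recover_weights(discrete_state, E), 2))  # ≈ one-hot: the second branch is gone
print(np.round(recover_weights(latent_state, E), 2))    # ≈ p: both branches survive
```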

Saunshi et al.

Depth growth via stacking and induced soft looping present an even more powerful evolution of LCoT. By initializing layers as copies (stacking) during depth growth, or by sharing parameters with learned offsets (soft looping), we start with the useful inductive bias of a loop but allow for computational specialization throughout the depth of recurrence. The model can thus learn transformations specialized to different stages of the softly recurrent reasoning process, which is impossible in a strictly weight-tied looped model. For example, early layers may focus on feature extraction and later layers on logical inference. The weight similarity pattern of MIDAS reflects this depth-wise specialization.

Ultimately, this suggests that depth recurrence is not just a compression trick, but a functional analogue to time recurrence operating in a richer, continuous, and potentially adaptive design space.

Sequential Refinement

To complement refinement in the depth axis, how can refinement be useful in the token axis? Most models are autoregressive: they process and generate one token at a time. This “hard commit” nature of autoregressive decoding means that earlier errors cannot be corrected based on later context, limiting the quality of sequential generation. To overcome this, sequential refinement strategies may be employed to allow the model to review and refine tokens. This is particularly effective on latent depth-recurrent models

Geiping et al.

Hierarchical Reasoning Models

In between sequential refinement and explicit CoT, a simple approach lies in the use of a “pause” token
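Here is a sketch of how pause tokens can be spliced into a training example. It assumes a hypothetical `<pause>` token string and a loss mask that only scores answer tokens. Each pause position gives the model another forward pass over the sequence before it must emit the answer. The token name and masking convention are illustrative rather than taken from a specific paper's implementation.

```python
PAUSE = "<pause>"   # hypothetical special token

def with_pauses(prompt, answer, n_pauses):
    """Insert n_pauses pause tokens between prompt and answer.

    Returns the token list and a 0/1 loss mask: only answer positions are trained on,
    so the model is never asked to predict anything meaningful at the pauses.
    """
    tokens = prompt + [PAUSE] * n_pauses + answer
    loss_mask = [0] * (len(prompt) + n_pauses) + [1] * len(answer)
    return tokens, loss_mask

tokens, mask = with_pauses(["2", "+", "3", "="], ["5"], n_pauses=3)
print(tokens)  # ['2', '+', '3', '=', '<pause>', '<pause>', '<pause>', '5']
print(mask)    # [0, 0, 0, 0, 0, 0, 0, 1]
```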

Collectively, these methods demonstrate that the token axis need not be a one-way street. Allowing the model to ‘look back’ or ‘pause’ introduces a temporal buffer that enables refinement along the sequence axis, much as depth stacking and soft looping enable it along the computational depth axis.

Recursion and Hierarchicality

While sequential refinement allows a model to “polish” its recent output by looking back, it typically remains constrained to the flat, linear granularity of the token stream. However, complex reasoning is rarely linear but rather structural: a reasoning model may benefit from processing and organizing information hierarchically rather than purely sequentially. This is already reflected in the “step-by-step” fashion of explicit CoT. Just as LCoT internalizes the computational-depth benefit of CoT, this motivates a shift from recurrence over raw tokens to recursion over levels of abstraction.

Hierarchical sequence models break the monotony of the single token stream. The Hierarchical Joint-Embedding Predictive Architecture (H-JEPA)

This philosophy extends to language and continuous sequence modeling through architectures that explicitly decouple global context from local generation

As discussed previously, Hierarchical Reasoning Models
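Below is a minimal sketch of the two-timescale recurrence associated with Hierarchical Reasoning Models. Toy tanh updates stand in for the transformer modules. A fast low-level state is updated `T` times for every single update of a slow high-level state. Module names, update rules and the call counters are illustrative, and the training details of the original work are not modeled.

```python
import numpy as np

def hierarchical_recurrence(x, f_low, f_high, z_low, z_high, n_cycles, T):
    """Fast low-level updates nested inside slow high-level updates."""
    for _ in range(n_cycles):
        for _ in range(T):
            z_low = f_low(z_low, z_high, x)   # fine-grained steps, conditioned on the plan
        z_high = f_high(z_high, z_low)        # plan revised once per cycle
    return z_low, z_high

rng = np.random.default_rng(0)
d = 8
W = {k: rng.normal(scale=d ** -0.5, size=(d, d)) for k in ("ll", "lh", "lx", "hh", "hl")}
calls = {"low": 0, "high": 0}

def f_low(z_low, z_high, x):
    calls["low"] += 1
    return np.tanh(W["ll"] @ z_low + W["lh"] @ z_high + W["lx"] @ x)

def f_high(z_high, z_low):
    calls["high"] += 1
    return np.tanh(W["hh"] @ z_high + W["hl"] @ z_low)

x = rng.normal(size=d)
z_low, z_high = hierarchical_recurrence(x, f_low, f_high, np.zeros(d), np.zeros(d),
                                        n_cycles=4, T=5)
print(calls)   # {'low': 20, 'high': 4}
```

The effective depth is `n_cycles * T` low-level steps plus `n_cycles` high-level steps, all produced by only two sets of weights.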

Establishing such hierarchies naturally raises the question of resource allocation: if the model possesses different levels of abstraction, must every input generically pass through every level? This leads us directly to the concept of conditional computation.

Dynamic Routing

Another axis that breaks the hard-coded paradigm is dynamic routing. This family of techniques customizes the computational path for each input based on its content, seeking to enhance both efficiency and capacity via specialization. One of the best-known dynamic routing techniques is Mixture-of-Experts (MoE)
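Here is a sketch of top-k token routing in a Mixture-of-Experts layer. It assumes linear experts, a linear gate and a softmax renormalized over the selected experts. That is one common convention; others apply the softmax before selection. Load-balancing losses are omitted. Only the `k` chosen experts are evaluated, which is how MoEs increase capacity without increasing per-token compute.

```python
import numpy as np

def moe_forward(x, W_gate, experts, k=2):
    """Route one token vector x to its top-k experts and mix their outputs."""
    logits = W_gate @ x                        # one routing score per expert
    top = np.argsort(logits)[::-1][:k]         # indices of the k highest scores
    gates = np.exp(logits[top] - logits[top].max())
    gates /= gates.sum()                       # softmax over the selected experts only
    out = sum(g * experts[i](x) for g, i in zip(gates, top))
    return out, top

rng = np.random.default_rng(0)
d, n_experts = 4, 8
W_gate = rng.normal(size=(n_experts, d))
expert_weights = [rng.normal(size=(d, d)) for _ in range(n_experts)]
experts = [lambda v, W=W: W @ v for W in expert_weights]   # bind each W at creation

x = rng.normal(size=d)
y, chosen = moe_forward(x, W_gate, experts, k=2)
print(chosen)   # the two experts this token was routed to
```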

Input-dependent layer dropping pushes dynamic routing further by customizing the computational depth per token. Early-exit strategies aim to accelerate inference in large autoregressive models by allowing tokens to skip later blocks. Depth-recursive models are even more naturally suited to modulating effective depth per token, since the number of loop iterations can simply vary from token to token.
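Below is a sketch of per-token adaptive depth in a looped model. It assumes a toy contractive block and a simple halting rule: stop once the hidden state changes by less than `tol`, or after `max_loops` iterations. Common alternatives are learned halting, such as the adaptive computation time used in Universal Transformers, and confidence-based exits. The block, the rule and the names are illustrative.

```python
import numpy as np

def adaptive_depth(x, W, tol=1e-3, max_loops=50):
    """Loop a shared block on one token until its state stops changing; return (h, loops used)."""
    h = np.zeros_like(x)
    for n in range(1, max_loops + 1):
        h_new = 0.5 * np.tanh(W @ h + x)      # shared weights W on every iteration
        if np.linalg.norm(h_new - h) < tol:   # converged: exit early for this token
            return h_new, n
        h = h_new
    return h, max_loops

rng = np.random.default_rng(0)
d = 8
W = rng.normal(size=(d, d))
W /= np.linalg.norm(W, 2)     # spectral norm 1, so each update is a contraction (factor <= 0.5)

tokens = rng.normal(size=(4, d)) * np.array([[0.01], [0.1], [1.0], [3.0]])
for x in tokens:
    _, n = adaptive_depth(x, W)
    print(n)   # the number of loops used varies per token
```

Because the update is a contraction, successive changes shrink geometrically. A looser `tol` can therefore only make a token exit earlier, never later.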

This optimization introduces significant complications, particularly in maintaining the Key-Value (KV) cache. Autoregressive attention-based models use this cache to store the keys and values computed by each attention layer for previously generated tokens, which accelerates sequence generation at inference time. When a token exits early, its KV entries for all subsequent (skipped) layers are missing, complicating the generation of future tokens that might require a deeper path. Solutions include duplicating the cached items from the current token’s exit layer to subsequent layers
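Here is a sketch of the duplication fix, assuming a toy per-layer cache that stores one (key, value) pair per token. When a token exits at layer `exit_layer`, the entries for every deeper, skipped layer are filled with copies of the exit layer's entry. Later tokens that run deeper still find a full-length cache. The class and method names are hypothetical. Other approaches instead compute the missing entries from the exit layer's hidden state.

```python
import numpy as np

class EarlyExitKVCache:
    """Toy per-layer KV cache: keys[l][t] and values[l][t] for layer l, token t."""

    def __init__(self, n_layers):
        self.keys = [[] for _ in range(n_layers)]
        self.values = [[] for _ in range(n_layers)]

    def add_token(self, computed_kv, exit_layer):
        """computed_kv[l] = (key, value) for layers 0..exit_layer that actually ran."""
        assert len(computed_kv) == exit_layer + 1
        for l in range(len(self.keys)):
            k, v = computed_kv[min(l, exit_layer)]   # skipped layers reuse the exit layer's entry
            self.keys[l].append(k.copy())
            self.values[l].append(v.copy())

rng = np.random.default_rng(0)
n_layers, d = 6, 4
cache = EarlyExitKVCache(n_layers)
for exit_layer in (5, 2, 4):          # three tokens with different exit depths
    kv = [(rng.normal(size=d), rng.normal(size=d)) for _ in range(exit_layer + 1)]
    cache.add_token(kv, exit_layer)

print([len(ks) for ks in cache.keys])   # [3, 3, 3, 3, 3, 3]: every layer covers every token
```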

While dynamic routing optimizes where extra computation happens, hierarchicality optimizes how it happens, and sequential refinement optimizes when it happens, a promising path forward lies in combining these mechanisms with the structural priors of recurrence discussed earlier.

In-Context Learning and Implicit Optimization

Parameter reuse via looping extends the capability of transformer architectures to execute in-context learning (ICL) algorithms. In standard, unlooped models, emulating an iterative learning process typically requires network depth to scale proportionally with the number of optimization steps. In contrast, looped transformers can emulate these iterative optimization algorithms with a constant-depth architecture. For instance, a looped transformer with a fixed number of layers can execute stochastic gradient descent (SGD) and full backpropagation for a neural network entirely within its forward pass
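The iterative algorithm a looped transformer would need to emulate can be sketched directly, assuming in-context linear regression. Each loop applies one identical gradient-descent update to a weight vector fitted to the in-context examples `(X, y)`. More loops therefore mean more optimization steps with no additional parameters. This is the target algorithm, not a transformer construction itself. The learning rate, problem sizes and function names are illustrative.

```python
import numpy as np

def gd_update(w, X, y, lr):
    """One gradient step on the in-context loss 0.5 * mean((X w - y)^2)."""
    return w - lr * X.T @ (X @ w - y) / len(y)

def icl_by_looping(X, y, n_loops, lr=0.1):
    w = np.zeros(X.shape[1])
    for _ in range(n_loops):          # the same update rule on every loop iteration
        w = gd_update(w, X, y, lr)
    return w

def icl_loss(w, X, y):
    return 0.5 * np.mean((X @ w - y) ** 2)

rng = np.random.default_rng(0)
n, d = 50, 3
w_true = rng.normal(size=d)
X = rng.normal(size=(n, d))            # in-context examples
y = X @ w_true                         # noiseless targets
x_query = rng.normal(size=d)

for n_loops in (1, 10, 100, 2000):
    w = icl_by_looping(X, y, n_loops)
    print(n_loops, round(float(icl_loss(w, X, y)), 6))   # loss shrinks as loops increase
```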

Towards Compositional Recurrence

Many of the high-level methods presented are orthogonal and thus composable, perhaps even synergistically, offering a flexible toolkit for designing resource-efficient reasoning models. One such example is Zamba
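Here is a sketch of such composition at the level of a layer plan, assuming a backbone of distinct SSM blocks (recurrence in time). A single attention block is interleaved every `every` blocks, and its parameters are reused at each occurrence (reuse in depth). This is the pattern Zamba is built around. The interval and the names are illustrative, and Zamba's actual configuration is not reproduced.

```python
def hybrid_plan(n_ssm_blocks, every):
    """Layer plan of (kind, parameter_id) pairs: unique SSM blocks plus one shared attention block."""
    plan = []
    for i in range(n_ssm_blocks):
        plan.append(("ssm", i))               # each SSM block owns its parameters
        if (i + 1) % every == 0:
            plan.append(("shared_attn", 0))   # same parameters at every occurrence
    return plan

plan = hybrid_plan(n_ssm_blocks=12, every=4)
print(len(plan))          # 15 layers applied in sequence
print(len(set(plan)))     # 13 distinct parameter sets
```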

Conclusion

With the desire for deeper reasoning, the rigid, single-pass transformer architecture appears increasingly insufficient. By reusing parameters through looping and simulating depth recurrence through growth, models can perform deeper computation without necessarily becoming larger or more costly to train. The synergies of depth growth, parameter sharing, and looping may be exploited across the training cycle of a model to achieve reasoning capabilities that may surpass the scaling laws of standard architectures.
