JustRL: Scaling a 1.5B LLM with a Simple RL Recipe

Training small reasoning models with RL has become a race toward complexity, using multi-stage pipelines, dynamic schedules, and curriculum learning. We ask: is this complexity necessary? We show that JustRL, a simple recipe with fixed hyperparameters, achieves state-of-the-art performance on two different 1.5B base models (54.9% and 64.3% average accuracy across 9 math benchmarks) while using 2× less compute than sophisticated approaches. The same hyperparameters transfer across both models without tuning, and training remains stable over thousands of steps without intervention. This suggests the field may be adding complexity to solve problems that disappear with a stable, scaled-up baseline.

Perfection is achieved, not when there is nothing more to add, but when there is nothing left to take away.

— Antoine de Saint-Exupéry, Airman’s Odyssey

Introduction

Recent advances in Large Language Models (LLMs), such as OpenAI’s o1

This proliferation of techniques raises an important question: Is this complexity necessary? The accumulated “best practices” may be fighting each other rather than the fundamental challenges of RL

Our goal is not to argue against all techniques or claim we’ve found the optimal approach. Rather, we provide evidence that simpler baselines deserve more attention than they’ve received. We offer a simple practice with a minimum set of tricks that can enhance the performance of models that are approaching their distillation limits. The field may benefit from establishing what’s fundamentally sufficient before layering on additional complexity. By open-sourcing our models and evaluation scripts, we hope to provide a reliable foundation that others can build upon, whether for practical deployment or as a baseline for developing and validating new techniques.

The Landscape: RL for Small Reasoning Models

Since DeepSeek-R1’s release in early 2025, the community has rapidly advanced RL for small language models in mathematical reasoning. The past year has seen a flourishing of approaches, each introducing techniques to stabilize training and push performance boundaries. These works fall into three main families based on their foundation models: DeepSeek-R1-Distill-Qwen-1.5B, OpenMath-Nemotron-1.5B, and Qwen3-1.7B, all starting from distilled bases.

The evolution reveals a clear trend toward increasing sophistication. Early works like STILL

| Model | Backbone | Entropy Control | Tune Hyperparameters | Tune Training Prompt | Reset KL Reference Model | Length Control | Adaptive Temperature | Rollout Rescue | Dynamic Sampling | Split Training Stages | Date |

|---|---|---|---|---|---|---|---|---|---|---|---|

| STILL-3-1.5B-Preview | DeepSeek-R1-Distill-Qwen-1.5B | ❌ | ✅ | ✅ | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ | Jan, 2025 |

| DeepScaleR-1.5B-Preview | DeepSeek-R1-Distill-Qwen-1.5B | ✅ | ❌ | ❌ | ❌ | ✅ | ❌ | ❌ | ❌ | ✅ | Feb, 2025 |

| FastCuRL-1.5B-V3 | DeepSeek-R1-Distill-Qwen-1.5B | ❌ | ✅ | ❌ | ❌ | ✅ | ❌ | ❌ | ❌ | ✅ | Mar, 2025 |

| ProRL-Nemotron-Qwen-1.5B-v1 | DeepSeek-R1-Distill-Qwen-1.5B | ✅ | ✅ | ❌ | ✅ | ✅ | ❌ | ❌ | ✅ | ✅ | May, 2025 |

| e3-1.7B | Qwen3-1.7B | ✅ | ✅ | ❌ | ❌ | ✅ | ❌ | ❌ | ✅ | ✅ | Jun, 2025 |

| Polaris-1.7B-Preview | Qwen3-1.7B | ✅ | ✅ | ❌ | ❌ | ✅ | ✅ | ✅ | ✅ | ✅ | Jul, 2025 |

| Archer-Math-1.5B | DeepSeek-R1-Distill-Qwen-1.5B | ✅ | ❌ | ❌ | ❌ | ✅ | ❌ | ❌ | ✅ | ❌ | Jul, 2025 |

| ProRL-Nemotron-Qwen-1.5B-v2 | DeepSeek-R1-Distill-Qwen-1.5B | ✅ | ✅ | ❌ | ✅ | ✅ | ❌ | ❌ | ✅ | ✅ | Aug, 2025 |

| QuestA-Nemotron-1.5B | OpenMath-Nemotron-1.5B | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ | ✅ | ✅ | Sept, 2025 |

| BroRL | DeepSeek-R1-Distill-Qwen-1.5B | ✅ | ✅ | ❌ | ✅ | ✅ | ❌ | ❌ | ✅ | ✅ | Oct, 2025 |

| JustRL-DeepSeek-1.5B (Ours) | DeepSeek-R1-Distill-Qwen-1.5B | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ | Dec, 2025 |

| JustRL-Nemotron-1.5B (Ours) | OpenMath-Nemotron-1.5B | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ | Dec, 2025 |

The pattern is striking: nearly every work employs multiple techniques from a growing toolkit—multi-stage training, adaptive hyperparameters, length penalties, dynamic sampling, and various stabilization mechanisms. While these methods achieve strong results, each represents a different combination of design choices, making it difficult to isolate which elements truly matter. The engineering complexity also raises a practical question: Is there a simpler path that still achieves competitive performance?

JustRL: Simplicity at Scale

Our approach is deliberately simple. We constrain ourselves to the fundamentals of RL, avoiding the multi-stage pipelines, dynamic schedules, and specialized techniques that have become common in recent work. The goal is to establish what’s sufficient before adding complexity.

Training Setup: What We Use (and Don’t Use)

Core algorithm: We use standard GRPO with binary outcome rewards—nothing more. The reward signal comes from a lightweight rule-based verifier from DAPO

What we keep simple:

- Single-stage training: No progressive context lengthening, no curriculum switching, no stage transitions. We train continuously from start to finish.

- Fixed hyperparameters: No adaptive temperature scheduling, no dynamic batch size adjustments, no mid-training reference model resets.

- Standard data: We train on DAPO-Math-17k without offline difficulty filtering or online dynamic sampling strategies.

- Basic prompting: A simple suffix prompt without tuning: “Please reason step by step, and put your final answer within \boxed{}.”

- Length control: We simply cap the maximum context length at 16K tokens, rather than using explicit length penalty terms.
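To make the reward signal concrete, here is a minimal sketch of a rule-based binary outcome reward that reads the final `\boxed{}` answer requested by the suffix prompt. It is not the DAPO verifier itself: the function names, the exact-string comparison, and the +1/-1 reward values are assumptions made for illustration.

```python
def extract_boxed(text):
    """Return the contents of the last boxed expression in text, or None."""
    marker = "\\boxed{"
    start = text.rfind(marker)
    if start == -1:
        return None
    i = start + len(marker)
    depth = 1  # we are inside the opening brace of \boxed{
    out = []
    while i < len(text):
        ch = text[i]
        if ch == "{":
            depth += 1
        elif ch == "}":
            depth -= 1
            if depth == 0:
                return "".join(out)
        out.append(ch)
        i += 1
    return None  # braces never closed


def binary_reward(response, ground_truth):
    """Hypothetical binary outcome reward: +1 if the boxed answer matches, else -1."""
    answer = extract_boxed(response)
    if answer is None:
        return -1.0
    return 1.0 if answer.strip() == ground_truth.strip() else -1.0


print(binary_reward("So the answer is \\boxed{\\frac{1}{2}}.", "\\frac{1}{2}"))
print(binary_reward("I think it is 0.5", "\\frac{1}{2}"))
```

An exact-match check like this is strict about formatting, which is the kind of false negative that a model-based verifier is meant to catch at evaluation time.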

The one technique we do use: We employ “clip higher”, a well-established practice for stability in long-horizon RL training. This is our concession to practical stability, and we view it as part of the baseline rather than an added technique.
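The core objective can be sketched in a few lines. The block below is an illustrative, per-sample version of group-relative advantages and an asymmetrically clipped surrogate term, with clip bounds of 0.2 below and 0.28 above, matching the [0.8, 1.28] ratio range in the hyperparameter table. The function names, the use of the population standard deviation, and the sequence-level (rather than token-level) formulation are simplifying assumptions, not the veRL implementation.

```python
import math
from statistics import mean, pstdev


def group_advantages(rewards, eps=1e-6):
    """Standardize rewards within the group of rollouts sampled for one prompt."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # assumption: population std; implementations differ
    return [(r - mu) / (sigma + eps) for r in rewards]


def clip_higher_term(logp_new, logp_old, advantage, clip_low=0.2, clip_high=0.28):
    """PPO-style clipped surrogate with a larger upper bound ("clip higher")."""
    ratio = math.exp(logp_new - logp_old)
    clipped = min(max(ratio, 1.0 - clip_low), 1.0 + clip_high)
    # Pessimistic choice between the unclipped and clipped objectives.
    return min(ratio * advantage, clipped * advantage)


# Eight rollouts for one prompt with binary (+1/-1) rewards.
rewards = [1.0, -1.0, -1.0, 1.0, -1.0, -1.0, -1.0, 1.0]
advantages = group_advantages(rewards)
print([round(a, 3) for a in advantages])
print(clip_higher_term(math.log(1.5), 0.0, advantages[0]))
```

The wider upper bound lets low-probability tokens with positive advantage grow more before clipping takes effect, which is the usual motivation given for this technique. When all rollouts in a group receive the same reward, every advantage is zero and the group contributes no gradient.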

We train this recipe on two 1.5B reasoning models using veRL: DeepSeek-R1-Distill-Qwen-1.5B and OpenMath-Nemotron-1.5B, each with 32 A800-80GB GPUs for ~15 days. The same hyperparameters work for both, without per-model tuning, and remain fixed throughout training (no schedules, no adaptation, no manual intervention), as detailed in the table below.

| Advantage Estimator | Use KL Loss | Use Entropy Regularization | Train Batch Size | Max Prompt Length | Max Response Length | PPO Mini Batch Size | PPO Micro Batch Size Per GPU | Clip Ratio Range | Learning Rate | Temperature | Rollout N | Reward Function |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| GRPO | No | No | 256 | 1k | 15k | 64 | 1 | [0.8, 1.28] | Constant 1e-6 | 1.0 | 8 | DAPO |
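For readers who prefer to see the table as a single object, the sketch below collects the same values in a frozen dataclass. The class and field names are hypothetical and do not correspond to veRL configuration keys, and reading "1k" and "15k" as multiples of 1,024 tokens is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JustRLConfig:
    """Illustrative container for the fixed hyperparameters (names are hypothetical)."""
    advantage_estimator: str = "grpo"
    use_kl_loss: bool = False
    use_entropy_regularization: bool = False
    train_batch_size: int = 256            # prompts per training step
    max_prompt_length: int = 1 * 1024      # assumption: 1k = 1,024 tokens
    max_response_length: int = 15 * 1024   # assumption: 15k = 15,360 tokens
    ppo_mini_batch_size: int = 64
    ppo_micro_batch_size_per_gpu: int = 1
    clip_ratio_low: float = 0.2            # lower ratio bound 1 - 0.2 = 0.8
    clip_ratio_high: float = 0.28          # upper ratio bound 1 + 0.28 = 1.28
    learning_rate: float = 1e-6            # constant, no schedule
    temperature: float = 1.0
    rollout_n: int = 8
    reward_function: str = "dapo"

    @property
    def rollouts_per_step(self):
        return self.train_batch_size * self.rollout_n

    @property
    def max_context_length(self):
        return self.max_prompt_length + self.max_response_length


cfg = JustRLConfig()
print(cfg.rollouts_per_step, cfg.max_context_length)
```

Freezing the dataclass mirrors the recipe's premise that nothing changes during training: any attempt to reassign a field raises an error.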

Evaluation: Comprehensive Benchmarking

We evaluate nine challenging mathematical reasoning tasks based on reproducible evaluation scripts from POLARIS

- Benchmarks: AIME 2024, AIME 2025, AMC 2023, MATH-500, Minerva Math, OlympiadBench, HMMT Feb 2025, CMIMC 2025, and BRUMO 2025.

- Evaluation protocol: We report Pass@1 accuracy, averaging over N sampled responses per problem (N=4 for MATH-500, Minerva Math, and OlympiadBench; N=32 for others). We use temperature 0.7, top-p 0.9, and allow up to 32K tokens for generation.
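The averaging in the protocol above can be sketched as follows: each problem's Pass@1 is estimated as the fraction of its N sampled responses that are judged correct, and the benchmark score is the mean over problems. The function names and the toy correctness flags are made up for illustration.

```python
def avg_at_n(correct_flags):
    """Estimate Pass@1 for one problem as mean correctness over its N samples."""
    return sum(correct_flags) / len(correct_flags)


def benchmark_score(per_problem_flags):
    """Average the per-problem estimates and express the result as a percentage."""
    per_problem = [avg_at_n(flags) for flags in per_problem_flags]
    return 100.0 * sum(per_problem) / len(per_problem)


# Toy example with N=4 samples for each of two problems.
flags = [
    [True, False, True, True],     # 3 of 4 samples correct
    [False, False, False, False],  # no sample correct
]
print(benchmark_score(flags))
```

Averaging over many samples reduces the variance of the estimate, which matters most on small benchmarks such as the competition sets evaluated with N=32.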

We observe that current rule-based verifiers may produce false negatives when a model generates a correct answer in an unexpected format. To address this, we augment existing systems with CompassVerifier-3B

Experiment Results

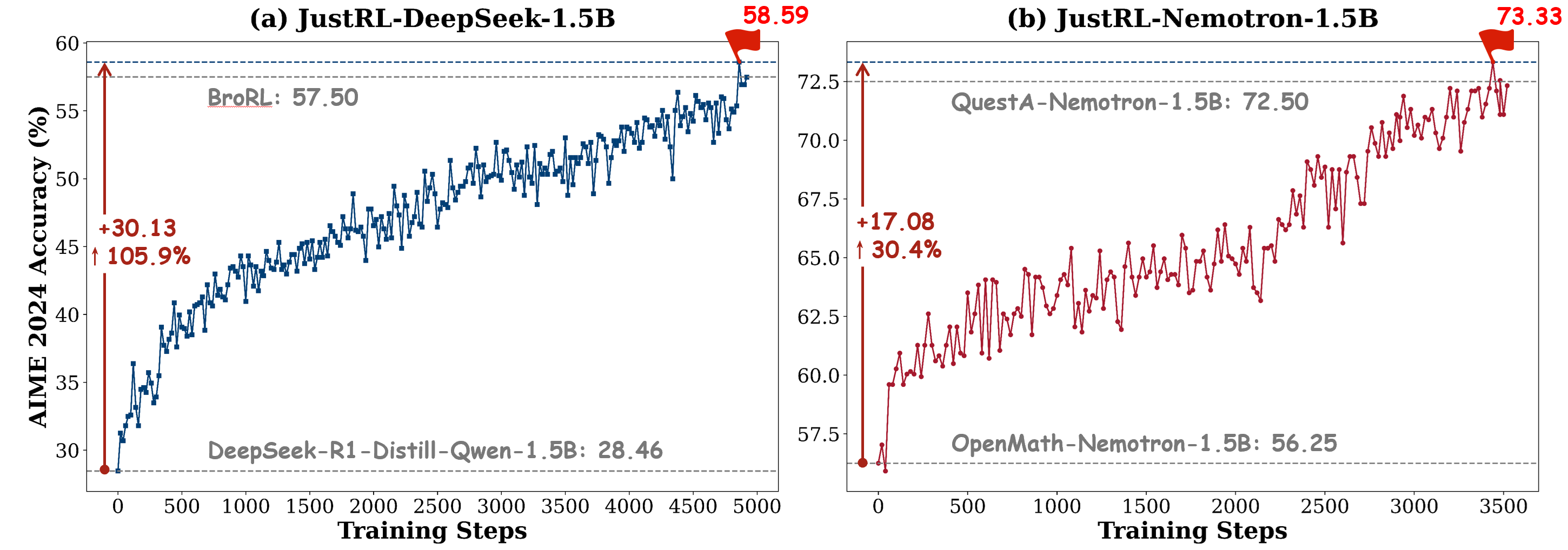

We apply JustRL on two popular 1.5B reasoning models to demonstrate that our minimal recipe achieves competitive performance with notably stable training dynamics. Both experiments use identical hyperparameters without per-model tuning.

Scaling a Weaker Base: JustRL-DeepSeek-1.5B

We train DeepSeek-R1-Distill-Qwen-1.5B for 4,380 steps using our simple, single-stage recipe. We report avg@N results across nine mathematical benchmarks (N=32 or N=4, as marked in each column header) as follows:

| Model | AIME24 (@32) | AIME25 (@32) | AMC23 (@32) | MATH-500 (@4) | Minerva (@4) | OlympiadBench (@4) | HMMT25 (@32) | BRUMO25 (@32) | CMIMC25 (@32) | Avg |

|---|---|---|---|---|---|---|---|---|---|---|

| DeepSeek-R1-Distill-1.5B | 29.90 | 22.40 | 63.82 | 84.90 | 34.65 | 45.95 | 13.44 | 30.94 | 12.89 | 37.65 |

| DeepScaleR-1.5B-Preview | 40.21 | 28.65 | 73.83 | 89.30 | 39.34 | 52.79 | 18.96 | 40.00 | 21.00 | 44.88 |

| ProRL-V2 | 51.87 | 35.73 | 88.75 | 92.00 | 49.03 | 67.84 | 19.38 | 47.29 | 25.86 | 53.08 |

| BroRL$^†$ | 57.50 | 36.88 | / | 92.14 | 49.08 | 61.54 | / | / | / | / |

| JustRL-DeepSeek-1.5B | 52.60 | 38.75 | 91.02 | 91.65 | 51.47 | 67.99 | 21.98 | 52.71 | 25.63 | 54.87 |

$^†$ BroRL results are officially reported but models not released; some benchmarks unavailable.

Our model (JustRL-DeepSeek-1.5B) achieves 54.87% average across benchmarks, outperforming ProRL-V2’s 53.08% despite ProRL-V2’s nine-stage training pipeline with dynamic hyperparameters and more sophisticated techniques. We also lead on six of nine benchmarks, demonstrating broad improvements rather than overfitting to a single task. However, the real question is whether our simplicity comes at a computational cost. It doesn’t:

| Model | w/ Dynamic Sampling? | Training Steps | Train Batch Size | Rollout N | Max Context Length | Estimated Total Token Budget |

|---|---|---|---|---|---|---|

| DeepScaleR-1.5B-Preview | ❌ | 1,750 | 128 | 8 | 8k → 16k → 24k | $(1040×8k + 480×16k + 230×24k) × 128×8 ≈ 2.2×10^7k$ |

| ProRL-V1 | ✅ Filter Ratio ≈50% | 2,450 | 256 | 16 → 32 → 16 | 8k → 16k | $\frac{1}{50\%}(1700×16×8k + 550×32×8k + 200×16×16k) × 256 ≈ 2.1×10^8k$ |

| ProRL-V2 | ✅ Filter Ratio ≈50% | +1,000 | 256 | 16 → 32 → 16 | 8k → 16k → 8k | $2.1×10^8k + \frac{1}{50\%} × 1000×16×8k × 256 ≈ 2.8×10^8k$ |

| BroRL | ✅ Filter Ratio ≈50% | +191 | 128 | 512 | 16k | $2.8×10^8k + \frac{1}{50\%}×191×512×16k×128 ≈ 6.8×10^8k$ |

| JustRL-DeepSeek-1.5B | ❌ | 4,380 | 256 | 8 | 16k | $4380×256×8×16k ≈ 1.4×10^8k$ |

We use roughly half of ProRL-V2’s compute budget while relying on a single-stage recipe with fixed hyperparameters. BroRL requires 4.9× more compute by increasing rollouts to 512 per example, essentially exhaustively exploring the solution space. Our approach achieves competitive performance without this computational overhead.
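The token-budget estimates can be reproduced with a small helper. This is a sketch of the same back-of-the-envelope formula used in the table (steps × rollouts × context length × batch size, divided by the filter ratio when dynamic sampling is used); the function name and the stage tuples are illustrative, and budgets are in units of 1k tokens.

```python
def token_budget_k(stages, batch_size, filter_ratio=1.0):
    """Upper-bound token budget in units of 1k tokens.

    stages: list of (training_steps, rollout_n, max_context_k) tuples.
    filter_ratio: fraction of sampled prompts kept by dynamic sampling.
    """
    per_prompt = sum(steps * n * ctx for steps, n, ctx in stages)
    return per_prompt * batch_size / filter_ratio


justrl = token_budget_k([(4380, 8, 16)], batch_size=256)
prorl_v1 = token_budget_k(
    [(1700, 16, 8), (550, 32, 8), (200, 16, 16)], batch_size=256, filter_ratio=0.5
)
prorl_v2 = prorl_v1 + token_budget_k([(1000, 16, 8)], batch_size=256, filter_ratio=0.5)
brorl = prorl_v2 + token_budget_k([(191, 512, 16)], batch_size=128, filter_ratio=0.5)

for name, value in [("JustRL", justrl), ("ProRL-V1", prorl_v1),
                    ("ProRL-V2", prorl_v2), ("BroRL", brorl)]:
    print(f"{name}: {value:.1e}k tokens")
```

Because every rollout is charged the full maximum context length, these figures are upper bounds rather than measured token counts.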

Note on dynamic sampling: Models marked with ✅ use dynamic sampling to filter examples. Following POLARIS

Training stability: Figure 1(a) shows the training curve for JustRL-DeepSeek-1.5B, with smooth and monotonic improvement and none of the oscillations or plateaus that typically require intervention. The stability itself suggests we’re not fighting against our training setup.

As of this writing, we’ve continued training beyond 4,380 steps:

| Training Steps | AIME24 (@32) | AIME25 (@32) | AMC23 (@32) | MATH-500 (@4) | Minerva (@4) | OlympiadBench (@4) | HMMT25 (@32) | BRUMO25 (@32) | CMIMC25 (@32) | Avg |

|---|---|---|---|---|---|---|---|---|---|---|

| 4,380 | 52.60 | 38.75 | 91.02 | 91.65 | 51.47 | 67.99 | 21.98 | 52.71 | 25.63 | 54.87 |

| 4,520 | 51.15 | 37.71 | 90.78 | 91.20 | 50.55 | 68.40 | 21.77 | 53.54 | 24.77 | 54.43 |

| 4,720 | 52.45 | 38.02 | 91.09 | 91.80 | 48.62 | 66.95 | 21.04 | 53.33 | 25.16 | 54.27 |

| 4,860 | 54.06 | 38.44 | 90.16 | 91.40 | 49.63 | 66.62 | 21.88 | 53.54 | 25.86 | 54.62 |

Performance appears to plateau around 54-55% average, with AIME 2024 continuing to improve (54.06% at step 4,860). This plateau might represent the ceiling for this foundation model without additional techniques, or it might simply need more training.

Scaling a Stronger Base: JustRL-Nemotron-1.5B

We train OpenMath-Nemotron-1.5B for 3,440 steps using the identical recipe, without hyperparameter changes. We report the evaluation results across nine challenging mathematical benchmarks as follows:

| Model | AIME24 (@32) | AIME25 (@32) | AMC23 (@32) | MATH-500 (@4) | Minerva (@4) | OlympiadBench (@4) | HMMT25 (@32) | BRUMO25 (@32) | CMIMC25 (@32) | Avg |

|---|---|---|---|---|---|---|---|---|---|---|

| OpenMath-Nemotron-1.5B | 58.75 | 48.44 | 90.55 | 92.40 | 26.93 | 71.70 | 30.10 | 61.67 | 30.08 | 56.74 |

| QuestA-Nemotron-1.5B | 71.56 | 62.08 | 93.44 | 92.95 | 32.08 | 72.28 | 40.94 | 67.50 | 41.48 | 63.81 |

| JustRL-Nemotron-1.5B | 69.69 | 62.92 | 96.02 | 94.15 | 30.24 | 76.59 | 40.63 | 66.88 | 41.72 | 64.32 |

We achieve 64.32% average, slightly outperforming QuestA’s 63.81% and leading on five of nine benchmarks. The gap is narrow, which makes sense—both approaches are pushing the boundaries of what’s achievable at 1.5B scale. The key difference is in how we get there.

QuestA

| Model | w/ Dynamic Sampling? | Training Steps | Train Batch Size | Rollout N | Max Context Length | Estimated Total Token Budget |

|---|---|---|---|---|---|---|

| QuestA-Nemotron-1.5B | ✅ Filter Ratio ≈50% | 2,000 | 128 | 16 | 32k | $\frac{1}{50\%}×2000×128×16×32k ≈ 2.6×10^8k$ |

| JustRL-Nemotron-1.5B | ❌ | 3,440 | 256 | 8 | 16k | $3440×256×8×16k ≈ 1.1×10^8k$ |

We use 2× less compute while achieving slightly better average performance without designing a complex curriculum as used in QuestA.

Training stability: Figure 1(b) shows another smooth training curve. The fact that the same recipe works for both models without hyperparameter tuning suggests genuine robustness rather than lucky optimization for a single model.

These results don’t diminish QuestA’s contribution—question augmentation is a clever technique that clearly helps. Rather, they demonstrate that competitive performance is achievable through simpler means. If you’re building on these foundations, you can start with our baseline and add techniques like question augmentation if needed, rather than assuming complexity is required from the start.

Training Dynamics Analysis

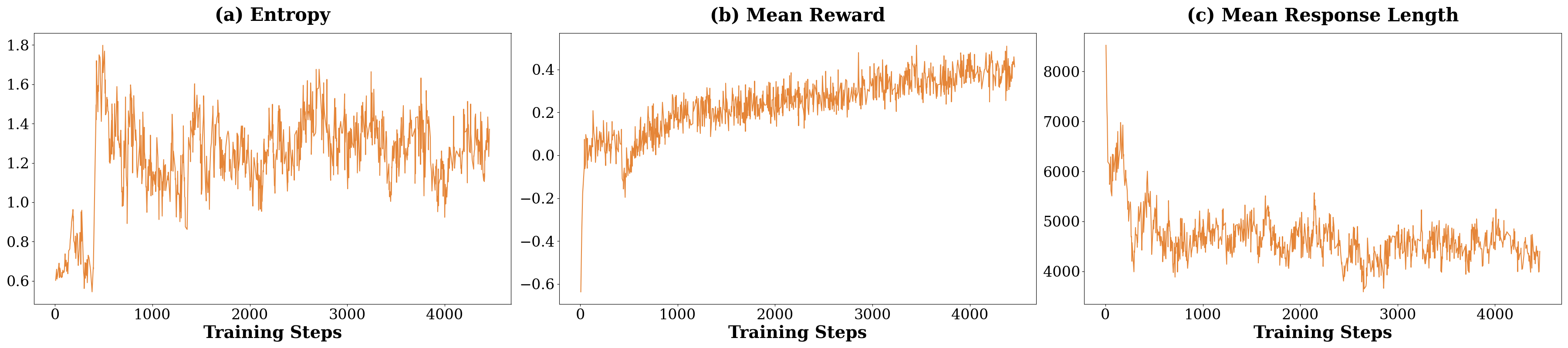

The ultimate test of a training recipe isn’t just the final numbers; it’s whether you can get there reliably. Complex techniques often emerge as responses to training instability: oscillating rewards, collapsing policies, or runaway response lengths. If a simpler approach can avoid these failure modes entirely, it suggests we may have been treating symptoms rather than causes. We examine the training dynamics of JustRL-DeepSeek-1.5B in detail, tracking three key dynamics over 4,000 training steps: mean training reward, policy entropy, and mean response length. These dynamics reveal whether the model is learning stably or requires constant intervention.

- Entropy: Figure 2(a) shows policy entropy oscillating naturally between 1.0 and 1.6 at later training steps, with no systematic drift upward (runaway randomness) or downward (entropy collapse and premature convergence), indicating that the simple “clip higher” technique works well for large-scale RL.

- Mean Reward: Figure 2(b) shows the mean reward climbing from around -0.6 to +0.4 over training. The curve is noisy but the trend is unmistakably upward. More importantly, there are no extended plateaus or sudden drops that would typically trigger intervention in multi-stage approaches. The signal is consistent enough that the model can learn continuously.

- Mean Response Length: The model starts verbose, generating responses averaging ~8,000 tokens. Without any explicit length penalty in our objective, it naturally compresses to 4,000-5,000 tokens by step 1,000 and maintains this range thereafter. This organic compression may be more robust than explicit penalties, which can create adversarial pressure that models learn to game, aligned with DLER.
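As a reference point for reading the entropy curve, the sketch below computes the Shannon entropy of a single next-token distribution from raw logits. The function name is illustrative, and the assumption that the plotted entropy is a per-token average measured in nats is ours rather than something stated here.

```python
import math


def token_entropy(logits):
    """Shannon entropy (in nats) of the softmax distribution over logits."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    probs = [e / z for e in exps]
    return -sum(p * math.log(p) for p in probs if p > 0.0)


# A uniform distribution over 4 tokens has entropy ln(4).
print(token_entropy([0.0, 0.0, 0.0, 0.0]))
# A sharply peaked distribution has entropy close to 0.
print(token_entropy([20.0, 0.0, 0.0, 0.0]))
```

Under that reading, an average entropy between 1.0 and 1.6 nats corresponds to the uncertainty of a uniform choice among roughly 3 to 5 tokens, far from both a deterministic policy and a uniform one over the vocabulary.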

The contrast with typical RL: While we don’t have the computational resources to run extensive controlled comparisons, the literature provides context. Many recent works explicitly cite training instabilities as motivation for their techniques: ProRL-v2

What we can’t claim: These smooth curves don’t prove that simpler approaches are always more stable, or that techniques never help. We can’t isolate which specific complex techniques cause instability versus which ones solve it. But the contrast is striking: a minimal recipe produces training dynamics that simply don’t require the interventions that have become standard practice.

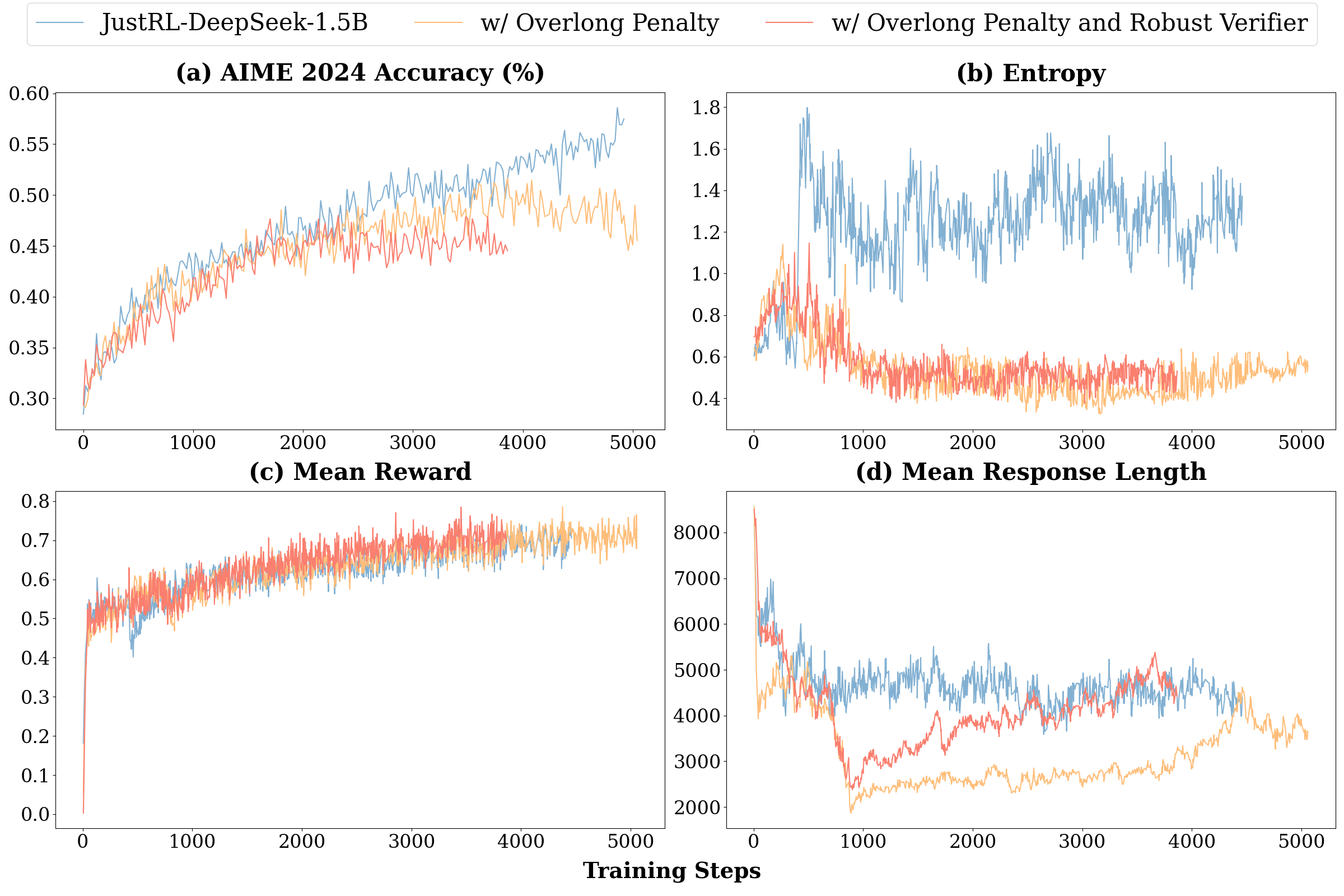

Ablation Studies: When Adding Techniques Doesn’t Help

We conduct two ablation studies starting from our base recipe on JustRL-DeepSeek-1.5B, both trained for 3,000+ steps on the same data:

- w/ Overlong Penalty: Add an explicit length penalty term for the last 4k tokens (as used in DAPO) to actively discourage verbose responses.

- w/ Overlong Penalty and Robust Verifier: Further add a more sophisticated verifier from DeepScaleR to reduce false negatives (correct solutions misclassified as incorrect).
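For reference, the overlong penalty in the first ablation can be sketched as a soft, linearly growing punishment over the final buffer of the response budget, in the style described by DAPO. The function name and the concrete defaults (a 15k-token response limit and a 4k-token buffer, both read as multiples of 1,024) are assumptions for illustration; the exact settings of the ablation run are not given here.

```python
def soft_overlong_penalty(length, max_len=15 * 1024, buffer_len=4 * 1024):
    """Length penalty added to the outcome reward (values lie in [-1, 0]).

    No penalty up to max_len - buffer_len, a linear ramp down to -1 across
    the buffer, and -1 for anything longer than max_len.
    """
    threshold = max_len - buffer_len
    if length <= threshold:
        return 0.0
    if length <= max_len:
        return (threshold - length) / buffer_len
    return -1.0


for n_tokens in (8_000, 11_264, 13_312, 15_360, 20_000):
    print(n_tokens, soft_overlong_penalty(n_tokens))
```

Unlike a hard cap on context length, this term changes the reward of otherwise correct long responses, so it directly reshapes the advantages the policy is trained on.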

- On the overlong penalty: We hypothesized that explicitly penalizing verbose responses might improve training efficiency by pushing the model toward conciseness faster. Instead, performance degraded significantly. The entropy plot in Figure 3(b) reveals why: the explicit penalty collapses exploration, driving entropy down to 0.5-0.6 compared to the 1.2-1.4 range in our base approach. The explicit penalty appears to create pressure that conflicts with the learning objective, forcing premature convergence to shorter responses before the model has explored what actually works.

- On the robust verifier: We further hypothesized that reducing false negatives (correct solutions marked wrong) would provide a cleaner learning signal. However, even after normalizing reward scales, its use leads to worse final performance, plateauing at 45% AIME 2024. Why? We offer two possible explanations: first, the stricter base verifier creates a richer spectrum of learning signals by reducing “perfect” scores, whereas the robust verifier’s permissiveness offers less nuanced guidance. Second, the stricter verifier’s reliance on precise formatting may pressure the model to develop more robust internal computations, an incentive lost when the verifier corrects errors externally. Thus, a forgiving verifier might fail to encourage the precision required for optimal generalization.

- Not all "standard tricks" transfer: The overlong penalty works in DAPO's context but hurts in ours. Techniques aren't universally beneficial; they interact with other design choices in complex ways.

- Simpler isn't always easier to improve: We tried two seemingly reasonable modifications and both made things worse. This suggests our base recipe is achieving some balance that's easy to disrupt.

We want to be clear about the limits of these ablations. We tested two specific modifications, but there are many other techniques we haven't explored: curriculum learning, adaptive temperature, reference model resets, different verifier designs, etc. Some of these might help. Our point isn't that techniques never work—it's that they need to be validated empirically rather than assumed to be beneficial.

Discussion

Our experiments provide clear evidence: competitive RL performance for small language models doesn’t require complex multi-stage pipelines or sophisticated techniques. A minimal recipe with fixed hyperparameters achieves strong results across two foundation models while maintaining stable training dynamics.

- What this suggests: The smooth training curves with healthy entropy, monotonic rewards and natural length convergence stand in contrast to instabilities often cited as motivation for complex techniques. Our negative ablations show that adding “improvements” (explicit length penalties, more permissive verifiers) actively degrades performance. This suggests complexity may sometimes address symptoms created by other design choices rather than fundamental RL challenges.

- What we don’t know: We demonstrate that simple RL works well, but can’t isolate why. Is it the hyperparameters? The training dataset? The verifier design? Our results are also limited to two backbones in mathematical reasoning at 1.5B scale. Generalization to other domains, model sizes, and tasks remains an open question.

- When might complexity help? We don’t advocate simplicity as dogma. Additional techniques may be valuable under extreme compute constraints, when encountering specific failure modes we didn’t face, when pushing beyond current performance ceilings, or in domains with noisier reward signals. Our argument is methodological: establish simple baselines first, then add complexity only when you identify specific problems it solves.

Conclusion: Simplicity as a Starting Point

The debate over RL for small models has been clouded by assumptions that complexity is necessary for stability and performance. We set out to answer a straightforward question: What happens if we apply RL to small language models without the multi-stage pipelines, dynamic hyperparameters, and specialized techniques that have become standard practice? By stepping back to a cleaner, simpler approach, our findings provide a clear answer: adequate scale with stable fundamentals can match sophisticated techniques.

Starting from two foundation models, we achieved comparable or better performance on each using single-stage training with fixed hyperparameters. These results match or exceed approaches that employ nine-stage training, adaptive penalties or curriculum learning. More striking than the final numbers is the path: smooth, stable improvement over thousands of steps without the interventions typically required to prevent training collapse.

We’re not claiming simpler is always better or that techniques never help. We advocate a methodological shift: start simple, scale up, and only add complexity when a simple, robust baseline demonstrably fails. If the field can establish clearer baselines, we’ll better understand when techniques actually matter versus when they compensate for other issues.

We release our models and evaluation scripts as a baseline for the community. Use it, build upon it, critique it. If simple is enough more often than current practice assumes, that seems worth paying attention to.
