The Illusion of Mastery: Breaking the Cycle of Benchmark Memorization with Generative Evaluation

Modern AI models that score perfectly on standardized benchmarks often fail in real-world applications. In this post, we first examine why current evaluation paradigms increasingly fail to capture how models perform in real-world scenarios, leading to an illusion of competence. Then, we introduce generative evaluation that automatically creates novel, diverse tasks every time a model is tested, and explain how it offers a more realistic way to measure what AI systems can actually do.

1. Introduction

The development of Large Language Models (LLMs) is accelerating at a breakneck pace

Yet a critical question remains: why do models that score “perfectly” on standardized benchmarks often “fail” in real-world applications? Why, for instance, can GPT-4 solve Olympiad-level math problems but sometimes get stuck in a loop while debugging simple code? What explains this persistent gap?

In a recent interview

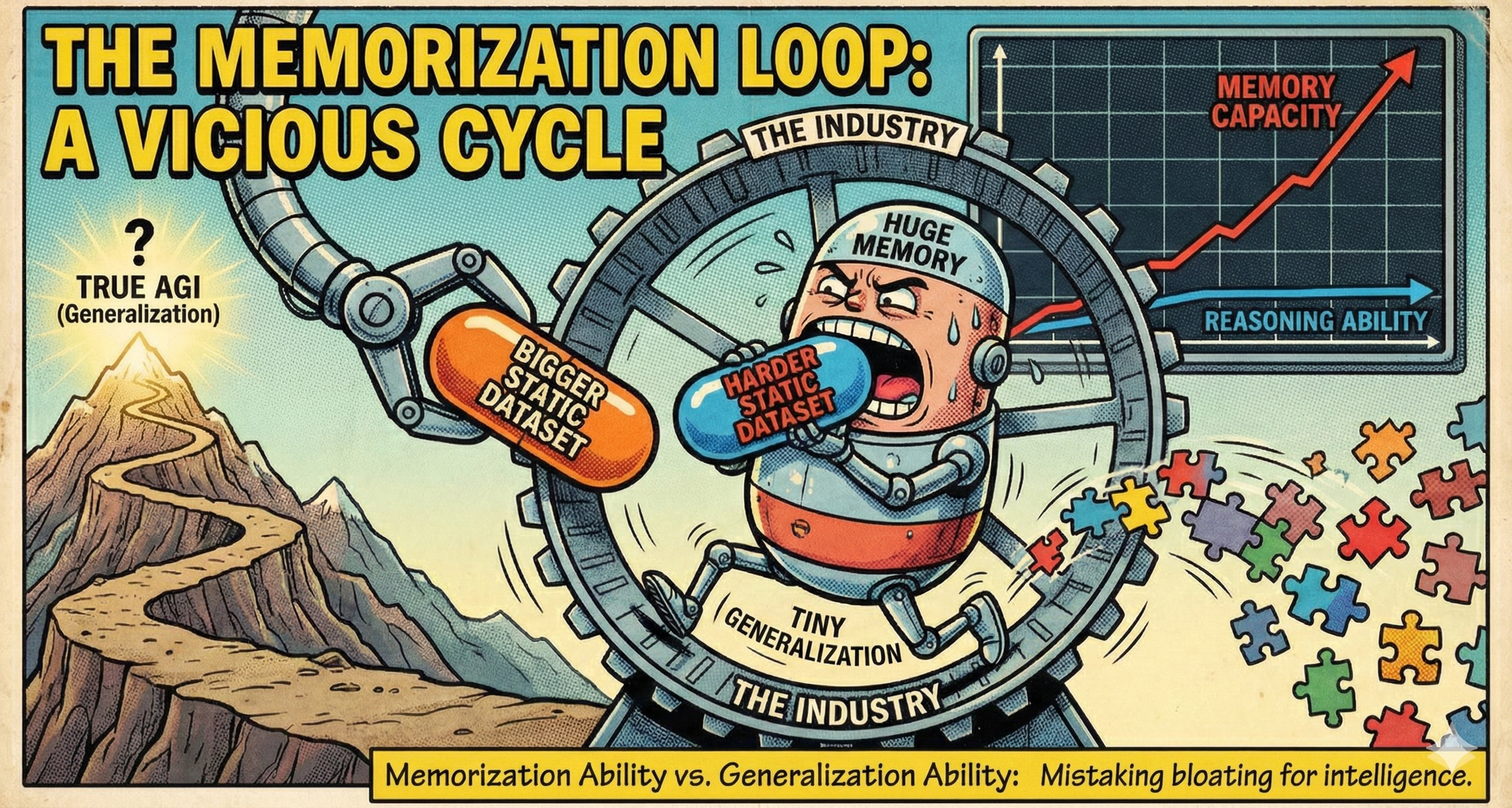

This points to an important issue: the fragility of models stems not merely from data or training limitations, but from systemic overfitting to the specific reasoning paradigms present in current static evaluations. Every time a static benchmark is “solved”, the field falls into a cycle: propose a harder static dataset $\rightarrow$ scale up the model to overfit the new structural patterns $\rightarrow$ propose an even harder dataset. This is a race of “memorization capacity vs. reasoning ability.” We mistakenly believe the model is getting smarter, but it is often just expanding its capacity to memorize patterns, thereby missing the opportunity to discover genuine reasoning algorithms and moving further away from the goal of AGI. As a result, in real-world applications, models frequently fail when users introduce novel “reasoning patterns” that are simple for humans but inaccessible through memorization.

In this post, we argue that the path to AGI requires a fundamental shift in how we measure intelligence. We will examine how current static evaluation misleads the industry and introduce generative evaluation—not merely as a new metric, but as a dynamic engine for discovering novel reasoning patterns that remain challenging for models to generalize.

2. The Failures of Static Evaluation

The current reliance on fixed, static evaluation benchmarks is actively misleading the industry. It fosters an “illusion of mastery,” where improving scores on stagnant datasets does not translate to reasoning intelligence. This systematic flaw is rooted in the following key issues:

2.1 The Contamination Illusion

The acceleration of data collection and model training has created a race we are losing: human benchmark design cannot keep pace with data crawler speed. A benchmark considered challenging upon release often sees a rapid, dramatic performance leap within months

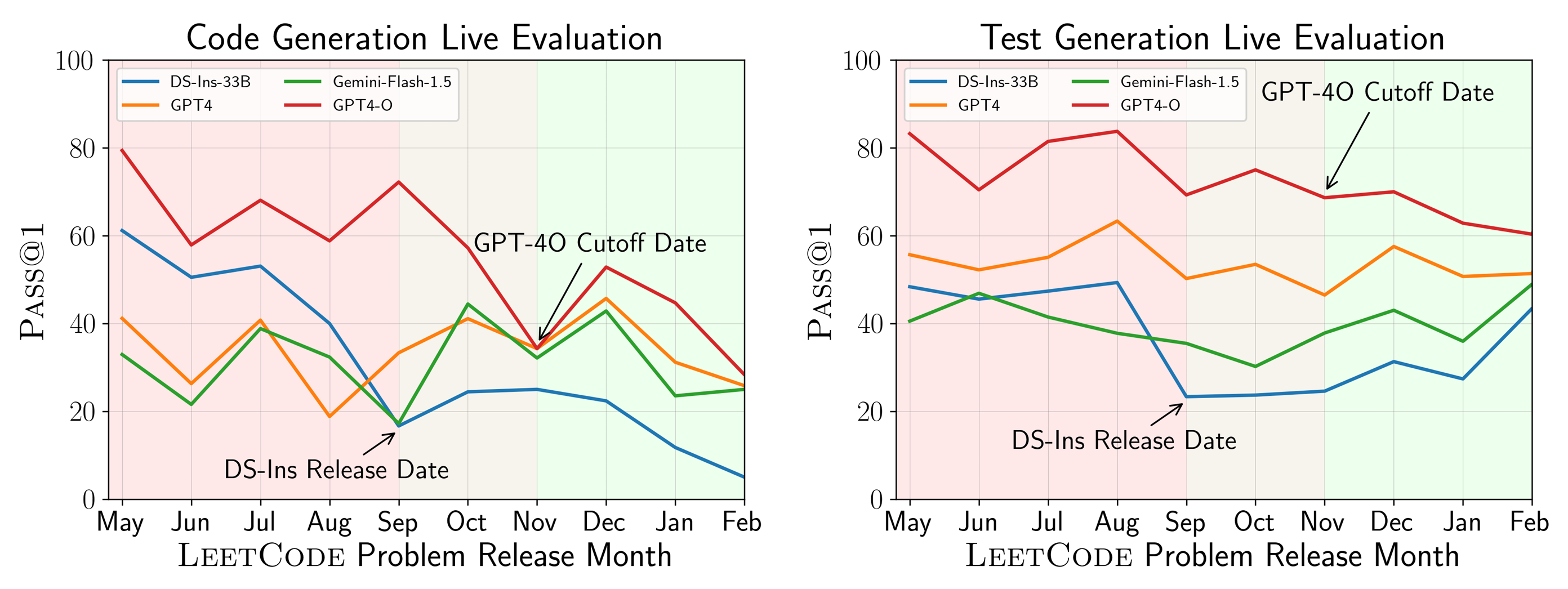

- The Misleading Result: This data contamination results in deceptively inflated scores. This is evidenced by the significant performance gap observed on benchmarks like LiveCodeBench. As shown in Figure 2, models excel on problems released before their training cutoff dates but show a marked drop in performance on problems released afterward. This gap strongly suggests that the pre-release data was likely included in the model’s training corpus.

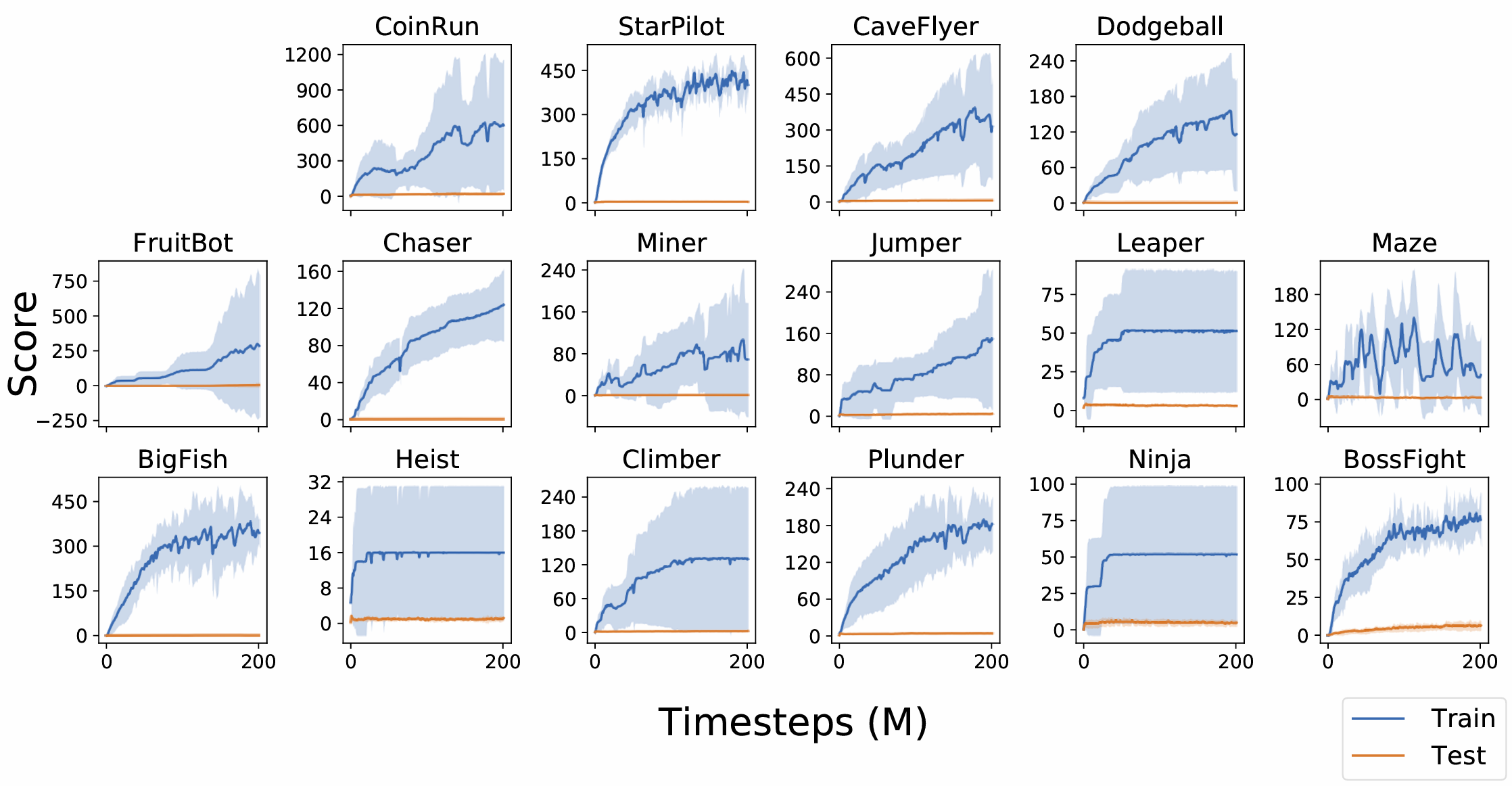

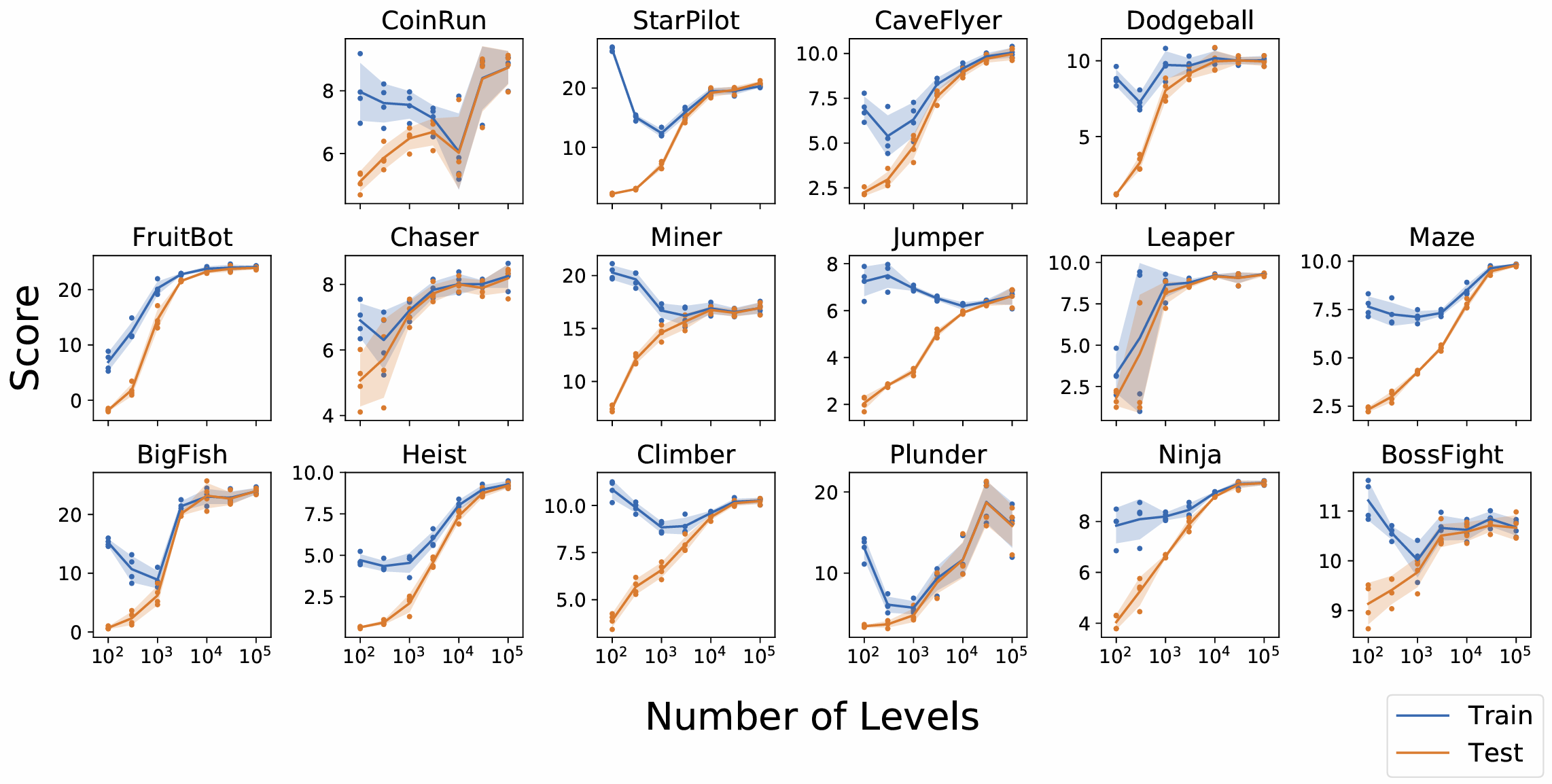

- The Memorization Trap: When test data leaks into the training corpus, models exploit those leaks to reproduce surface patterns instead of learning transferable reasoning strategies. This is exemplified by OpenAI’s Procgen test: models trained on a fixed order of levels (progressing only upon success) perform perfectly. However, at test time, when the level order is randomized, they fail completely. This strongly suggests that the agents did not acquire a generalizable policy for the game, but rather memorized action sequences specific to the fixed level order.
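The pre- and post-cutoff comparison described above can be sketched in a few lines. This is a minimal illustration, not LiveCodeBench's actual tooling: the `records` list, the cutoff date, and the function names are all hypothetical and invented for this example.

```python
from datetime import date

def pass_rate(results):
    """Fraction of solved problems; None if there are no problems."""
    return sum(results) / len(results) if results else None

def contamination_gap(records, cutoff):
    """Split (release_date, solved) records at the training cutoff
    and return the pass rate on each side."""
    before = [solved for released, solved in records if released <= cutoff]
    after = [solved for released, solved in records if released > cutoff]
    return pass_rate(before), pass_rate(after)

# Hypothetical per-problem results, made up purely for illustration.
records = [
    (date(2023, 1, 10), True),
    (date(2023, 2, 5), True),
    (date(2023, 3, 20), True),
    (date(2023, 4, 2), False),
    (date(2023, 9, 1), True),
    (date(2023, 10, 15), False),
    (date(2023, 11, 3), False),
    (date(2023, 12, 8), False),
]

before, after = contamination_gap(records, cutoff=date(2023, 6, 30))
print(f"pass rate before cutoff: {before:.2f}, after cutoff: {after:.2f}")
```

A large gap between the two numbers is a warning sign rather than proof of contamination, since problems released later may also simply be harder.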

2.2 The Stagnant $80\%$ Crisis

While increasing model and dataset sizes have equipped current models with a degree of reasoning (e.g., solving unseen math problems), the “memorization trap” persists. It has simply evolved into a higher-level form of fixed pattern matching. Models memorize a fixed path for solving a specific set of problems but lack the ability to dynamically adjust their reasoning path in novel contexts. A clear symptom of this trend is the widespread $80\%$ crisis, where models excel at the majority of common tasks but performance drops sharply on the remaining $20\%$ of novel challenges.

Early models like BERT

This leads to a resource paradox: according to scaling laws, improving performance on these sparse long-tail examples requires exponentially more parameters and data. Scaling laws describe how model loss $L$ decreases as we scale up model size $N$ and dataset size $D$. A common form is:

\[L \propto \alpha N^{-\beta} D^{-\gamma}\]

Here:

- $L$ is the model’s loss (lower is better)

- $N$ is the number of model parameters

- $D$ is the dataset size

- $\alpha$ is a constant, and $\beta, \gamma$ are positive exponents (typically less than 1)

As $N$ or $D$ increases, loss decreases but at a slowing rate. Early gains are rapid; later improvements become far more expensive. We are now spending billions for each marginal gain, chasing perfection via fixed pattern matching instead of developing reasoning algorithms for future challenges. Relying only on scaling is inefficient and unsustainable.
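To make the diminishing returns concrete, here is a small numerical sketch of the power-law form above. The constants (`alpha=1.0`, `beta=0.1`, `gamma=0.1`) and the model and dataset sizes are illustrative assumptions, not fitted values from any published scaling study.

```python
def loss(n_params, n_tokens, alpha=1.0, beta=0.1, gamma=0.1):
    """Power-law loss L = alpha * N^(-beta) * D^(-gamma) (illustrative constants)."""
    return alpha * n_params ** (-beta) * n_tokens ** (-gamma)

def scale_factor_to_halve(exponent):
    """Multiplier needed on N (or D) to halve the loss, other factor held fixed."""
    return 2.0 ** (1.0 / exponent)

D = 1e12                          # assumed fixed dataset size
sizes = [1e8, 1e9, 1e10, 1e11]    # assumed model sizes, 10x apart
losses = [loss(n, D) for n in sizes]

# Absolute improvement gained from each successive 10x increase in parameters.
gains = [a - b for a, b in zip(losses, losses[1:])]

for n, g in zip(sizes, gains):
    print(f"scaling N from {n:.0e} to {n * 10:.0e} lowers loss by {g:.5f}")

print(f"factor on N needed to halve the loss: {scale_factor_to_halve(0.1):.0f}x")
```

Under these assumed exponents, each tenfold increase in parameters buys a smaller absolute improvement than the previous one, and halving the loss through model size alone takes roughly a thousandfold increase.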

2.3 The High-Impact Blind Spot

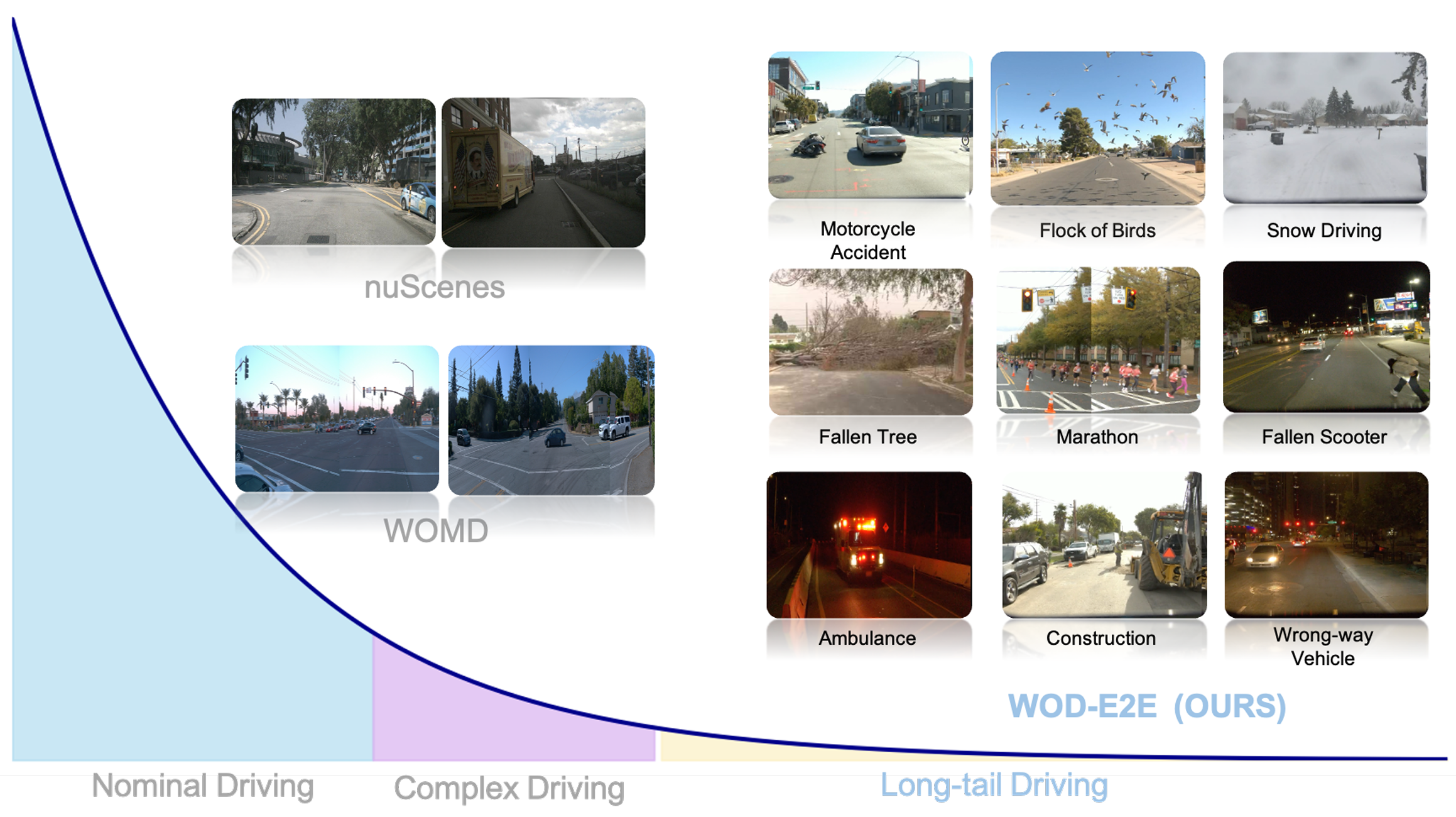

Static benchmarks typically mirror real-world data distributions, which makes them appear representative but also introduces a hidden bias: they underweight the most consequential failures. A substantial body of work documents that corner cases follow a long-tail distribution and are therefore extremely rare in collected logs

2.4 The Mismatch on the Path to AGI

If our final destination is AGI, we have a fundamental problem: we are currently using finite sets to evaluate an AGI that is defined by its ability to solve unlimited diverse tasks. There is a mismatch between our target and our actual evaluation methods, creating a gap between AGI and current SOTA models. We want agents to be open-ended, possessing the capacity to generate endless solutions for scenarios that may not yet exist

3. The Blueprint of Generative Evaluation

As we have discussed, static benchmarks are facing an existential crisis due to their inability to assess true reasoning capabilities. To escape this cycle, the industry is gradually shifting toward a new paradigm: generative evaluation. Here, the benchmark is not a fixed dataset but an intelligent engine capable of producing an infinite, dynamic stream of novel tasks.

This shift is already visible in several research threads. OpenAI’s Procgen

3.1 Core Concepts

Building on the related work above, we distill the common objective of generative evaluation. Crucially, this objective addresses the issues of pattern memorization and probes true reasoning capabilities through three key mechanisms:

- Infinite Diversity: By generating an unbounded stream of diverse and novel tasks, the system ensures test cases are truly unseen during training, making rote memorization mathematically impossible. This forces models to rely on genuine reasoning and generalization.

- Targeting Novel Reasoning Patterns: Unlike static benchmarks biased toward high-frequency patterns, generative evaluation can be deliberately engineered to probe high-impact corner cases and “sensible factors” that challenge a model’s true generalization limits. Thus, evaluation shifts from measuring average performance to stress-testing critical weaknesses.

- Scalability and Efficiency: It replaces costly, slow human labor with an automated pipeline that continuously generates and verifies tasks. Since tasks are generated programmatically, the time and financial costs are often orders of magnitude lower than manual curation, making sustainable, long-term progress feasible.
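As a toy illustration of the three mechanisms above, the sketch below generates an unbounded stream of arithmetic tasks whose answers are computed programmatically, so no human labeling is needed. The task format and the names `generate_task` and `task_stream` are hypothetical; real generative benchmarks use far richer task spaces.

```python
import itertools
import random

def generate_task(rng):
    """Build one arithmetic task together with its computed ground-truth answer."""
    a, b, c = (rng.randint(2, 99) for _ in range(3))
    op = rng.choice(["+", "-", "*"])
    inner = {"+": a + b, "-": a - b, "*": a * b}[op]
    return {"question": f"Compute ({a} {op} {b}) * {c}.", "answer": inner * c}

def task_stream(seed):
    """Yield an endless sequence of tasks; a new seed gives a new test set."""
    rng = random.Random(seed)
    while True:
        yield generate_task(rng)

# Draw three fresh tasks for one evaluation run.
for task in itertools.islice(task_stream(seed=2024), 3):
    print(task["question"], "->", task["answer"])
```

Fixing the seed keeps a given run reproducible, while changing it per evaluation yields instances the model is unlikely to have seen verbatim.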

3.2 Generating Diverse, Contamination-Resistant Tasks

Simply instructing an LLM to “generate 100 new questions” often yields repetitive, low-quality output. A robust generative framework must follow a structured pipeline ensuring both diversity (to prevent memorization) and validity (to ensure fairness). Based on recent cutting-edge research in generative evaluation

3.2.1 Inter-task Diversity: The Breadth of Knowledge

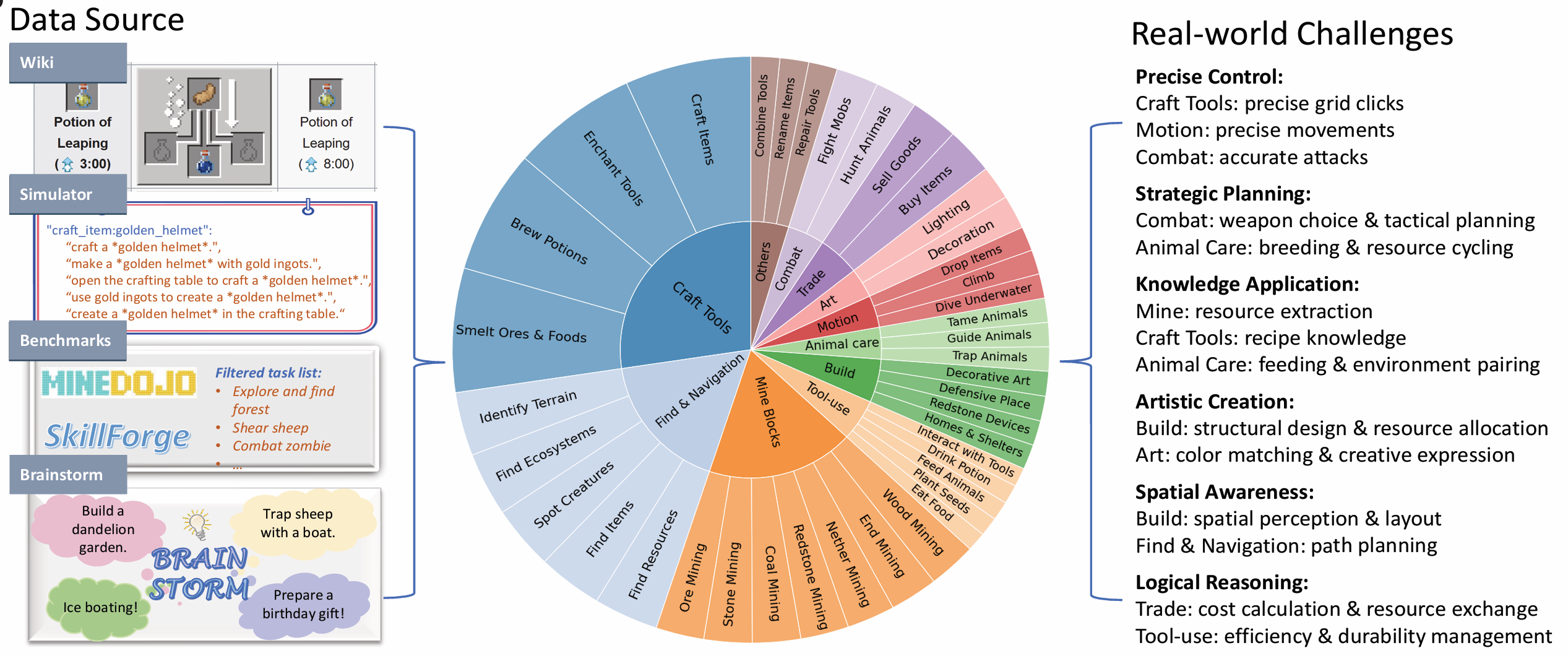

This dimension represents coverage across distinct domains. Just as a student must study Math, History, and Science, an AI agent must be tested across different domains. Inter-task diversity has long been valued in static datasets (e.g., the ALE benchmark

3.2.2 Intra-task Diversity: The Depth of Variation

This often-overlooked dimension refers to generating variations within a single task type—tasks that share a goal but differ in their initial states or parameters. Using ALE

3.3 Discovering Novel Reasoning Patterns

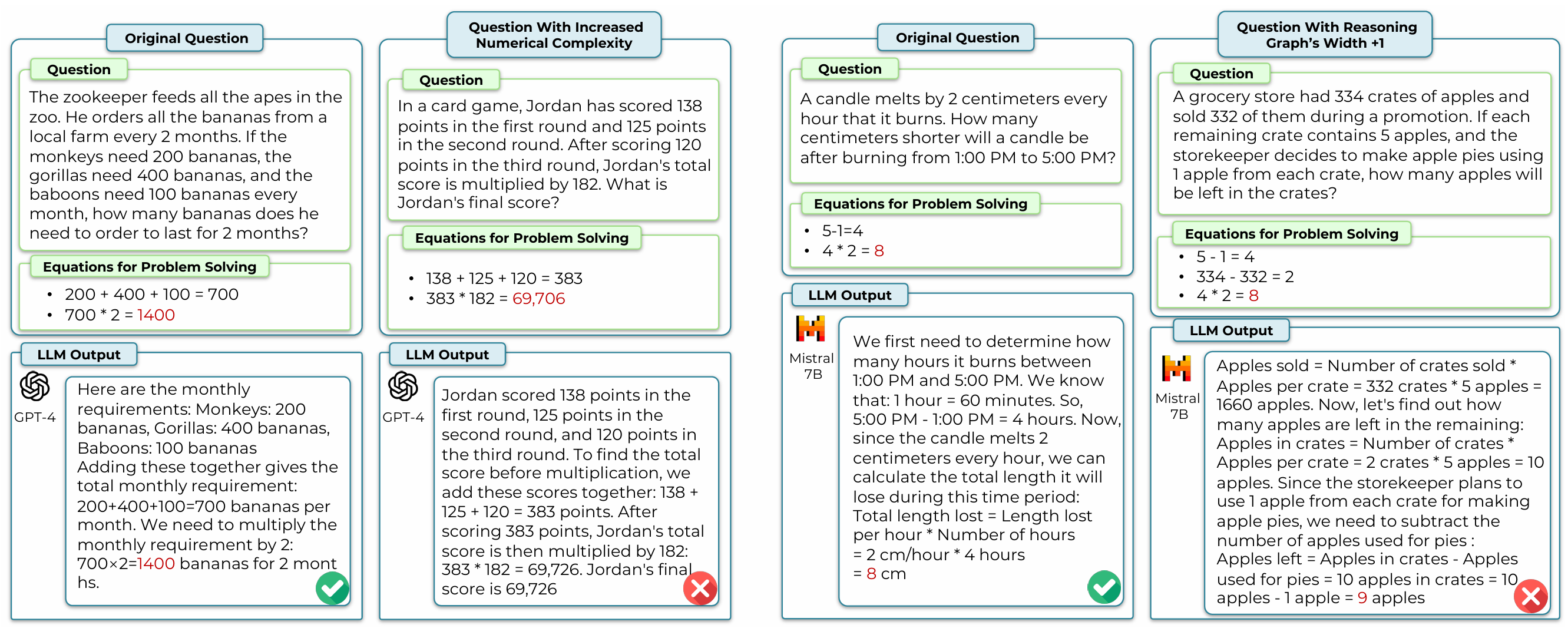

However, not all task variables are effective. A common pitfall is altering superficial variables of a task without introducing novel reasoning challenges. Research from UniCode

Therefore, the key is to identify “effective variables”—factors where a model’s generalization is prone to break down. Current approaches often use expert intuition: decompose a task into candidate variables, and adjust one at a time while keeping others fixed. If a variable causes significant performance variation, it signals incomplete reasoning and qualifies as effective. By identifying the right set of such variables, we unlock an infinite array of unique test cases, each embodying a novel reasoning pattern. As shown in Figure 8, DARG introduces three effective complexity variables: numerical complexity, depth of the reasoning graph, and width of the reasoning graph. It compares the robustness of state-of-the-art models across these three dimensions when solving mathematical problems.
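The one-variable-at-a-time procedure described above can be sketched as follows. Everything here is an assumption made for illustration: `toy_evaluate` stands in for a real model evaluation, the variable names are hypothetical, and the `threshold` of 0.1 is an arbitrary cutoff rather than a value taken from DARG or UniCode.

```python
def sweep_variable(evaluate, baseline, name, values):
    """Score each value of one variable while all others stay at baseline."""
    return {v: evaluate({**baseline, name: v}) for v in values}

def find_effective_variables(evaluate, baseline, candidates, threshold=0.1):
    """Return variables whose sweep changes accuracy by at least `threshold`."""
    effective = {}
    for name, values in candidates.items():
        scores = sweep_variable(evaluate, baseline, name, values)
        spread = max(scores.values()) - min(scores.values())
        if spread >= threshold:
            effective[name] = spread
    return effective

def toy_evaluate(config):
    """Toy stand-in for a model: accuracy falls with reasoning depth
    and is unaffected by how variables are named."""
    return max(0.0, 0.95 - 0.1 * (config["depth"] - 1))

baseline = {"depth": 1, "variable_names": "short"}
candidates = {
    "depth": [1, 2, 4, 6],
    "variable_names": ["short", "long"],
}

effective = find_effective_variables(toy_evaluate, baseline, candidates)
print(effective)
```

In this toy setup only `depth` is flagged, because renaming variables is a superficial change that leaves accuracy untouched.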

3.4 Ensuring Reliability in the Generative Pipeline

The biggest risk in generative evaluation is producing “garbage”—unsolvable problems or incorrect metrics. Since we cannot rely on human annotators for infinite tasks, we must automate the verification process.

3.4.1 Ensuring Solvability

We must guarantee that the generated preconditions allow for a solution. Domain-specific tools are often used for verification. Here are two examples:

- Symbolic Guarantees: KUMO

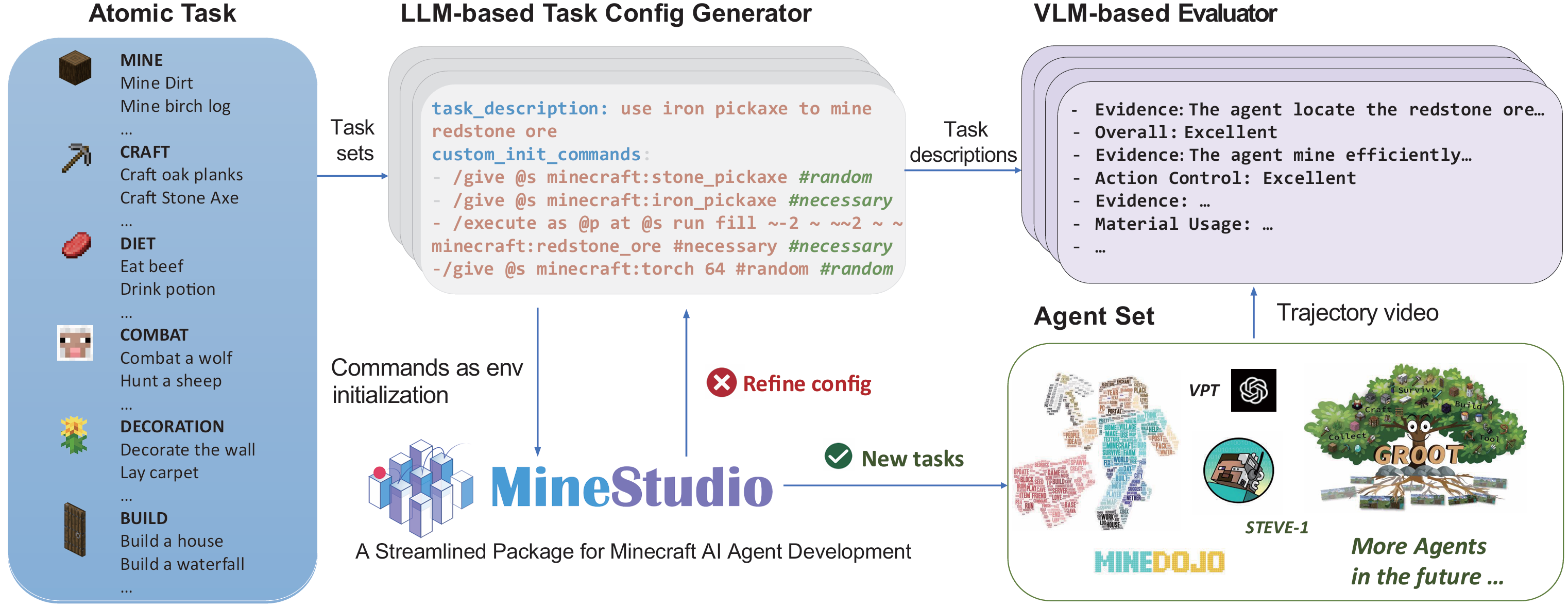

employs a SAT Solver (Boolean Satisfiability) during the generation phase. This mathematically enforces that every generated game board has a valid logical path to the truth, preventing impossible scenarios. - Simulator Verification: MCU

utilizes the MineStudio simulator, a popular test bed for the Minecraft platform, as a ground-truth verifier. The LLM-generated initialization commands are executed in the game engine; if the engine throws an error (e.g., Spawning a mob type that doesn’t exist.), the system detects it and triggers a self-reflection loop to correct the initial configuration.
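Both examples follow the same generate-then-verify pattern. The sketch below shows that pattern on a toy subset-sum puzzle, using a brute-force check in place of a SAT solver or a game simulator. It is not KUMO's or MCU's implementation; the puzzle, the function names, and the simple retry loop (which resamples rather than self-reflects) are assumptions chosen to keep the example self-contained.

```python
import itertools
import random

def is_solvable(numbers, target):
    """Brute-force verifier: does any non-empty subset of numbers sum to target?"""
    return any(
        sum(combo) == target
        for r in range(1, len(numbers) + 1)
        for combo in itertools.combinations(numbers, r)
    )

def propose_task(rng):
    """Stand-in for an unreliable generator: the target may be unreachable."""
    numbers = [rng.randint(1, 20) for _ in range(5)]
    target = rng.randint(10, 60)
    return numbers, target

def generate_verified_task(rng, max_attempts=100):
    """Keep proposing tasks until one passes the solvability check."""
    for _ in range(max_attempts):
        numbers, target = propose_task(rng)
        if is_solvable(numbers, target):
            return numbers, target
    raise RuntimeError("no solvable task found within max_attempts")

rng = random.Random(0)
numbers, target = generate_verified_task(rng)
print(f"numbers={numbers}, target={target}")
```

Brute force only works because the toy search space is tiny (31 subsets); larger task spaces are where solvers and simulators become necessary.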

3.4.2 Ensuring Label Correctness

In the absence of human-provided labels, the scoring of model outputs must also be automated. The method depends on the nature of the task:

- Programmatic Signals: For tasks on platforms with clear objectives (e.g., “mine a diamond” in Minecraft), the environment itself provides inherent success or failure signals.

- Model-as-Judge: For open-ended, creative tasks (e.g., “build a scary-looking house”), advanced LLMs or Vision-Language Models (VLMs) are employed as judges. For instance, MCU uses GPT-4V, providing it with specific, generated evaluation criteria (e.g., “Does the structure have a roof?”), achieving 91.5% alignment with human raters.

- Algorithmic Oracles: For tasks grounded in formal logic or mathematics, deterministic algorithms serve as the ground truth. KUMO computes an optimal search policy as its oracle, while UniCode uses brute-force computation to verify solutions.

The reliability of both the solvability check and the labeling process can be further validated by periodically sampling generated tasks for human review.

3.5 Generative Evaluation as a Self-Improvement Engine

Generative evaluation need not be only a measurement mechanism — it can also provide a scalable training signal that enables models to improve continuously. A striking recent example is DeepSeek’s release of DeepSeekMath-V2

4. Discussion


4.1 Managing Errors in Generative Frameworks

One might worry: “Is an automated evaluation pipeline as accurate as human evaluation?” In practice, as data scales up, 100% accuracy becomes an impractical goal. When the test set is uncontaminated, the total error primarily comes from two sources:

- Sampling error, influenced by the number of tasks;

- System error, introduced by the generative evaluation process.

Human-curated evaluation carries zero system error, but due to the limited number of tasks, sampling error can be high. For example, if Model A and Model B perform equally well overall but excel in different areas, a small task set biased toward Model A’s strengths may misleadingly show it as superior.

In contrast, a generative evaluation system, while having some inherent system error, allows that error to be estimated and corrected. For instance, by testing the model on a small set of known wrong examples, we can measure its false pass rate $q_e$. Then, using the formula:

\[p = \frac{p_{\text{obs}} - \varepsilon \cdot q_e}{1 - \varepsilon}\]

we can recover a calibrated estimate $p$ of the model’s true performance. Here, $p_{\text{obs}}$ is the observed pass rate, and $\varepsilon$ is the error rate of the generative process.
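One way to read this formula is as the inverse of a mixture: if a fraction $\varepsilon$ of generated tasks is flawed and the model "passes" those at rate $q_e$, then $p_{\text{obs}} = (1 - \varepsilon)\,p + \varepsilon\,q_e$, and solving for $p$ gives the expression above. The sketch below applies it to made-up numbers. The function name and the clamping to $[0, 1]$ are additions for illustration, not part of the original formula.

```python
def calibrated_pass_rate(p_obs, eps, q_e):
    """Recover p from p_obs = (1 - eps) * p + eps * q_e.

    p_obs: observed pass rate on generated tasks
    eps:   assumed fraction of flawed generated tasks (must be < 1)
    q_e:   measured false pass rate on known-wrong examples
    """
    if not 0.0 <= eps < 1.0:
        raise ValueError("eps must be in [0, 1)")
    p = (p_obs - eps * q_e) / (1.0 - eps)
    # Clamp because noisy estimates can push p slightly outside [0, 1].
    return min(1.0, max(0.0, p))

# Hypothetical measurements, chosen only to illustrate the correction.
p = calibrated_pass_rate(p_obs=0.62, eps=0.05, q_e=0.20)
print(f"calibrated pass rate: {p:.4f}")
```

When `eps` is zero the function returns `p_obs` unchanged, which matches the intuition that a flawless pipeline needs no correction.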

Moreover, with a sufficiently large and diverse set of tasks, the sampling error of the generative system becomes negligible. Therefore, by combining scalable task generation with systematic error correction, we can achieve a more reliable evaluation framework, even if it requires embracing a small amount of controlled noise.
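The claim about sampling error can be illustrated with the standard error of a pass rate. This sketch assumes tasks are independent and identically distributed, so the binomial formula $\sqrt{p(1-p)/n}$ applies; real task sets with correlated items will shrink more slowly. The task counts are arbitrary examples.

```python
import math

def standard_error(p, n):
    """Binomial standard error of a pass rate p estimated from n independent tasks."""
    return math.sqrt(p * (1.0 - p) / n)

# Compare a small hand-curated set with progressively larger generated sets.
for n in (100, 10_000, 1_000_000):
    se = standard_error(0.5, n)
    print(f"n={n:>9,}: standard error = {se:.4f}")
```

Because the error shrinks with the square root of the task count, a hundredfold increase in tasks cuts the uncertainty by a factor of ten.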

4.2 Potential Influence on Society

Static datasets inevitably suffer from inherent human bias, conflicts of interest, and financial incentives

Generative Evaluation offers a critical path to mitigate these external biases by automating and standardizing the task creation process, potentially utilizing multiple LLM generators to further diversify and neutralize output biases.

4.3 Limitations & Future Work

Generative evaluation still relies heavily on human priors to pick variables. Previously, datasets like Dynabench used human annotators to manually flag adversarial examples where models failed
