Don't Look Up (Every Token): Escaping Quadratic Complexity via Geometric Patterns and Algorithms

Large Language Models (LLMs) have brought about a significant change in the field of artificial intelligence, where they have transitioned in scope from being specialized research tools to common resources that drive the next generation of software. With increasing model parameters and training data, LLMs demonstrate new abilities in reasoning, code generation, and solving complex problems that were once considered unattainable. However, scaling these models effectively for long-context applications uniquely poses a challenge. This is primarily due to the inherent limitations of the self-attention mechanism, which has quadratic time complexity. This quadratic bottleneck hinders applications for long documents, high-resolution images, and large codebases, among others. However, what is interesting to observe is that effectively only a few parameters are used in token computation, and most calculations are sparse. Hence, sparsity emerges as an effective solution to this problem. Rather than relying on the entire attention matrix, one can utilize an approximate or sparse version of attention to achieve almost the same results much faster. The backbone of this approach is the idea that tokens do not require the entire context; they only need local context, and thus, most of the computation carried out is wasteful. In this blog, we analyze the types of attention patterns that emerge and how to use them to our advantage for faster and efficient LLMs.

Introduction

The foundation of modern Large Language Models is the Self-Attention mechanism, first introduced in Attention Is All You Need

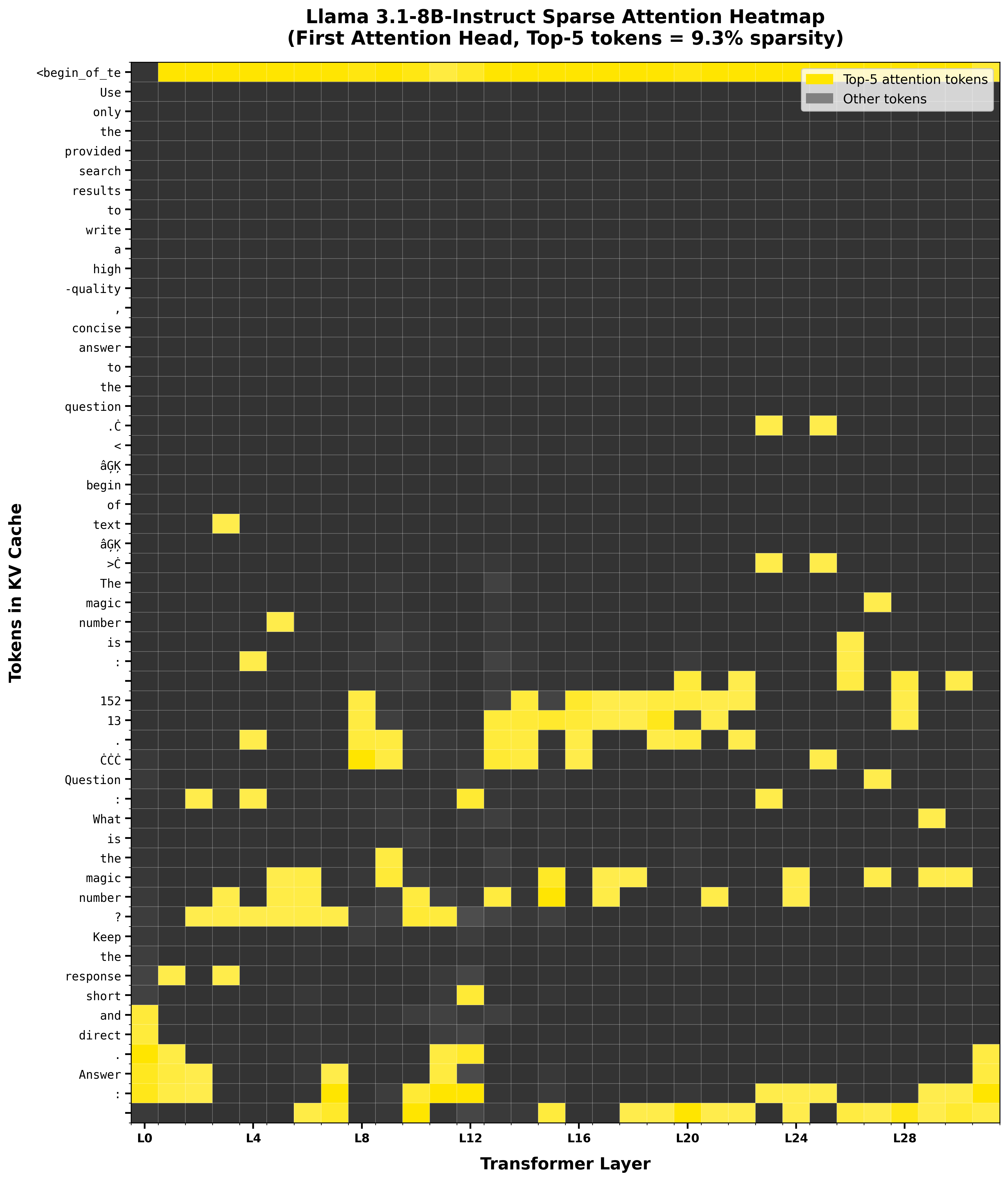

Figure 1: Attention heatmaps showing naturally sparse patterns in dense models. Even with a full attention budget, models learn to concentrate attention on specific tokens rather than distributing it uniformly.

However, recent observations suggest that token generation relies on a sparse subset of effective interactions rather than the whole parameter space. Consequently, sparsity presents a robust solution to the scaling bottleneck. By substituting the exhaustive $N \times N$ attention matrix with sparse approximations, one can maintain performance fidelity while significantly reducing latency. We can verify this hypothesis by examining the token heatmaps. When we visualize the attention layers of standard, dense models, they often reveal a fascinating insight: even when given the budget to look everywhere, these models learn naturally sparse patterns as observed in figure 1. Attention is not uniformly distributed, but rather concentrated on a few specific tokens. This finding serves as the foundation for the efficacy of sparse attention methods. If regular self-attention is inherently sparse in long contexts, then methods that rely on sparse attention serve as a more efficient architecture that relies on established patterns.

Preliminaries

Self-Attention

Simple attention (i.e. a single attention layer) processes the entire sequence simultaneously, such that every element attends to every other element

The self-attention output Z is a weighted sum of the value vectors, where weights are determined by the compatibility of the query and key.

\[Z = \text{Attention}(Q, K) \cdot V\]For individual tokens, the attention score $a_{m,n}$ represents the relevance of token $n$ (key) to token $m$ (query):

\[a_{m,n} = \frac{\exp(q_m \cdot k_n / \sqrt{d_k})}{\sum_{j=1}^{L} \exp(q_m \cdot k_j / \sqrt{d_k})}\]

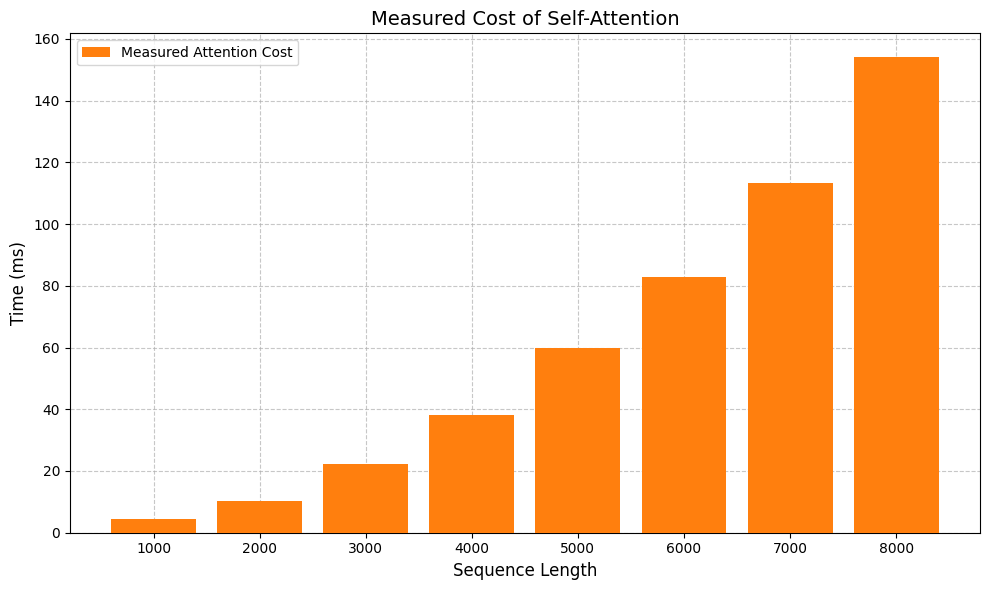

Figure 2: Latency scales super linearly with sequence length

This mechanism has a computational complexity of $O(L^2)$, as it computes interactions between all $L \times L$ pairs

The mask $M$ is defined as:

\[M_{ij} = \begin{cases} 0 & \text{if } i \geq j \\ -\infty & \text{if } i < j \end{cases}\]The masked attention is then computed as:

\[\text{Attention}_{\text{causal}}(Q, K, V) = \text{softmax}\left( \frac{QK^T}{\sqrt{d_k}} + M \right) V\]This mask zeroes out the upper triangular portion of the attention matrix. Consequently, 50% of the possible connections in the matrix are empty excluding the diagonal. This means we are performing $O(L^2)$ computations but discarding half of the results. The information propagates through the depth of the network i.e. a token at layer l aggregates information from layer $l−1$, which in turn aggregated from $l−2$.

Attention Sinks

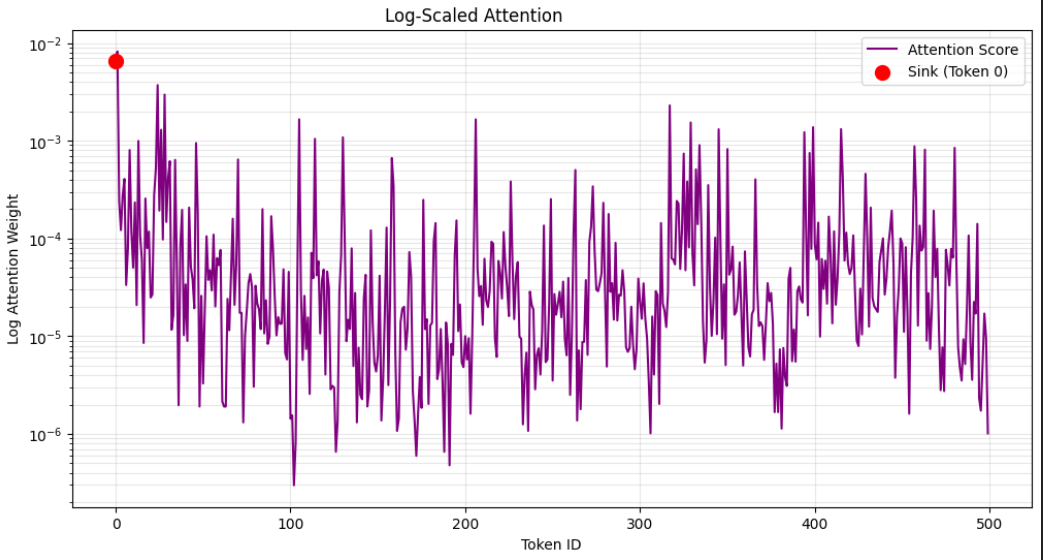

Figure 3: Graph showing the unusually high attention score for the <bos> token as compared to the other tokens, the attention scores are in log scale

We observe that a large amount of attention score is allocated to the beginning tokens as seen in Figure 3, especially the <bos> token. Upon experiments, it was further observed that this pattern sustains regardless of the relevance of the tokens to the language modeling task. These tokens were thereby termed as “attention sinks”

The Problem

The core issue addressed by attention sinks is “over-mixing”. A Transformer operates on the principle of repeatedly mixing information between tokens at every layer. While this is a necessary principle for understanding context, it becomes a problem in very deep models or when processing long sequences or a large context. After too many layers of mixing, the unique information for each token can become “smoothened out”, and all the tokens start to look the same to the model. This exhibits the phenomena of Rank collapse (or Over-smoothing, in case of GNNs), i.e., representations of all tokens in a sequence become too similar to each other as they pass through the model’s layers

- The Sink as a neutral target: The model learns that the first token is a reliable, ever-present, and neutral target.

- Low-Information value: The value vector associated with this token is often learned to have a very small norm, indicating that it contains very little information.

- Skipping the update: When an attention head wants to avoid mixing more information into a token, it simply directs all its attention to the sink. It picks up the low-information value, and when that is added back to the token’s representation, it changes it very little (it’s like adding zero). The token effectively skips the mixing step in that layer, preserving its distinct information. This allows the model to dynamically control how much mixing occurs at each layer for each token, preventing the representations from becoming a blurry mess.

Implications on Sparse Attention

Attention Sinks directly constrain how we design sparse masks, as they are the most fundamental pattern to be handled. Many sparse methods like pure sliding windows or aggressive early-token eviction remove the first few tokens as the sequence grows. This unintentionally deletes the “do-nothing” path attention heads rely on to avoid over‑mixing. When the BOS/initial tokens vanish, heads are forced to mix into non‑sink positions, accelerating rank collapse and causing quality drops.

As illustrated by StreamingLLM

Beyond Softmax

While attention sinks are functionally necessary for current Transformer architectures to prevent model collapse, they are computationally expensive and destructive for optimization. They are a mathematical side effect as opposed to a deliberate feature. Softmax is traditionally used in Transformers to turn scores into a probability distribution, offering stability via dense gradients and a bounded norm. However, its sum-to-one normalization requires tokens that have no semantic relationship to any previous tokens (e.g., “The) to still allocate 100% attention somewhere, thus creating attention sinks. Primitive alternatives include: removing normalization with sigmoid (sinks return once normalization is reintroduced), adding trainable biases to Q/K/V (benefits fade in deeper models), or tweaking softmax with custom optimizers to tame outliers. One of the more effective solutions is Softpick

This led to “dying” heads (too many zeros) and still did not remove sinks; negatives had no gradient. The final Softpick version uses an absolute-valued denominator:

\[\text{Softpick}(\mathbf{x})_i = \frac{\text{ReLU}(e^{x_i} - 1)}{\sum_{j=1}^{N} |e^{x_j} - 1|}\]This allows gradient flow for negative inputs and breaks the sum-to-one constraint, mitigating sinks. But this introduces the problem of “underscoring” in long contexts: many negative tokens inflate the denominator and shrink scores, hurting retrieval.

Computational Overhead and Training Stability

Unlike a naive softmax backward pass that only requires retaining the outputs, Softpick’s derivative necessitates keeping or recomputing the inputs. Because the specific math functions used in Softpick (ReLU and absolute value) are easy to break down, the algorithm can process everything in one go without eating up memory. This means it works seamlessly with efficient hardware tools like FlashAttention.

In terms of training stability, Softpick alters the model’s dynamics by generating significantly higher initial gradient norm peaks compared to standard softmax. While standard gradient clipping can prevent immediate collapse, deeper scalability issues remain. For instance, attempting to fix the aforementioned “underscoring” issue by introducing learnable temperature coefficients or sequence length dependent scaling factors (e.g., Scalable Softpick) introduces new hyperparameters that can lead to training instability and ultimately worsen downstream performance. Furthermore, at larger scales (e.g., 1.8B parameters), models trained with Softpick suffer from an increased proportion of permanently “dead” or dormant attention heads, which reduces the effective capacity of the model.

Static Patterns of Attention

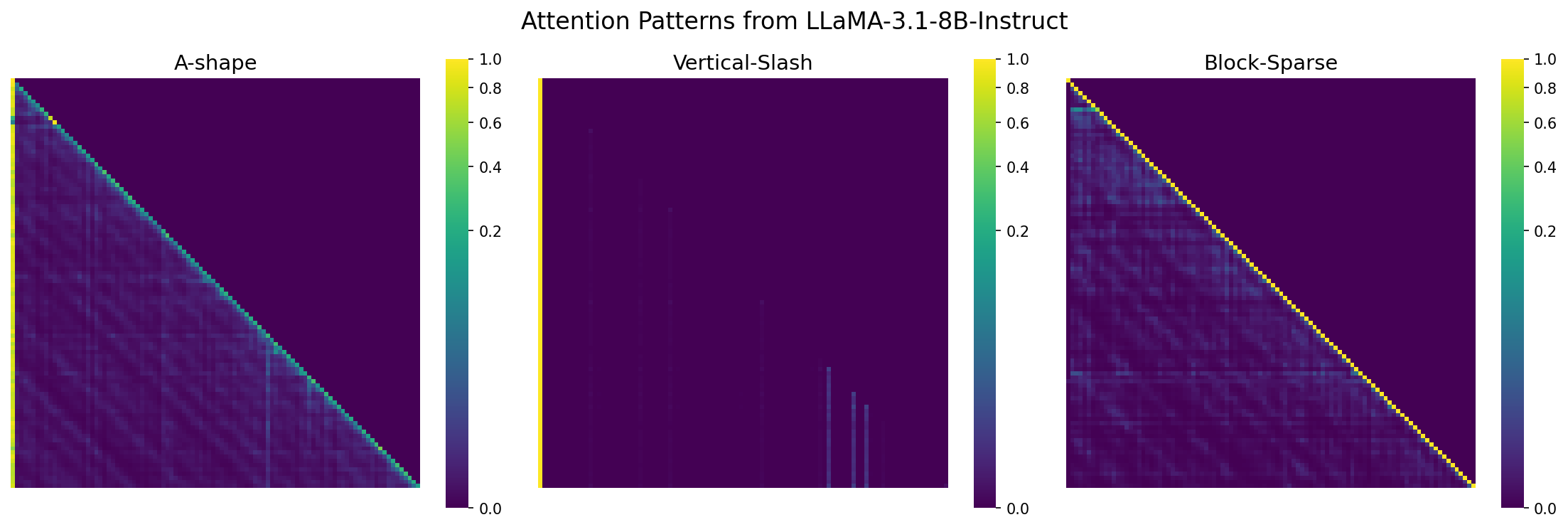

It may seem as if each input should have it’s unique attention pattern, however empirical evidence suggests that models consistently converge on a limited set of geometric archetypes. These recurring structures manifest primarily as diagonals, vertical lines, and dense blocks. By exploiting these predictable motifs, frameworks like MInference

Figure 4: Common sparsity patterns observed in attention matrices: A-shape (combining local and global attention), Vertical-slash (fixed-stride or dilated attention) and Block-sparse (sliding window with global blocks).

A-shape

The “A-shape” pattern is a method for selecting which token interactions are most important. It gets its name from the shape it forms when you visualize the sparse attention matrix. This structure often combines local and global information. For example, tokens may focus completely on their nearby neighbors, creating the wide base of the “A,” while also linking to a few carefully chosen “summary” tokens or positions earlier in the context, forming the peak. This “A” structure effectively connects detailed local context with a sparse but important long-range signal, without requiring a full quadratic computation.

Vertical-slash

The “vertical-slash” pattern offers another useful method. It gets its name from the way it looks in the attention matrix. This pattern often represents a “fixed-stride” or “dilated” attention mechanism. Instead of focusing on every previous token, which is too costly, or just a local window, which misses the bigger picture, a vertical-slash pattern may have each token attend to every nth token. This approach lets the model sample the entire context, regardless of its length. It creates a basic structure of long-range dependencies that is very efficient to compute.

Block-sparse

Perhaps the most intuitive of these patterns is block-sparse attention. Imagine the full, $N \times N$ attention matrix as a massive grid. Instead of calculating the entire grid, block-sparse attention only computes small, specific blocks within it. Most commonly, this includes a sliding window which consists of the blocks along the main diagonal. In this setup, each token only looks at its immediate neighbors. This approach is great for capturing local context. To avoid losing sight of the long-range view, this pattern is often improved by computing a few fixed global blocks. These blocks allow certain groups of tokens to communicate even if they are far apart in the sequence.

These pattern observations further allow us to refine our approaches in optimizing sparse attention methods.

MInference

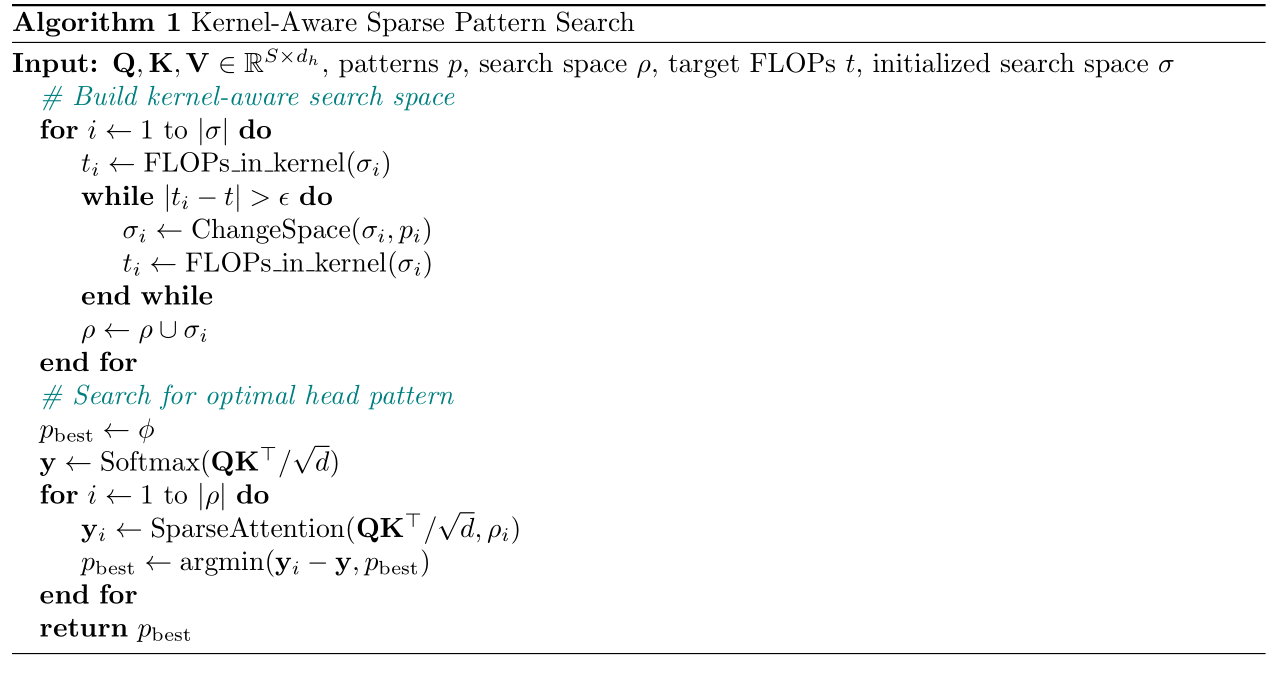

Their introduced methodology lead to a 95% decrease in FLOPs during attention computation. The first step is to assign the correct sparsity pattern to each attention head. MInference

Figure 5: From the paper MInference 1.0: Accelerating Pre-filling for Long-Context LLMs via Dynamic Sparse Attention

Once patterns are assigned, MInference distinguishes between static and dynamic execution strategies.

Offline (A-Shape): The A-shape pattern relies on local windows (neighboring tokens) and initial tokens (attention sinks). Since these indices depend only on relative positions and fixed starting points, the sparse mask is static. Therefore, no online approximation is needed; the mask is pre-determined offline, incurring zero overhead during inference.

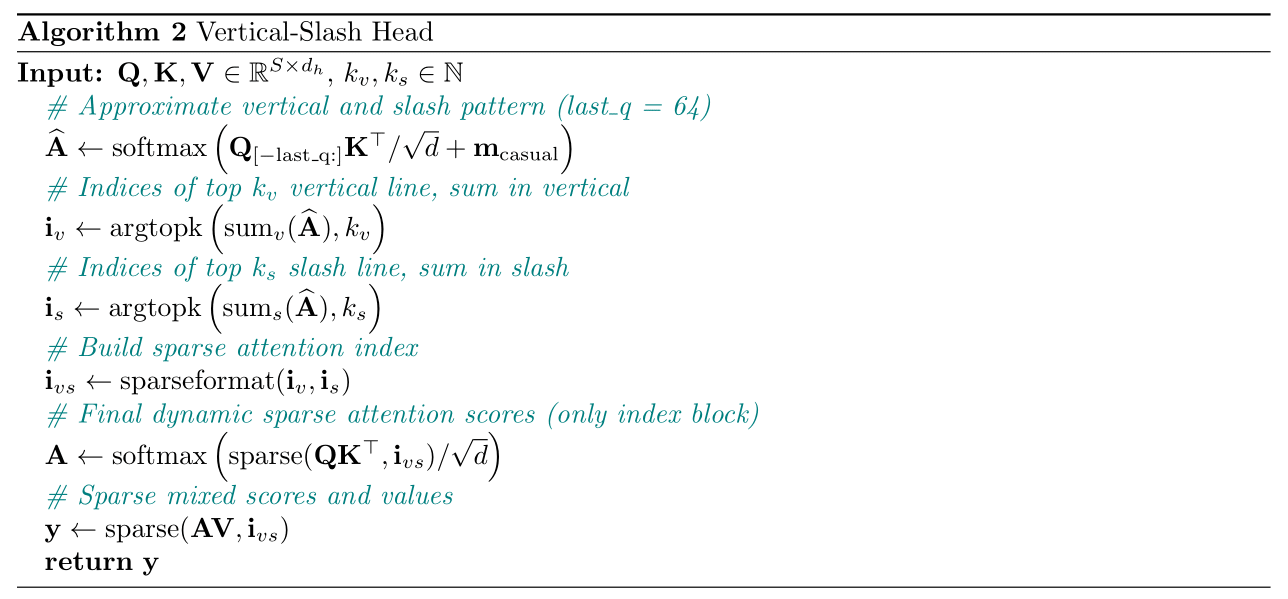

Online (Vertical-Slash & Block-Sparse): In contrast, the Vertical-Slash and Block-Sparse patterns are highly dynamic. A token might attend to specific “heavy hitter” tokens (vertical) or semantic clusters (blocks) that vary significantly depending on the input prompt. Consequently, MInference approximates these indices on the fly (online) for every new input.

For heads exhibiting the Vertical-Slash pattern, attention is concentrated on specific vertical lines (tokens attended by many others) and slash lines (periodic attention). To find these dynamically, MInference uses the continuity of these lines to its advantage. Instead of calculating the full matrix, it computes a small estimate using only the last few query vectors ($Q_{[-\text{lastQ}:]}$) and the full Key matrix as shown in Algorithm 2. The indices with the highest summed weights in this estimate determine the vertical and slash lines for the entire matrix.

Figure 6: From the paper MInference 1.0: Accelerating Pre-filling for Long-Context LLMs via Dynamic Sparse Attention

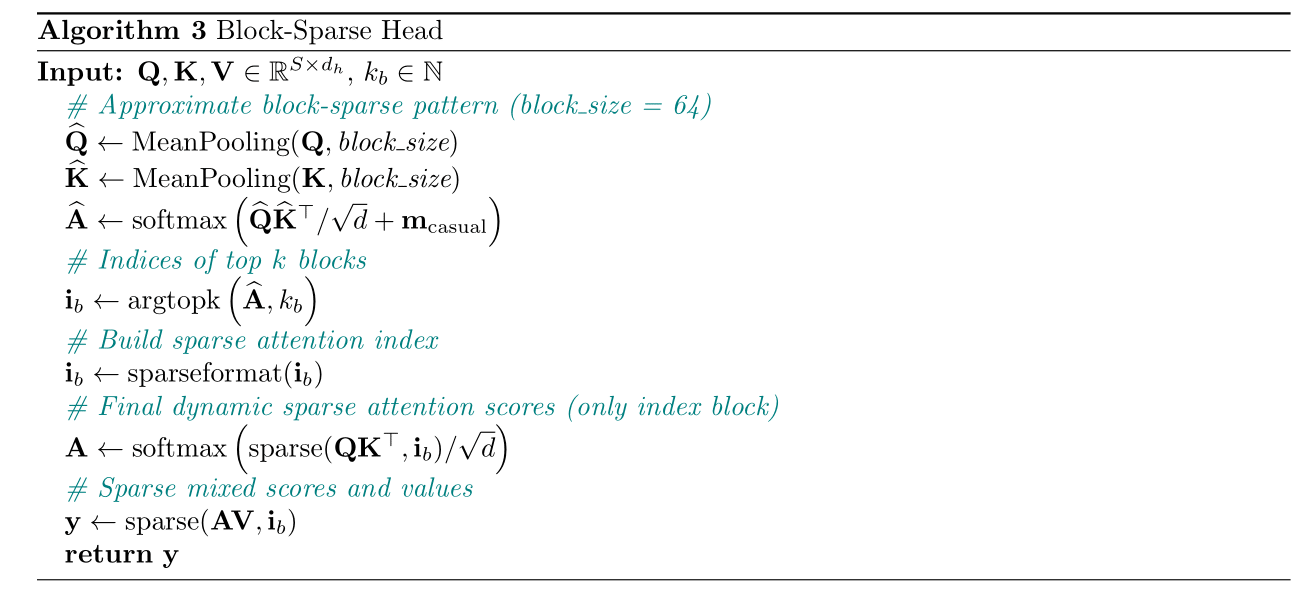

For the Block-Sparse pattern, where attention is spatially clustered, MInference uses a “low-resolution” view of the attention matrix as shown in Algorithm 3. It applies Mean Pooling to compress the Query and Key matrices into smaller blocks (e.g., 64×64). It then multiplies these compressed matrices to estimate block-level attention weights. This efficiently highlights the most important regions (blocks), allowing the model to compute full attention only for those specific high-value blocks.

Figure 7: From the paper MInference 1.0: Accelerating Pre-filling for Long-Context LLMs via Dynamic Sparse Attention

XAttention

Instead of treating these patterns as distinct categories, XAttention

Validation via Learned Gates

Unlike methods that enforce a shape from the start, SeerAttention

Sliding Window Attention

The most intuitive example of this class is Sliding Window Attention (SWA). Here, the attention matrix is restricted to a band along the diagonal: each query token $q_i$ only attends to a fixed-size window of key tokens $k_j$ where $\lvert i-j \rvert \le w$. This effectively relies on the “locality” heuristic that a token’s most relevant information is its immediate neighbors.

Methods like SWA, dilated attention, and fixed-block patterns share a key characteristic:

Regularity. Because the sparsity pattern is known ahead of time (e.g., $i \pm w$), indices can be pre-computed and memory access can be coalesced. This allows for efficient implementation using custom GPU kernels, minimizing the overhead of indirect addressing that plagues unstructured sparsity. However, strict locality introduces a challenge. In SWA, direct interaction between distant tokens (where $\lvert i-j \rvert \gg w$) is prohibited within a single layer. Information must “hop” from block to block across multiple layers to travel the length of the sequence. This multi-hop path causes signal attenuation and hinders the model’s ability to capture long-range dependencies effectively.

Star Attention: A method that mitigates this propagation issue while maintaining the efficiency of neighborhood-based methods

- Local Block: Its immediate neighbors (Standard SWA).

- Anchor Block: A shared global summarizer (e.g., the first block) that acts as a hub in a star-graph topology, also handles the problem of attention sinks.

This two-phase approach allows any query to access a summarized version of global information immediately, effectively eliminating the need for multi-hop propagation. This leads to a 11x speedup, as compared to normal self-attention.

Dynamic Sparsity

The limitation of the methods above is that they are structurally fixed. They apply the same geometric rule regardless of the text. So we look at Dynamic Sparsity, content‑aware masks that reveal patterns less geometric and more temporal. Think of it as rhythms over shapes, the propagation of attention across layers follows consistent trends, even as the mask adapts to the input. Formally, the attention pattern $S$ becomes a function of the input: $S=f(Q,K,V)$, letting the model allocate computation to the most relevant interactions for that specific sequence.

Tidal Decode

An implemented example of this is Tidal Decode

Through attention heatmaps like for Llama 3.1 8B Instruct in fig. 1, we observe that high attention scores are usually localized in two regions: the beginning and in this case, the middle. So, token selection layers are placed in these regions to sample the top-k most significant tokens. This significantly optimizes not only the attention computation but also the sampling process, leading to a 2.1x reduction in inference latency.

The Heavy Hitter Hypothesis

Attention scores follow a Power-Law distribution, meaning a small subset of “Heavy Hitter” tokens contribute the vast majority of value

Eviction Policy: The retention strategy is formulated as a dynamic submodular problem. At each decoding step $i$, the algorithm calculates a normalized score based on the current cache:

\[o_i := D_{i-1} \cdot \exp(Q_{i,:} (KS_{i,:})^\top)\]In this equation, $S_i$ denotes the subset of token indices currently retained in the KV cache constrained by budget $k$, and $D_i$ is the scalar normalization factor computed by summing the exponential attention scores of the keys present in $S_i$.

The algorithm applies a greedy policy without accessing future tokens:

- Cache Not Full: The current token is simply added to the set.

- Cache Full: The algorithm evicts the token $u$ with the lowest accumulated attention score to make room.

Theoretical Guarantees: This method is backed by a theoretical bound proving it is not just a heuristic. It guarantees performance relative to an optimal policy (one with infinite resources):

\[f(S_i) \geq (1-\alpha)(1-1/e) \cdot opt_i - \beta\]This indicates the policy achieves at least $\sim 63\%$ (i.e., $1-1/e$) of the optimal value, adjusted for the accumulated error between decoding steps ($1-\alpha$).

Dynamic KV Cache Management

KV Caching

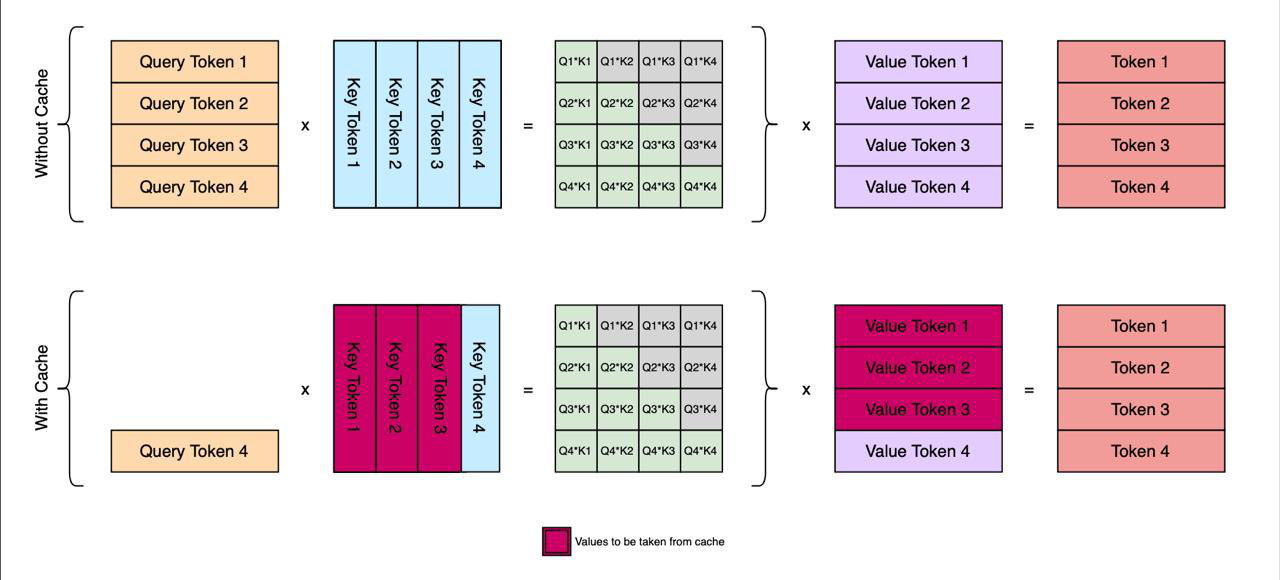

In normal autoregressive generation (predicting one token at a time), re-computing the Key and Value matrices for the entire history at every step is inefficient

where $d$ is the head dimension and $m_\text{causal}$ enforces causality. KV caching removes the need to recompute $K,V$ for tokens $1\ldots t-1$, but the cache itself grows linearly with $t$ across heads and layers

for keys and values, which becomes a bottleneck for long contexts. This is why later sections focus on pruning, clustering, or episodic reuse of cached states rather than storing everything. Additionally, methods like EPIC

Figure 8: A comparison of attention computation showing how KV Caching (bottom) improves efficiency by storing previous Key and Value states (highlighted in dark pink) for reuse. Unlike the standard approach (top) which re-calculates all interactions, the cached approach allows the model to only process the newest query (Query Token 4) against the stored history to generate the next token.

Optimizing KV Cache

In classical Transformers, while computing self-attention scores during the prefilling stage, the norm is to store the KV cache to enable efficient inference, i.e, reducing redundant calculations

- The cache grows linearly with context length. In the above case, the cache grows significantly, leading to out of memory errors (OOMs).

- As originally observed, in any context, the attention pattern is sparse, leading us to conclude that for a particular query, parts of the context are entirely irrelevant.

This implies that an Optimal Policy (or Oracle) exists that would retain only this tiny, critical fraction of the context and discard the rest without losing accuracy. Since we cannot predict the future to know exactly which tokens the “Optimal Policy” would choose, methods like H2O (Heavy-Hitter Oracle) replicate this behavior by using past attention as a proxy for future importance. The intuition is that tokens which are important once tend to remain important. H2O dynamically retains tokens that have accumulated high attention scores over time (the “Heavy Hitters”) and evicts those that do not.

These types of dynamic approaches ensure that while the total memory usage remains constant (avoiding OOMs), the cache effectively “evolves” to contain the most relevant information for the ongoing conversation.

Squeezed Attention

This method targets fixed-document contexts with user queries

Because centroid lookups add overhead, retrieval scales via hierarchical clustering: first form fine‑grained clusters (Level 2), then cluster those into coarse groups (Level 1). At inference, score Level 1, select a subset to meet a target sparsity (e.g., 90%), then refine within Level 2 to pick final keys.

\[S_l^{(2)} = \frac{\exp \left(q C_l^{(2)\top}\right)}{\sum_m N_m^{(2)} \cdot \exp \left(q C_m^{(2)\top}\right)}\]However, the squeezed attention method again introduces reliance on hyperparameter tuning, which is dependent on the input context and varies across any dataset with multiple samples. A further optimisation that could be done is to automate the hyperparameter tuning process. Additionally, the clustering process is very slow, rendering the method ineffective when context is provided online in real-time. Further work can also be done to address this limitation, thereby removing the fixed context requirement.

Epicache

While Squeezed Attention offers an effective solution for static, long-context documents, it runs into a critical limitation: it relies on “post-prefill” processing. The model must first load the entire context into memory to cluster the keys, leading to unbounded peak memory usage. Which proves to be problematic. Epicache

Methodology

First we partition the conversation $H={u_1,\dots,u_{N_u}}$ into $K$ segments of size $w_{embed}$:

\[K = \left\lceil \frac{N_u}{w_{embed}} \right\rceil\]Encode segments to embeddings ${e_k}$ and cluster into $E$ topical episodes:

\[C(\{e_k\}_{k=1}^K) \rightarrow \{\mathcal{E}_1, \dots, \mathcal{E}_E\}\]Use the medoid of each episode (closest to its centroid) as a patched prompt for scoring.

The second step is to prefill block‑wise per episode: process blocks of size $M$, append the patched prompt, and score tokens by the maximum attention they receive from prompt queries:

\[s_i^{max} = \max_{t \in [n+1, n+p]} Attn(x_t \to x_i)\]Allocate the global budget by layer using sensitivity $\sigma_l$ (cosine similarity between full‑context and block‑prefill keys). With $s_l = 1 - \sigma_l$ and sharpness $\alpha$:

\[M_l^{alloc} = \frac{s_l^\alpha}{\sum_{l'} s_{l'}^\alpha} (L \cdot M), \qquad \sigma_l = \frac{1}{H N} \sum_{h=1}^{H} \sum_{i=1}^{N} \cos \big( \mathbf{k}_{full,i}^{(l,h)}, \mathbf{k}_{block,i}^{(l,h)} \big)\]This prioritizes layers most perturbed by compression; $\alpha$ controls how sharply budget concentrates (e.g., 1.1 for Llama, 1.3 for Qwen).

And finally during decoding, the query is embedded into the same vector space as the clusters, where a similarity search is performed to identify the most relevant episodes. The system then retrieves the pre-computed episodic caches, which are used to generate the output.

Emperical Evaluation of the Speed-Accuracy Trade-off

While the theoretical efficiency of these geometric and algorithmic sparse patterns is clear, practical deployment requires balancing acceleration with the “retrieval tax” i.e, the slight degradation in accuracy when we rely on the hueristics and the patterns mentioned above. To provide a unified comparison, the Table 1 evaluates these methods (all numbers were taken directly from the papers) across standard long-context benchmarks (such as LongBench, RULER, and Needle-In-A-Haystack).

As shown below, static geometric patterns excel at massive pre-fill acceleration, while dynamic and KV-cache methods provide steady gains during the decoding phase with near-lossless memory retention. Note that all methods can be made compatible with Flash Attention, as long as the neccessary kernels are written to handle sparse positional ID’s.

| Framework | Max Speedup | Inference Phase | Memory/Cache Reduction | Accuracy Trade-off |

|---|---|---|---|---|

| X-Attention | 13.5x | Attention | Cache is of same size | within 1-2% of the dense full-attention baseline on RULER |

| MInference | 10x | Pre-fill | 95% FLOPs decrease | 100% on NIAH (128k); ~1.2 points drop on LongBench |

| Star Attention | 11x | Overall | Cache is of same size | 96-99% retention on LongBench tasks |

| SeerAttention | 7.3x | Attention | Cache is of same size | ~0.41% drop on RULER, near-lossless on PG19 (32k) |

| TidalDecode | 2.1x | Decoding | 99.5% token sparsity | 100% on NIAH (100k); within 0.3 points on LongBench |

| H2O | 1.9x-3x | Throughput | Up to 20x reduction | Retains ≥95% performance; <1% drop in zero-shot accuracy |

| Squeezed Attention | >4x | Prefill & Decode | User-defined (e.g. 90%) | <0.11% drop at 70% sparsity on Longbench |

| EpiCache | 2.4x | Decoding | 3.5x peak reduction | <0.5% perplexity degradation, ≤1.5% drop on LongBench |

Table 1: Comparison of Inference Frameworks by Speedup, Inference Phase, Memory Reduction, and Accuracy Trade-offs

Conclusion

Transformers underperform not because they can’t reason, but because dense attention scales quadratically. The way through isn’t “more compute”, it’s embracing the fact that models rarely need to attend everywhere at once. Sparsity turns this observation into a principle: spend compute where it matters, skip where it doesn’t. Across the post, three threads converge:

- Mathematical Reformulation: Rethink normalization (e.g., Softpick) to reduce sink-driven outliers while keeping gradients healthy.

- Geometric Patterns: Lean on recurrent shapes (A‑shape, verticals, blocks) to prune decisively with minimal loss.

- Dynamic Memory: Treat KV as a living library where we prioritize heavy hitters, cluster contexts, and cache episodes, not raw logs.

Put together, these ideas sketch a practical blueprint for long‑context systems:

- Keep anchors: Always retain initial tokens/sinks to preserve skip‑mix stability.

- Mix local + global: Pair diagonal windows with sparse global links (A‑shape/star) to avoid multi‑hop dilution.

- Select, don’t store: Use thresholds, clustering, and episodic caches to bound memory while preserving recall.

The outcome is a transformer that thinks in long horizons without drowning in its own history. As tooling and kernels improve, expect “sparse‑first” stacks where attention masks, cache policies, and retrieval all cooperate in a fast, stable, and precise manner at scale.

PLACEHOLDER FOR ACADEMIC ATTRIBUTION

BibTeX citation

PLACEHOLDER FOR BIBTEX