In-context learning of representations can be explained by induction circuits

Park et al., 2025 find that when large language models process random walks on a graph in-context, the geometry of their token representations comes to mirror the graph's connectivity structure. This suggests a two-step mechanism: the model first reshapes its representations to reflect the graph structure, then operates over them to predict valid next steps. We offer a simpler mechanistic explanation. The in-context graph tracing task can be solved by induction circuits, which implement a simple rule: if token B followed token A earlier in the sequence, predict B the next time A appears. Since consecutive tokens in a random walk are always graph neighbors, this rule naturally produces valid steps. Ablating the attention heads that comprise induction circuits substantially degrades task performance, compared to ablating random heads. As for the geometric structure of representations, we propose that induction circuits can account for this too: their previous-token heads mix each token's representation with that of its predecessor, always a graph neighbor in a random walk. We show that a single round of such 'neighbor mixing' applied to random embeddings is sufficient to recreate the graph-like PCA structure. These results suggest that the observed representation geometry is a byproduct of how the model solves the task, not the means by which it does so.

Park et al., 2025

Recapitulation and reproduction of Park et al., 2025

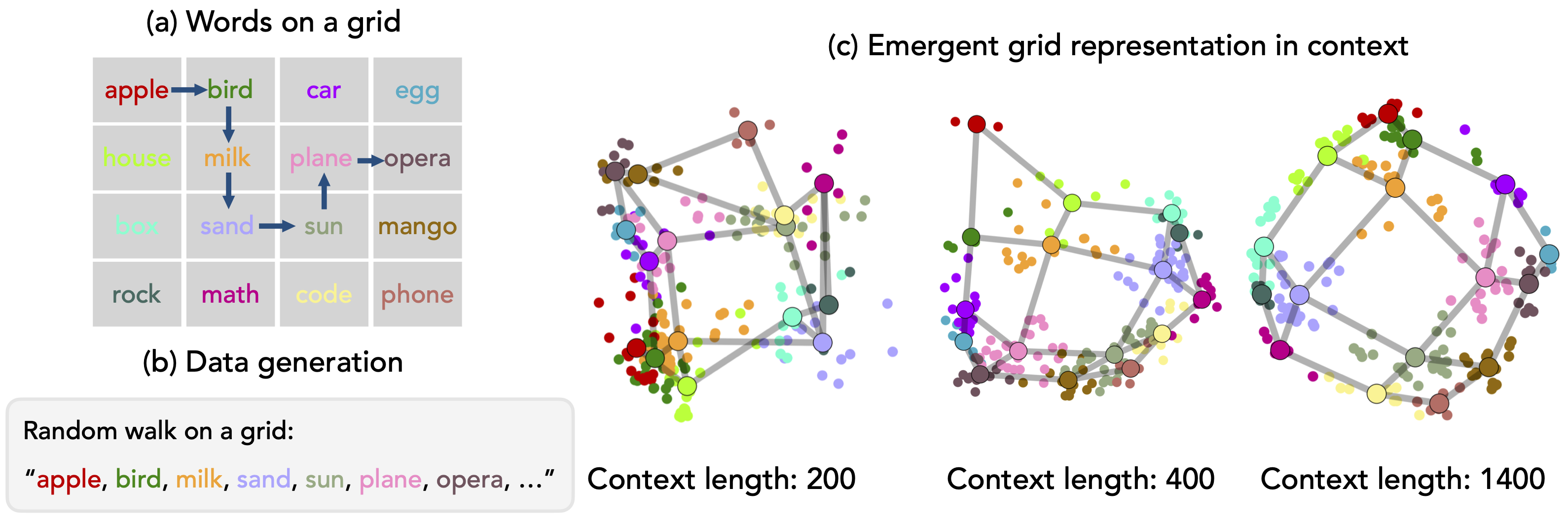

We begin by describing the experimental setup of Park et al., 2025

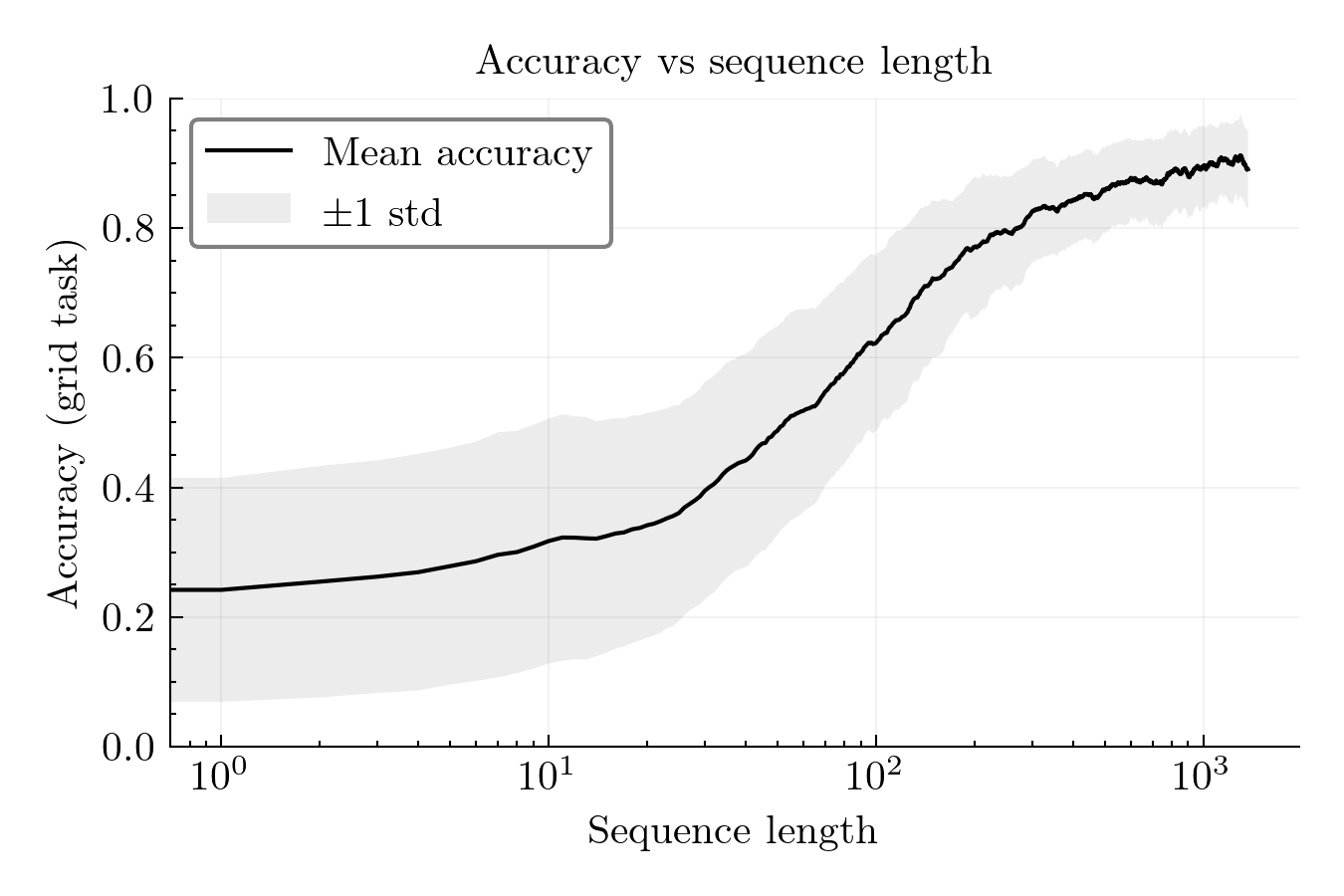

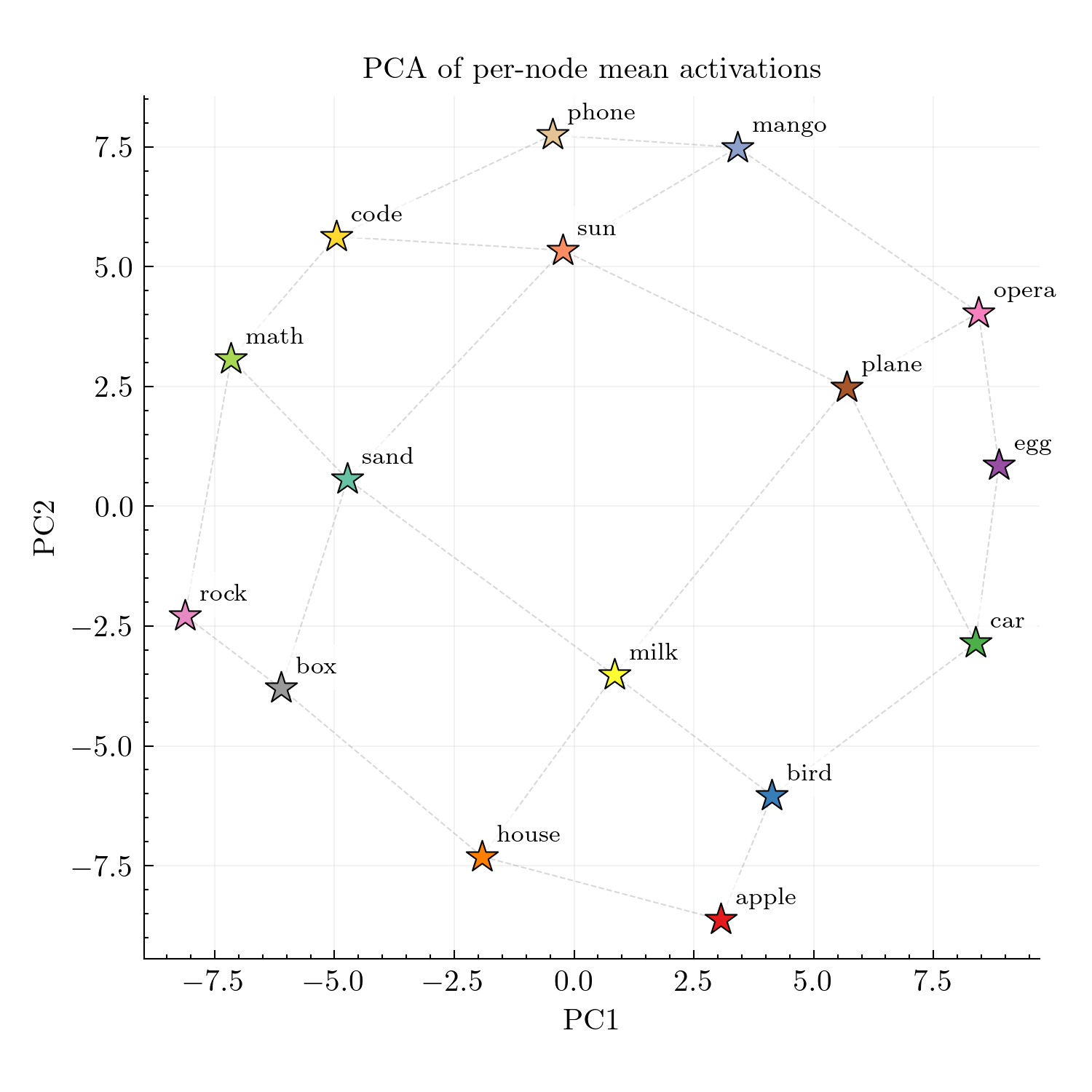

apple bird milk sand sun plane opera ...) where consecutive words are always neighbors. As the sequence length grows, the model begins to predict valid next words based on the graph structure. (c) Surprisingly, the geometry of the model's effective token representations mirrors that of the grid structure: the model comes to represent each node adjacent to its neighbor in activation space. Figure reproduced from Park et al.

The grid tracing task

Park et al. introduce the in-context graph tracing task. The task involves a predefined graph \(\mathcal{G} = (\mathcal{T}, E)\) where nodes \(\mathcal{T} = \{\tau_1, \tau_2, \ldots, \tau_n\}\) are referenced via tokens (e.g., apple, bird, math, etc.). The graph’s connectivity structure \(E\) is defined independently of any semantic relationships between the tokens. The model is provided with traces of random walks on this graph as context and must predict valid next nodes based on the learned connectivity structure. While Park et al. study graph tracing on three different graph structures, we focus exclusively on their \(4{\times}4\) square grid setting (Figure 1). We provide details of the experimental setup below; our methodology always follows Park et al. except when otherwise noted.

Grid structure. The task uses a \(4{\times}4\) grid of 16 distinct word tokens: apple, bird, car, egg, house, milk, plane, opera, box, sand, sun, mango, rock, math, code, phone. apple is a single token). Sequences are tokenized with a leading space before the first word, ensuring single-token-per-word encoding.

Random walk generation. Sequences are generated by random walks on this grid: starting from a random position, the walk moves to a uniformly random neighbor at each step. This produces sequences like apple bird milk sand sun plane opera ... where consecutive words are always grid neighbors. Following Park et al., we use sequence lengths of 1400 tokens.

Measuring accuracy. At timestep \(t\), the walk is at node \(w_t\) with neighbors \(\mathcal{N}(w_t)\), and the model outputs a distribution \(p_{\theta}(\cdot \mid w_{1:t})\) over vocabulary tokens. Following Park et al., we define “rule following accuracy” as the total probability mass assigned to valid next nodes:

\[\text{acc}_t \;=\; \sum_{w \in \mathcal{N}(w_t)} p_{\theta}(w \mid w_{1:t}).\]PCA visualization. To assess whether the model’s representations come to resemble the grid structure, we extract activations from a late layer (layer 26 out of 32). For each of the 16 words, we compute a class-mean activation by averaging over all occurrences in the final 200 positions of the sequence. We then project these 16 class-mean vectors onto their first two principal components for visualization. If the representation geometry reflects the grid, neighboring tokens should appear nearby in this projection.

Reproduction and Park et al.’s interpretation

Figure 2 shows our reproduction of Park et al.’s main results on Llama-3.1-8B.

Park et al. interpret these findings as evidence that the geometric reorganization plays a functional role in task performance: the model learns the graph structure in its representations, and this learned structure is what enables accurate next-node predictions.

“We see once a critical amount of context is seen by the model, accuracy starts to rapidly improve. We find this point in fact closely matches when Dirichlet energy

Dirichlet energy measures how much a signal varies across graph edges. Low energy means neighboring nodes have similar representations, so Park et al. use it to quantify how well the model's representations respect the graph structure. reaches its minimum value: energy is minimized shortly before the rapid increase in in-context task accuracy, suggesting that the structure of the data is correctly learned before the model can make valid predictions. This leads us to the claim that as the amount of context is scaled, there is an emergent re-organization of representations that allows the model to perform well on our in-context graph tracing task.”— Park et al. (Section 4.1; emphasis in original)

A simpler explanation: induction circuits

We propose that the grid tracing task can be solved by a much simpler mechanism than the in-context representation reorganization posited by Park et al.: induction circuits

An induction circuit consists of two types of attention heads working together. Previous-token heads attend from position \(t\) to position \(t{-}1\), copying information about the previous token into the current position’s residual stream. Induction heads then attend to positions that follow previous occurrences of the current token. Together, they implement in-context bigram recall: “if \(B\) followed \(A\) before, predict \(B\) when seeing \(A\) again.”

In the grid task, if the model has seen the bigram apple bird earlier in the sequence, then upon encountering apple again, the induction circuit can retrieve and predict bird. Since consecutive tokens in a random walk are always grid neighbors, every recalled successor is guaranteed to be a valid next step. With enough context, the model will have observed multiple successors for each token, and can aggregate over these to assign probability mass to all valid neighbors. apple bird and apple house, it can distribute probability across both bird and house when predicting the next token after apple.

Testing the induction hypothesis

If the model relies on induction circuits to solve the task, then ablating the heads that comprise them should substantially degrade task performance. We test this via zero ablation: setting targeted attention heads’ outputs to zero and measuring the causal impact on both task accuracy and in-context representations.

Head identification. Following Olsson et al., 2022

Ablation procedure. For each head type, we ablate the top-\(k\) heads for \(k \in \{1, 2, 4, 8, 16, 32\}\) and measure impact on task accuracy and representation geometry. As a control, we ablate random heads sampled from all heads excluding the top 32 induction and top 32 previous-token heads. All accuracy curves are averaged over 16 random walk sequences (one per grid starting position). The random head control additionally averages over 4 independent sets of 32 heads.

Results

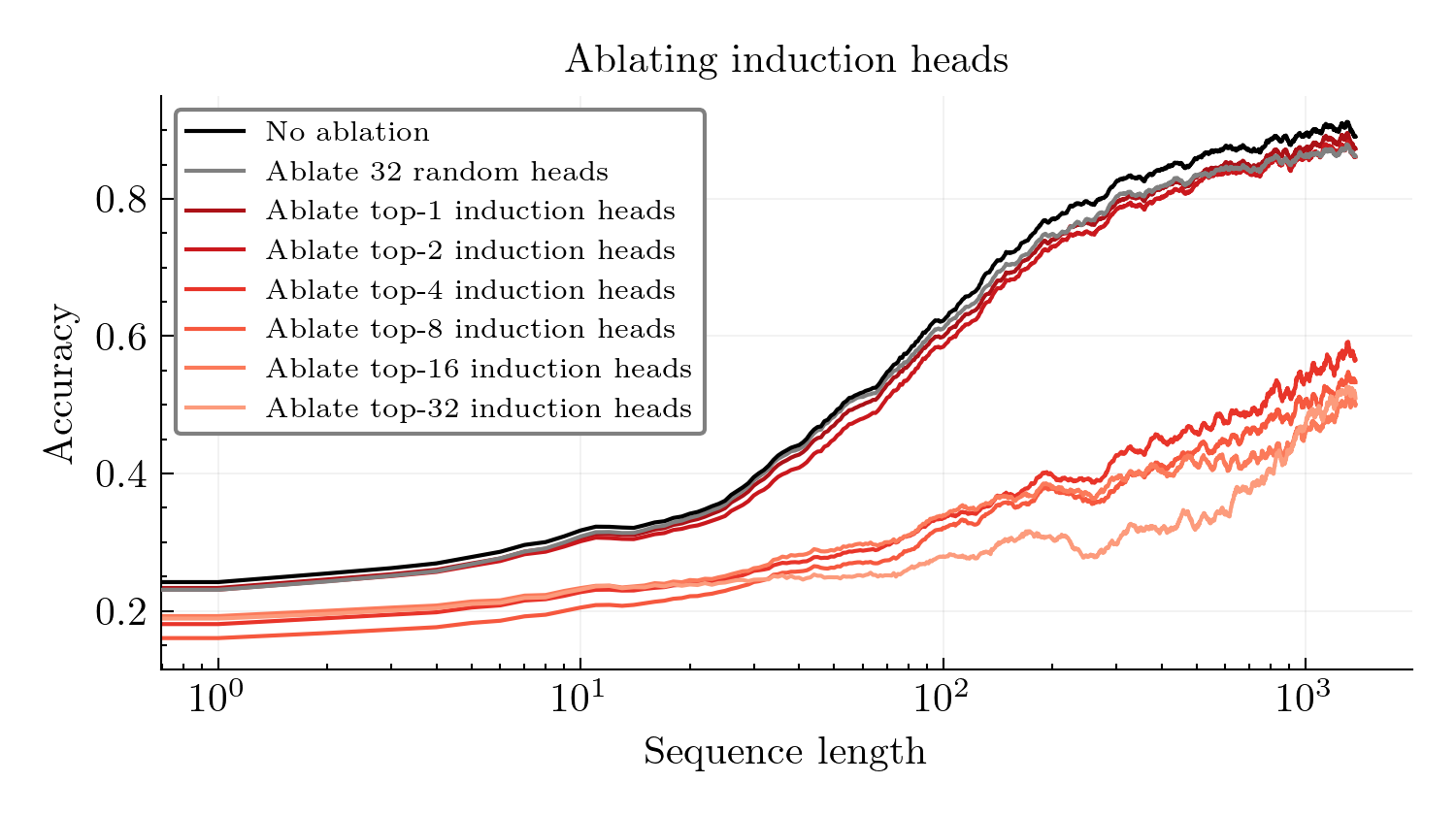

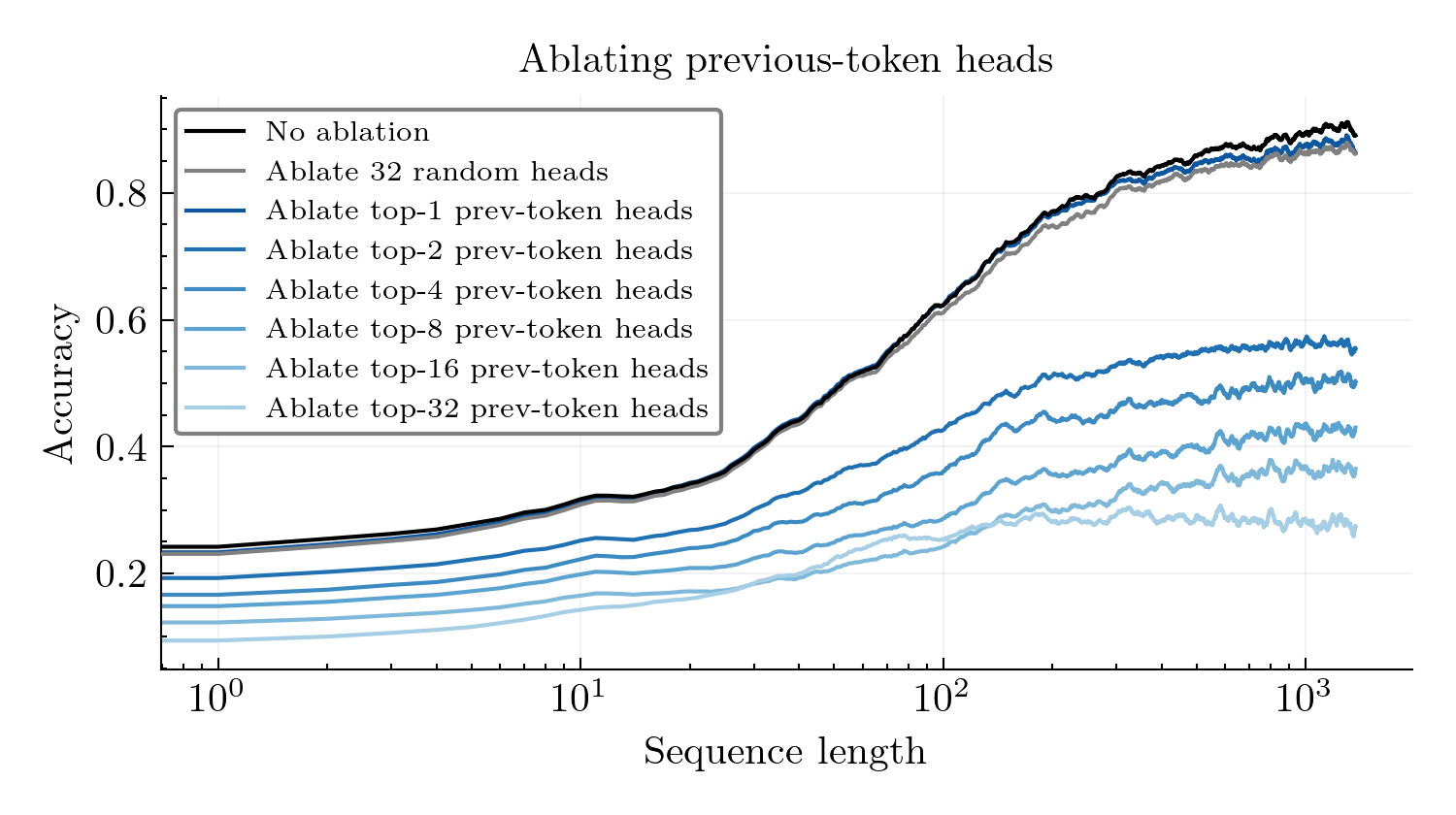

Both induction heads and previous-token heads are critical to task performance. Figure 3 shows task accuracy under head ablations. Ablating the top-4 induction heads causes accuracy to drop from \({\sim}0.90\) to \({\sim}0.60\), and ablating the top-32 drops accuracy all the way to \({\sim}0.40\). Ablating just the top-2 previous-token heads reduces accuracy to below \(0.60\), and ablating the top-32 previous-token heads further drops accuracy to \({\sim}0.30\).

In contrast, ablating \(k\) random heads causes only minor degradation (accuracy remains at \({\sim}0.85\)), suggesting that induction and previous-token heads are particularly important for task performance.

While both head types are important for task performance, their ablations have qualitatively different effects on in-context learning dynamics. Ablating induction heads degrades performance, but accuracy continues to ascend as context length increases. In contrast, ablating previous-token heads causes accuracy to plateau entirely.

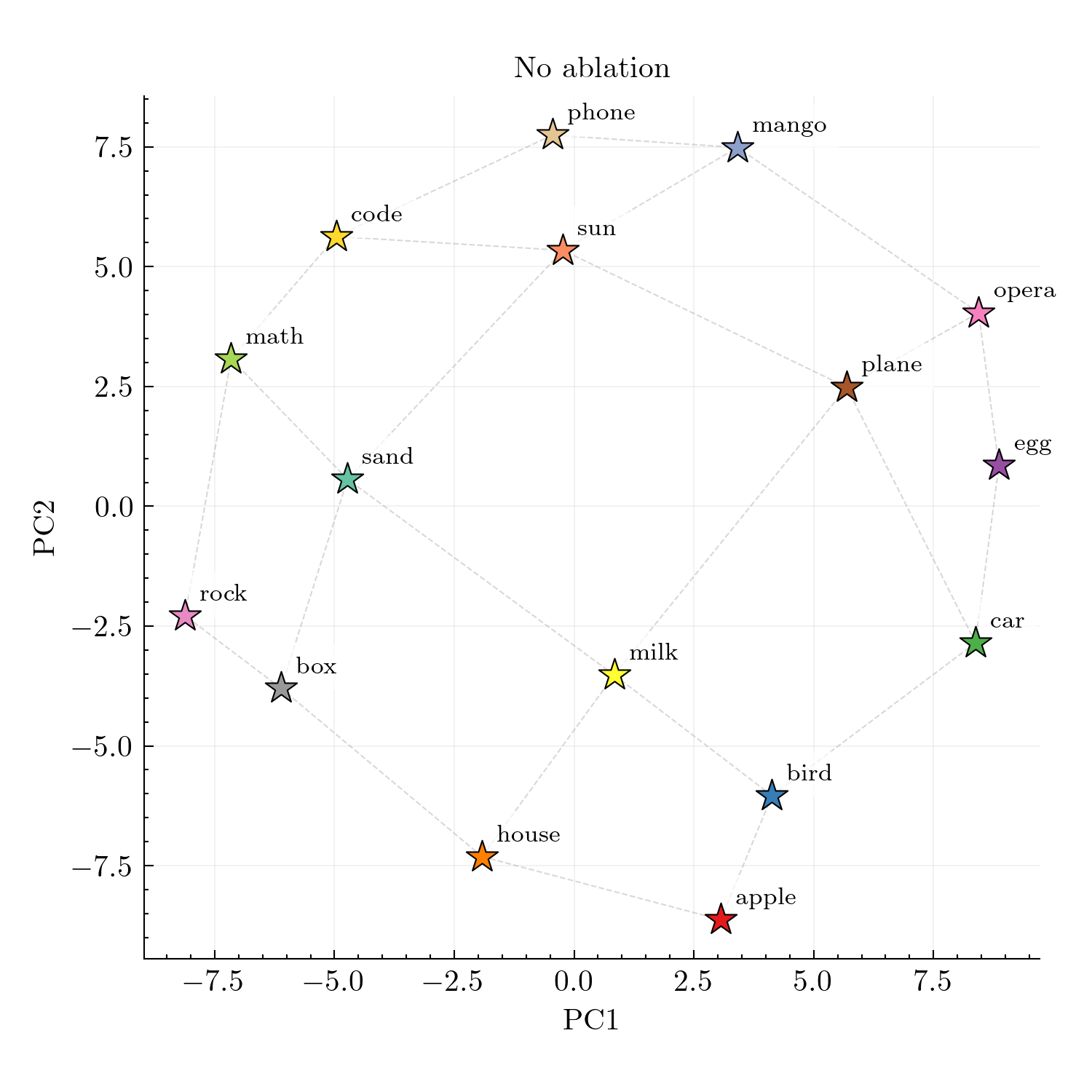

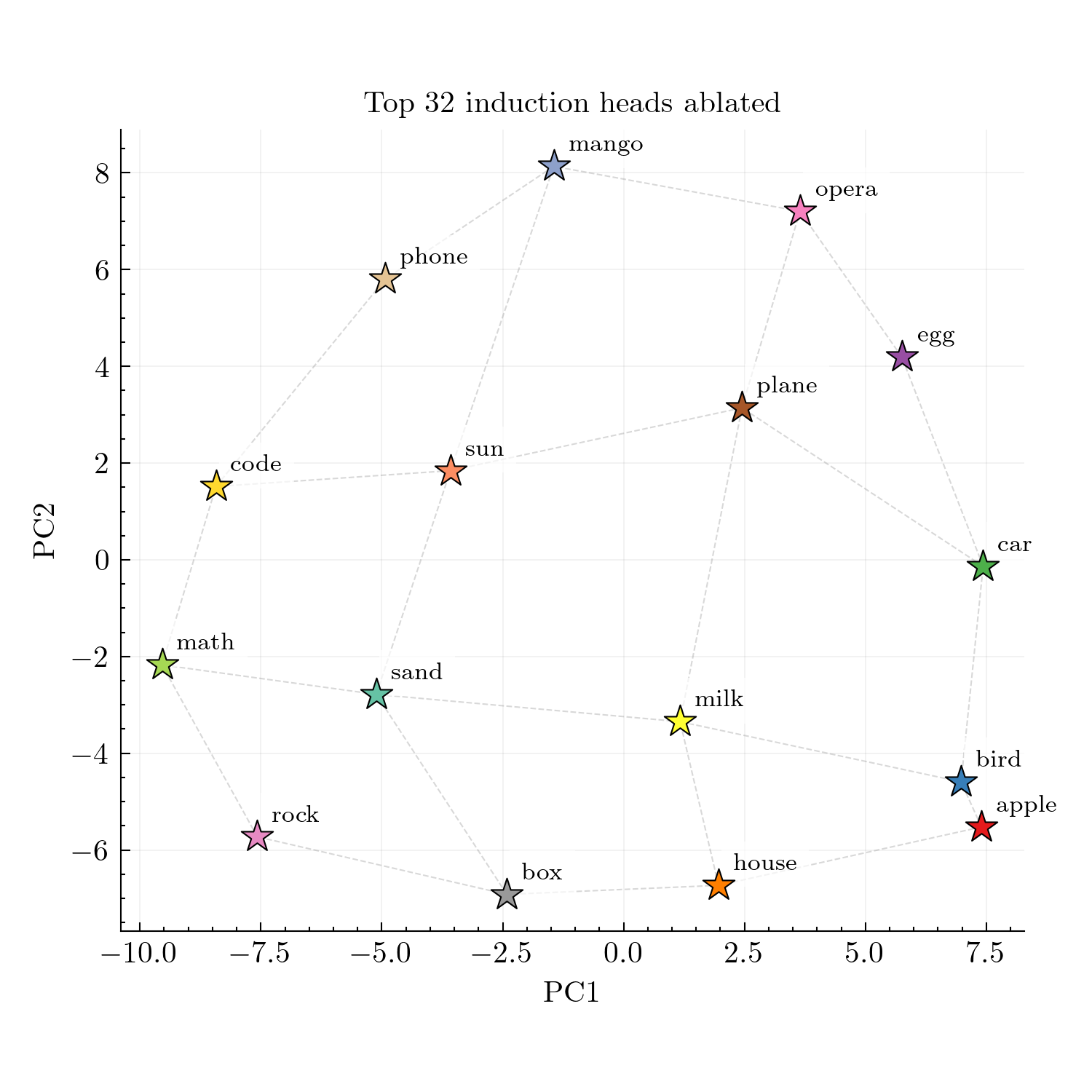

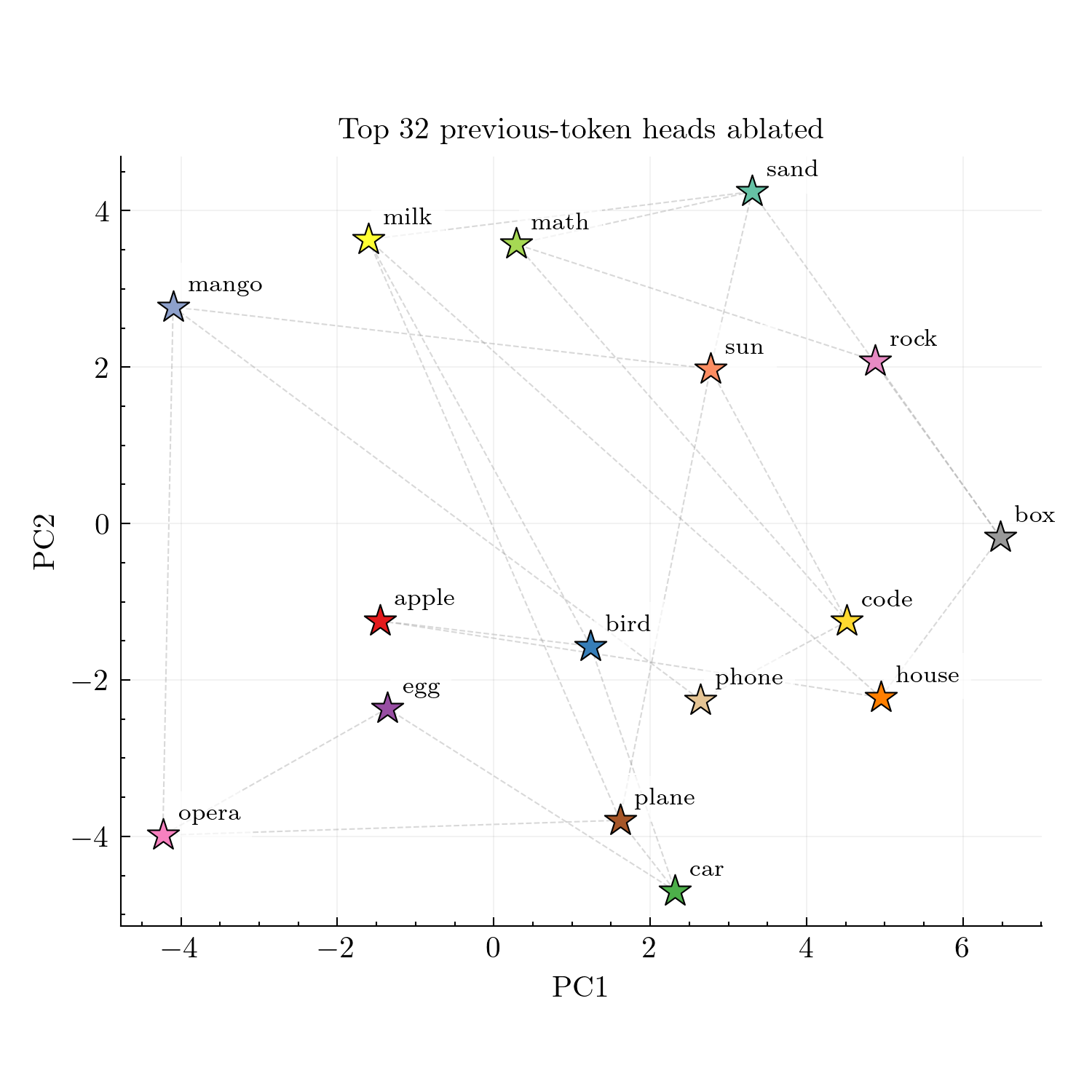

Ablating previous-token heads disrupts representation geometry. While both head types are important for accuracy, they seem to have different effects on representation geometry. Figure 4 shows that ablating induction heads preserves the grid-like geometric structure in PCA visualizations, as the 2D projections still resemble the spatial grid. However, ablating previous-token heads disrupts this structure, causing representations to lose their apparent spatial organization.

Previous-token mixing can account for representation geometry

In the previous section, we studied task performance and argued that the model achieves high task accuracy by using induction circuits. We now study the representation geometry, and attempt to explain the grid-like PCA plots. We will argue that this structure is plausibly a byproduct of “token mixing” performed by previous-token heads.

The neighbor-mixing hypothesis

Figure 4 shows that ablating previous-token heads disrupts the grid structure, while ablating induction heads preserves it. This suggests that previous-token heads are somehow necessary for the geometric organization. But what mechanism could link previous-token heads to spatial structure?

Previous-token heads mix information from position \(t-1\) into position \(t\). In a random walk, the token at \(t-1\) is always a grid neighbor of the token at \(t\). So each token’s representation gets mixed with a neighbor’s. When we compute the class mean for word \(c\), we average over all positions where \(c\) appears, each of which has been mixed with whichever neighbor preceded it. Over many occurrences, \(c\) is preceded by each of its neighbors roughly equally, so the class mean for \(c\) roughly encodes \(c\) plus an average of its neighbors.

To test whether neighbor-mixing alone can create the observed geometry, we construct a minimal toy model.

A toy model of previous-token mixing

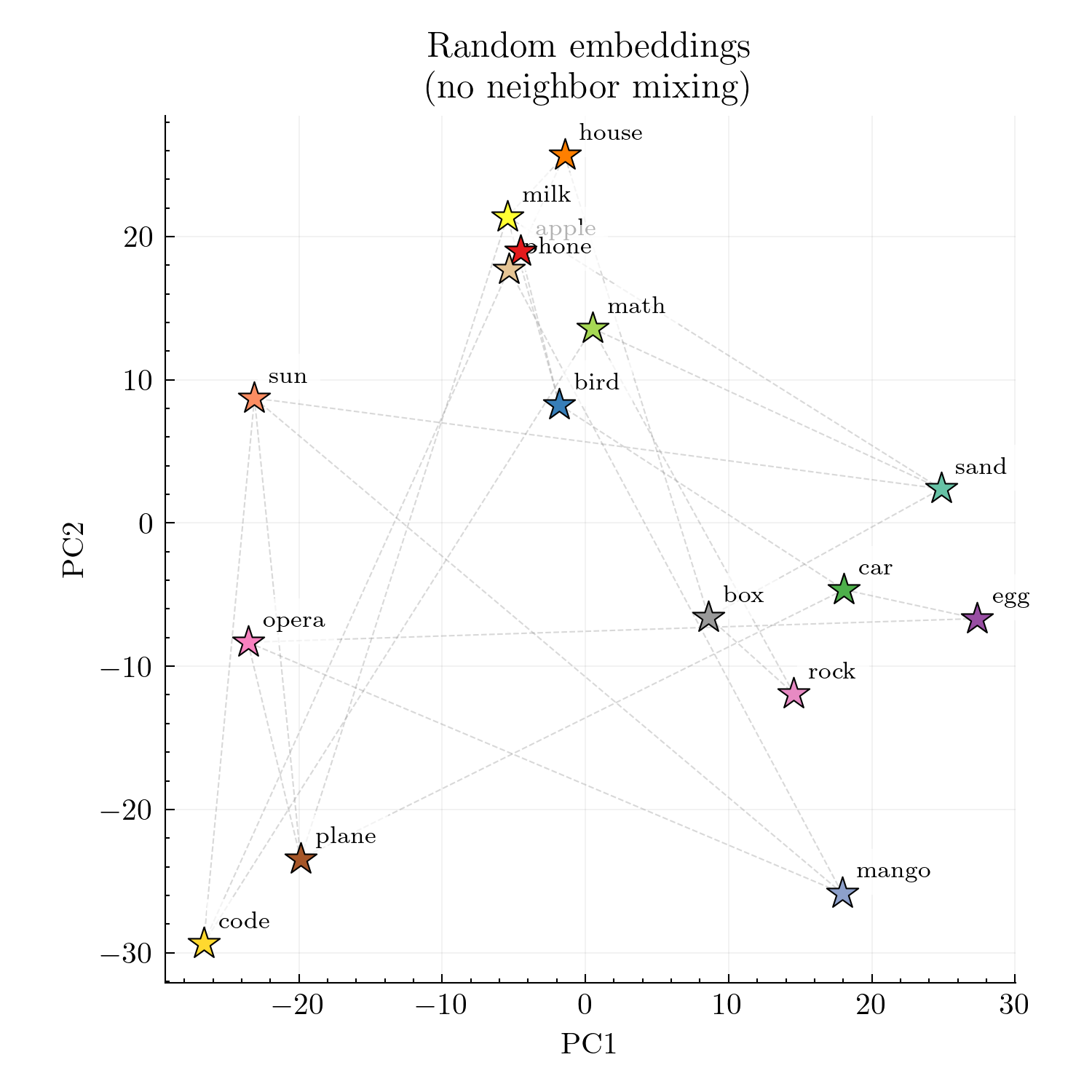

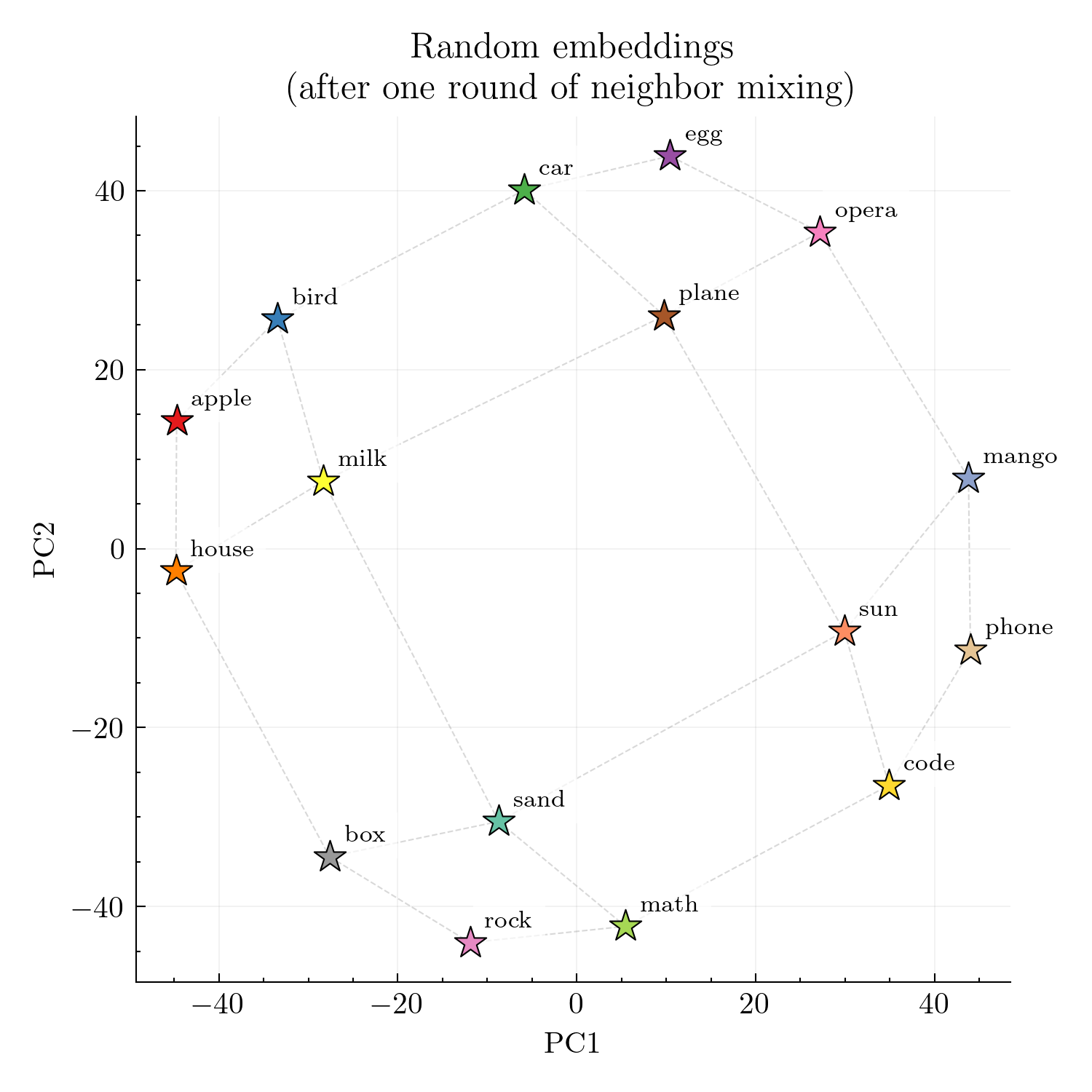

We work directly in a 16-token space indexed by the \(4{\times}4\) grid nodes. Each node \(i\) is assigned an initial random vector \(\mathbf{e}_i \in \mathbb{R}^{4096}\), sampled i.i.d. from \(\mathcal{N}(0,I)\). PCA of just the raw embeddings \(\{\mathbf{e}_i\}\) produces an essentially unstructured cloud: there is no visible trace of the grid.

We then apply a single, “neighbor mixing” step:

\[\tilde{\mathbf{e}}_i \;=\; \mathbf{e}_i \;+\; \frac{1}{|\mathcal{N}(i)|} \sum_{j \in \mathcal{N}(i)} \mathbf{e}_j,\]where \(\mathcal{N}(i)\) denotes the set of neighbors of node \(i\).

After this one step, PCA of the 16 mixed vectors \(\{\tilde{\mathbf{e}}_i\}\) recovers a clear \(4{\times}4\) grid: neighbors are close in the 2D projection and non-neighbors are far (Figure 5).

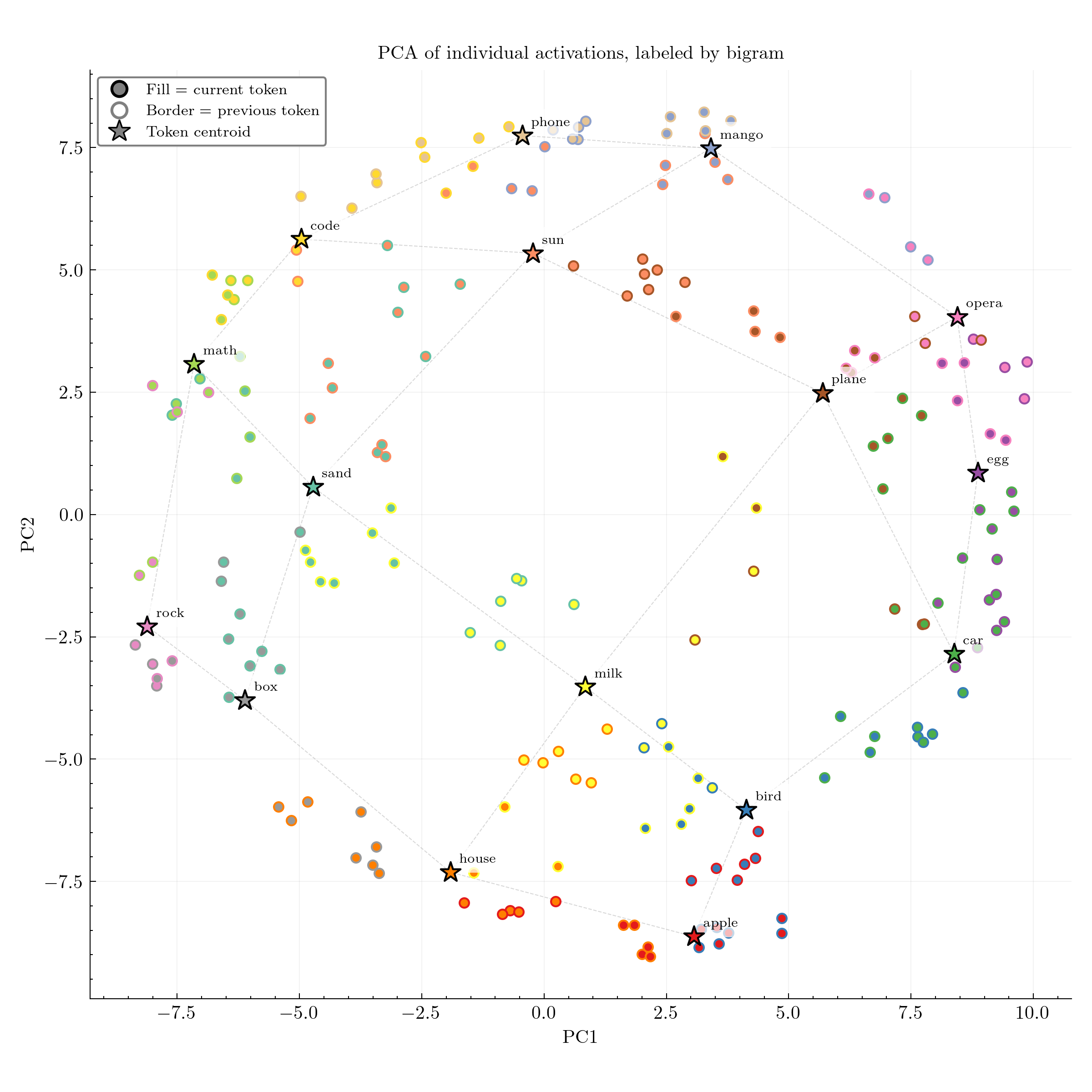

Evidence of neighbor mixing in individual model activations

The neighbor-mixing hypothesis makes a further prediction: individual activations should reflect not just the current token, but also its predecessor.

Instead of collapsing each word into a single class mean, we take the final 200 positions of a length-1400 random-walk sequence and project all 200 residual-stream vectors into the same 2D PCA space used for the class means. Each point now corresponds to a specific activation. For each point, we display bigram information: the center color indicates the current token \(w_t\) and the border color indicates the previous token \(w_{t-1}\).

Individual activations seem to bear the fingerprint of previous-token mixing (Figure 6). For example, activations at positions where the bigram plane math occurred tend to lie between the plane and math centroids, and positions where egg math occurred tend to lie between the egg and math centroids. We see similar “in-between” behavior for all other bigrams. This is what one would expect if the representation of \(w_t\) contains something like a mixture of “self” and “previous token” rather than depending only on the current word.

Limitations

Our experiments point toward a simple explanation: the model performs in-context graph tracing via induction circuits, and the grid-like PCA geometry is a byproduct of previous-token mixing. However, our understanding remains incomplete in important ways.

The toy model is a significant simplification. Our neighbor-mixing rule assumes that previous-token heads simply add the previous token’s activation \(\mathbf{h}_{t-1}\) to the current token’s activation \(\mathbf{h}_t\). In reality, attention heads apply value and output projections: they add \(W_O W_V \mathbf{h}_{t-1}\), where \(W_O W_V \in \mathbb{R}^{d_{\text{model}} \times d_{\text{model}}}\) is a low-rank matrix (rank \(\leq d_{\text{head}}\)). This projection could substantially transform the information being mixed, and notably cannot implement the identity mapping (with a single head, at least) since it is low-rank. We also model everything as a single mixing step on static vectors, whereas the actual network has many attention heads, MLP blocks, and multiple layers that repeatedly transform the residual stream.

Why does the grid structure emerge late in the sequence? Previous-token heads are active from the start of the sequence, yet the grid-like PCA structure only becomes clearly visible after many tokens have been processed. If neighbor-mixing were the whole story, we might expect the geometric structure to appear earlier. Yang et al.

Limited to the in-context grid tracing task. Our analysis is limited to the \(4{\times}4\) grid random walk task from Park et al., where bigram copying suffices for next-token prediction. Lepori et al., 2026

Conclusion

We have argued that the phenomena observed by Park et al.

These findings suggest that the “representation reorganization” observed by Park et al. may not reflect a sophisticated in-context learning strategy, but rather an artifact of previous-token head behavior.

Appendix A

A: Code availability

All code and experiments are available at github.com/andyrdt/iclr_induction.

Appendix B

B: Head detection methodology

We identify induction heads and previous-token heads using attention pattern analysis on synthetic repeated sequences, following the approach of Olsson et al., 2022

B.1: Test sequence construction

We construct a test sequence by repeating a random sequence of tokens:

For layer

B.2: Induction score

Induction heads exhibit a characteristic pattern: for a repeated token at position

We compute the induction score as the average attention along this offset-31 diagonal:

This measures how strongly the head attends to "the token that followed the previous occurrence of the current token."

B.3: Previous-token score

Previous-token heads implement a simpler pattern: at each position, they attend primarily to the immediately preceding token.

We compute the previous-token score as the average attention along the offset-1 diagonal:

B.4: Head ranking

We average scores across the batch of 32 sequences, then rank all 1024 heads (32 layers

PLACEHOLDER FOR ACADEMIC ATTRIBUTION

BibTeX citation

PLACEHOLDER FOR BIBTEX