Dissecting Non-Determinism in Large Language Models

As Large Language Models (LLMs) evolve into the backbone of complex decision-making systems, their inherent non-deterministic nature poses a significant threat to the validity of experimental results. This blog explores the impact of stochasticity, prompt brittleness, and LLM-as-a-Judge during both response generation and evaluation. We conclude that understanding these dynamics is essential to prevent misleading conclusions, advocating for consistency-oriented practices that treat non-determinism as a critical variable in rigorous experimentation.

In scientific research, reproducibility is the currency. If an experiment cannot be replicated, its validity crumbles. However, in the era of Large Language Models (LLMs), we face a fundamental contradiction: we are attempting to build deterministic science upon inherently stochastic tools. So, how do we ensure that our results are not merely the product of chance?

To address this, we must first dissect the nature of the problem. In this post, we will begin by exploring the non-deterministic nature of LLMs. We will see that this variability is not a “bug,” but rather a “feature” essential for simulating creativity and human fluency.

However, even fully deterministic decoding does not shield us from Prompt Brittleness, a phenomenon where a minuscule change in the input can drastically alter the output probability distribution. This makes prompt engineering notoriously fragile, as semantically insignificant perturbations in the prompt lead to significant variations in performance.

Finally, we will address the LLM-as-a-Judge paradigm. If the judge itself varies due to its non-deterministic nature, can we trust its verdict? We will analyze how this variance affects evaluation metrics and the dangerous implications of using these models as arbitrary evaluators.

We conclude that understanding these dynamics in LLM experimentation can help us avoid misleading conclusions and ensure the integrity of our evaluation sets. In light of our findings, we argue for adopting consistency-oriented practices that recognize non-determinism and high sensitivity as critical variables that must be managed in any rigorous experiment.

Non-Determinism as a Crisis of Reproducibility

The bedrock of any scientific claim or rigorous engineering system is reproducibility.

There is a fundamental tension between the perceptual quality of text and the computational stability required for rigorous software engineering. While intuition suggests that a model should always select the most probable sequence of words to maximize quality, empirical evidence demonstrates that deterministic decoding strategies lead to severe text degradation.

The Probabilistic Engine: Why Maximization Fails

Although LLM text generation is formally based on left-to-right probability decomposition, the deterministic search for the most probable sequence proves counterproductive. This approach leads to “neural text degeneration,” a phenomenon where models produce generic, incoherent content or get stuck in infinite loops instead of natural discourse.

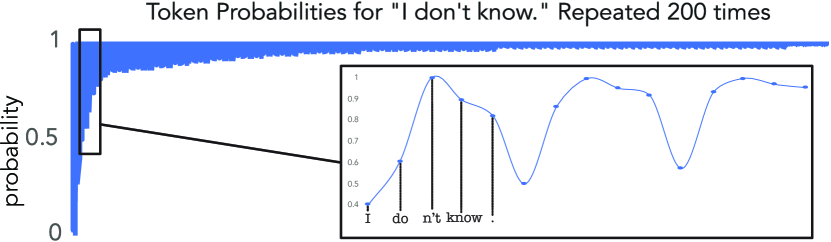

The reason for this failure is not a search error, but an intrinsic property of human language: natural text rarely remains in a “high probability zone” for multiple consecutive steps, instead fluctuating towards lower probability but higher information tokens, as illustrated in Figure 2. This occurs because humans optimize their speech to be informative, actively avoiding stating the obvious, whereas a model that consistently selects the most probable token favors the “lowest common denominator”.

Therefore, to generate text indistinguishable from humans, it is imperative to reintroduce variance through stochastic sampling strategies, accepting that two runs on the same input may differ: $Output_1(Input_A) \neq Output_2(Input_A)$, where the subscript indexes the run.

The Role of Sampling Strategies

The selection of the next token, despite a clear probability distribution computed by the LLM, is determined by the chosen decoding algorithm. These strategies control the balance between coherence and creativity.

To introduce human-like diversity, Stochastic Decoding Methods are employed. Pure Sampling selects the next token directly from the full probability distribution, but this can be refined using Sampling with Temperature $(T)$, which reshapes the distribution by scaling the logits before the Softmax function.
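To make the mechanics concrete, the following is a minimal sketch of temperature scaling followed by nucleus (top-p) filtering, written in plain Python. The function names, the toy logits, and the four-token vocabulary are illustrative assumptions, not taken from any particular model or library.

```python
import math
import random

def softmax_with_temperature(logits, temperature=1.0):
    """Divide logits by the temperature, then apply a numerically stable softmax."""
    if temperature <= 0:
        raise ValueError("temperature must be > 0; use argmax for greedy decoding")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtracting the max avoids overflow in exp
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def nucleus_filter(probs, p=0.95):
    """Keep the smallest set of most-probable tokens whose cumulative mass reaches p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= p:
            break
    mass = sum(probs[i] for i in kept)
    return {i: probs[i] / mass for i in kept}  # renormalized over the nucleus

def sample_token(logits, temperature=1.0, top_p=1.0, rng=random):
    """Sample one token index using temperature scaling and top-p filtering."""
    probs = softmax_with_temperature(logits, temperature)
    nucleus = nucleus_filter(probs, top_p)
    tokens = list(nucleus)
    weights = [nucleus[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

logits = [2.0, 1.0, 0.5, -1.0]  # hypothetical scores for a 4-token vocabulary
print(softmax_with_temperature(logits, 0.5))  # sharper distribution
print(softmax_with_temperature(logits, 2.0))  # flatter distribution
print(sample_token(logits, temperature=0.8, top_p=0.95, rng=random.Random(0)))
```

Lower temperatures concentrate probability mass on the top token, while higher temperatures flatten the distribution; the nucleus filter then discards the unreliable low-probability tail before sampling.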

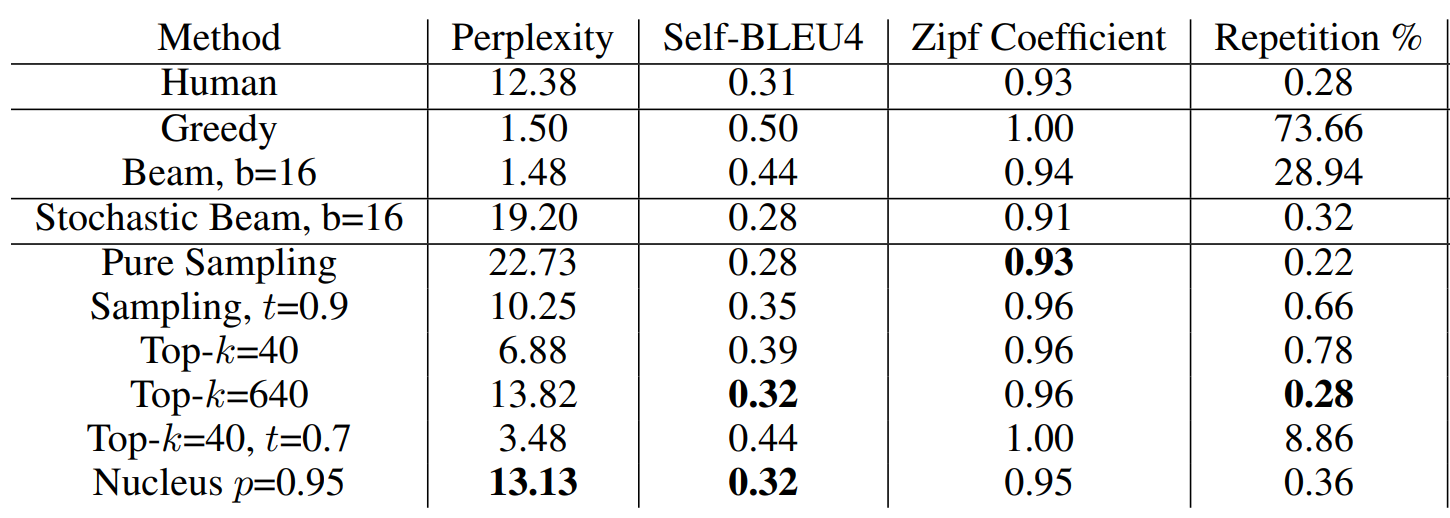

According to Table 1, Nucleus Sampling $(p=0.95)$ is the best overall decoding strategy. It achieves a perplexity score of $13.13$, which is remarkably close to that of human text $(12.38)$. In stark contrast, maximization-based methods like Greedy and Beam Search exhibit unnaturally low perplexity (around 1.50) and suffer from significantly higher repetition rates.
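For readers unfamiliar with the metric, perplexity is the exponential of the average negative log-probability the model assigns to each token. The sketch below uses made-up per-token probabilities (not values from Table 1) to illustrate why always choosing near-certain tokens drives perplexity unnaturally low.

```python
import math

def perplexity(token_probs):
    """exp of the mean negative log-probability assigned to each token."""
    nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(nll)

# Hypothetical per-token probabilities, for illustration only.
confident = [0.9, 0.8, 0.95, 0.85]  # text built from near-certain tokens
varied = [0.3, 0.05, 0.6, 0.1]      # text that often uses less predictable tokens

print(perplexity(confident))
print(perplexity(varied))
```

A sequence in which every token has probability 0.5 has a perplexity of 2, which can be read as the model being as uncertain as a fair coin flip at each step.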

The Myth of Temperature Zero

A prevailing misconception in the engineering of LLMs is that non-determinism is merely a configurable setting. The logic assumes that if the sampling temperature is set to 0.0 (utilizing greedy sampling), the model will purely select the most probable token, thereby rendering the output fully deterministic. However, as noted by Ouyang et al. (2025)

“is contrary to many people’s belief… that setting the temperature to 0 can make ChatGPT deterministic… because… the model applies greedy sampling which should indicate full determinism”.

Research into code generation using ChatGPT challenges the assumption that setting the temperature to 0 guarantees deterministic results. While decreasing the temperature reduces randomness compared to the default setting ($T=1$), empirical evidence demonstrates that it does not eliminate it entirely. Even with the temperature set to 0, a significant ratio of problems persists where the model produces inconsistent results across identical requests.
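A simple way to observe this kind of inconsistency is to send the same request several times and count how many prompts yield more than one distinct output. The sketch below assumes a hypothetical `generate(prompt)` callable that wraps whatever model API is in use; the `flaky_generate` function is a toy stand-in, not a real model.

```python
import itertools

def inconsistency_ratio(prompts, generate, n_runs=5):
    """Fraction of prompts whose n_runs outputs are not all identical."""
    inconsistent = 0
    for prompt in prompts:
        outputs = [generate(prompt) for _ in range(n_runs)]
        if len(set(outputs)) > 1:
            inconsistent += 1
    return inconsistent / len(prompts)

# Toy stand-in for a model: stable on short prompts, alternating on long ones.
_calls = itertools.count()

def flaky_generate(prompt):
    if len(prompt) < 10:
        return "stable answer"
    return f"answer variant {next(_calls) % 2}"

ratio = inconsistency_ratio(["short", "a much longer prompt"], flaky_generate, n_runs=4)
print(f"inconsistent prompts: {ratio:.0%}")
```

Exact string equality is the strictest possible criterion; for code generation one might instead compare test outcomes, which is a design choice left open here.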

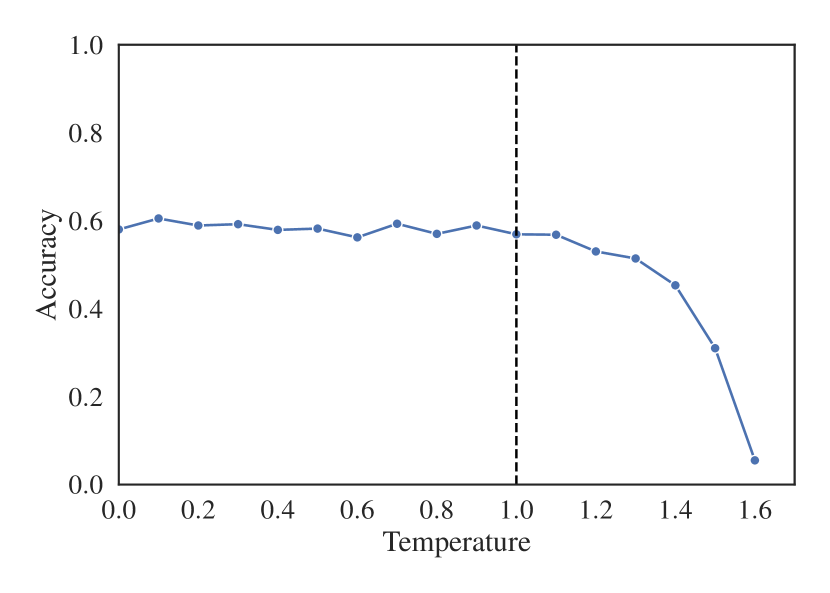

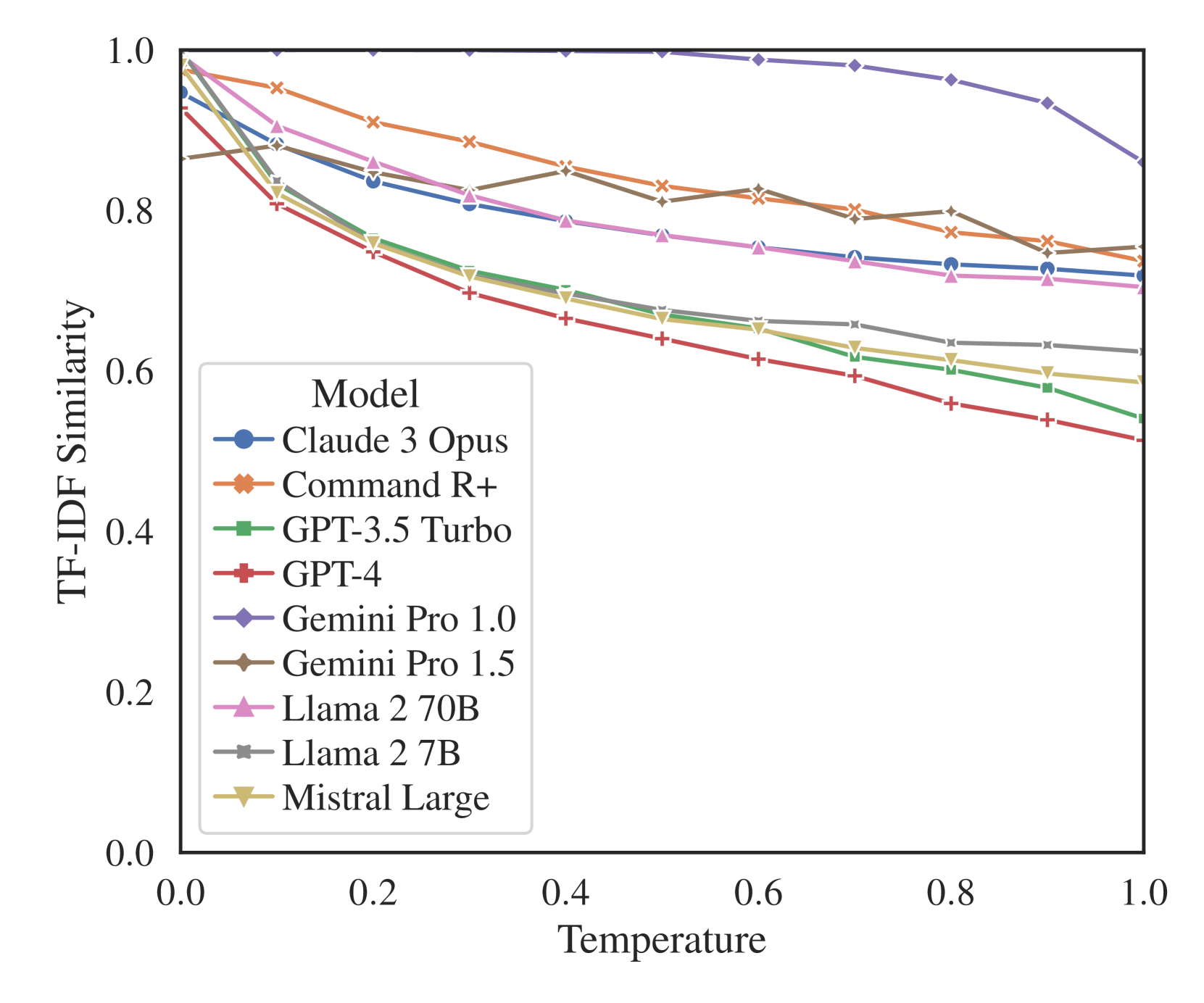

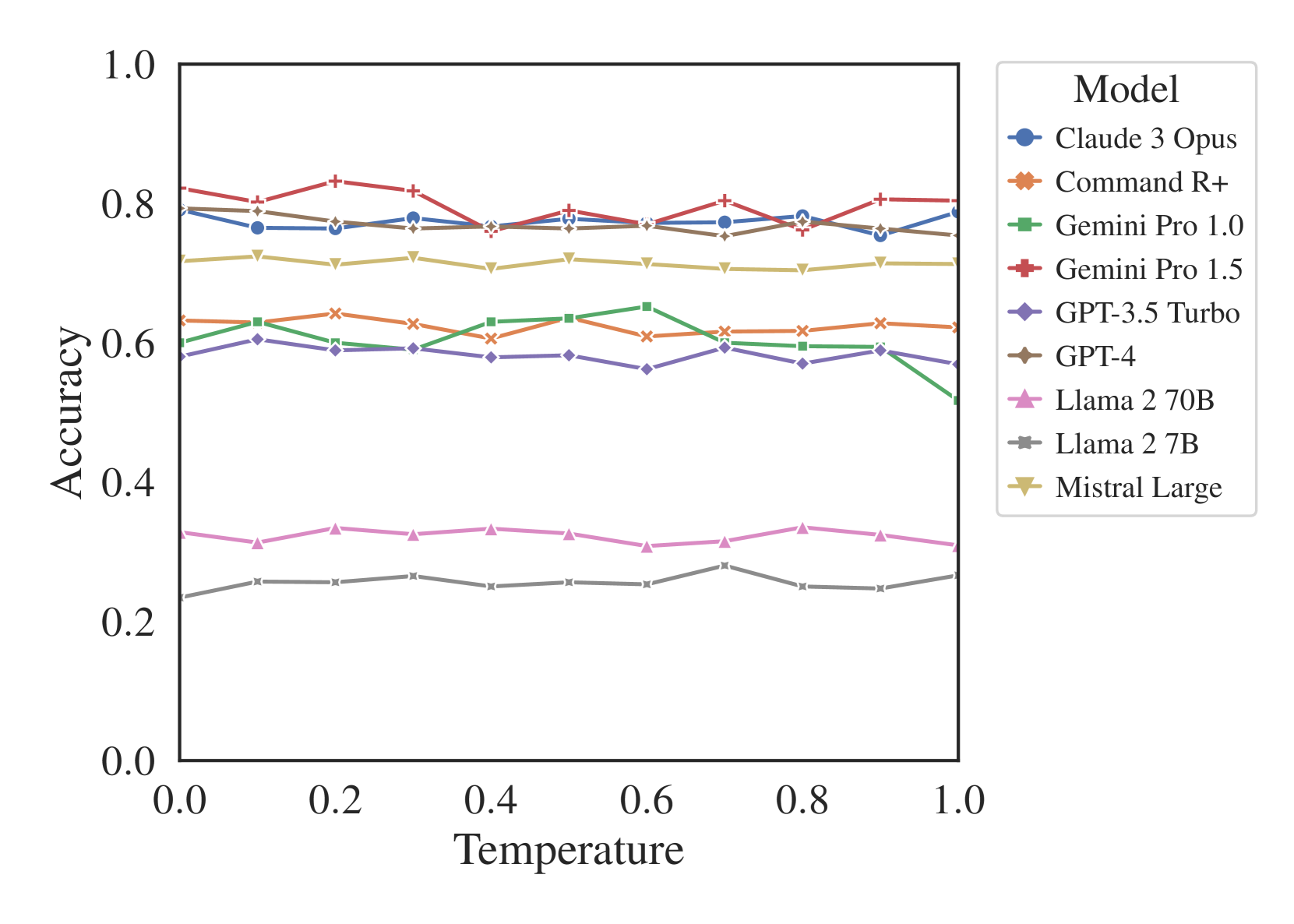

Within this stability range (0.0 - 1.0), an interesting trade-off between precision and creativity emerges. Although accuracy remains flat, text similarity metrics decrease as temperature rises (Figure 4 (a)), confirming that higher temperatures produce more diverse outputs without necessarily compromising the correctness of the answer. This stability trend generalizes across most major models, including GPT-4, Claude 3 Opus, and Gemini Pro, as shown in Figure 4 (b).

Finally, the impact of temperature is strongly mediated by the prompting strategy used. In general problem solving, accuracy stability remains constant regardless of whether Chain-of-Thought (CoT), domain expertise, or self-recitation is used, although CoT generally outperforms others in absolute accuracy.

The Root Cause: Infrastructure-Level Chaos

Even when the temperature is set to zero $(T=0)$, LLM outputs often remain non-deterministic. Research into deep learning infrastructure reveals that this instability stems from the collision between physical hardware limitations and software optimization strategies. According to Riach et al. (2019), one source is GPU kernels in which many parallel threads accumulate floating-point values into the same memory location through atomic operations such as atomicAdd. Since the order in which these threads finish is random (a race condition), the sequence of summation changes from run to run, causing rounding errors to accumulate differently and leading to bitwise differences in the final result.
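The underlying arithmetic issue can be reproduced on a CPU without any parallelism: floating-point addition is not associative, so the same numbers summed in a different order can give a different result. The sketch below is only an illustration of that property; the `sum_in_order` helper and the example values are chosen for this post.

```python
def sum_in_order(values, order):
    """Accumulate values left to right following the given index order."""
    total = 0.0
    for i in order:
        total += values[i]
    return total

# Regrouping three ordinary decimals already changes the last bit.
print((0.1 + 0.2) + 0.3 == 0.1 + (0.2 + 0.3))  # False

# With mixed magnitudes, the small terms can be swallowed entirely.
values = [1e16, 1.0, -1e16, 1.0]
print(sum_in_order(values, [0, 1, 2, 3]))  # 1.0: the first 1.0 is lost when added to 1e16
print(sum_in_order(values, [0, 2, 1, 3]))  # 2.0: the large terms cancel before the small ones are added
```

On a GPU, the summation order plays the role of the `order` argument and is decided by thread scheduling rather than by the programmer.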

However, while floating-point instability provides the mathematical potential for error, Horace He et al. (2025)

The Cost of Truth

We can address these inconsistencies, but doing so imposes a significant “Determinism Tax.” Achieving bitwise reproducibility requires ensuring that every operation occurs in a fixed order, yet implementing these fixes places tangible penalties on system performance and complexity. According to He et al. (2025)

To pay this tax, developers must enforce strict controls across the entire software stack. This begins with fixing random seeds across all libraries, including Python, NumPy, and PyTorch or TensorFlow, to ensure that weights and stochastic operations initialize identically. Beyond initialization, we must force the hardware to avoid non-deterministic optimizations. Measures such as setting TF_CUDNN_DETERMINISTIC=true or enabling torch.use_deterministic_algorithms(True) are necessary to disable faster, non-deterministic atomic operations and specific convolution algorithms, trading speed for consistency.
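As a rough illustration of these controls, the sketch below gathers them into a single helper. The name `set_global_seeds` is hypothetical, NumPy and PyTorch are treated as optional and skipped when not installed, and the `CUBLAS_WORKSPACE_CONFIG` value is an assumption based on PyTorch's reproducibility notes rather than something stated above. It is a starting point, not a guarantee of bitwise reproducibility.

```python
import importlib
import os
import random

def set_global_seeds(seed=0):
    """Seed the available RNGs and request deterministic kernels where supported."""
    # Set environment flags early, before the frameworks start using the GPU.
    os.environ["TF_CUDNN_DETERMINISTIC"] = "true"
    # Assumption: PyTorch asks for this setting when deterministic cuBLAS is required.
    os.environ.setdefault("CUBLAS_WORKSPACE_CONFIG", ":4096:8")

    random.seed(seed)
    seeded = ["random"]

    try:
        np = importlib.import_module("numpy")
        np.random.seed(seed)
        seeded.append("numpy")
    except ImportError:
        pass  # NumPy not installed; nothing to seed

    try:
        torch = importlib.import_module("torch")
        torch.manual_seed(seed)
        # Raises an error later if an operation has no deterministic implementation.
        torch.use_deterministic_algorithms(True)
        seeded.append("torch")
    except ImportError:
        pass  # PyTorch not installed; nothing to seed

    return seeded

print(set_global_seeds(42))
```

Calling the helper once at program start, before any model is built, keeps the seeding in one auditable place.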

This operational overhead forces a difficult question: Is reproducibility a requirement or a luxury? For creative assistants, the cost is likely unjustified, as variance often functions as a feature that emulates human-like creativity. However, for scientific research or safety-critical applications, the current state of “probabilistic reliability” is unacceptable. Riach et al. (2019)

The Brittleness of Prompt Engineering

Prompt Brittleness refers to the phenomenon where minor, apparently marginal changes in the format or structure of an LLM input prompt lead to variation, sometimes drastic, in the resulting performance.

For researchers conducting comparative evaluations, this fragility creates a crisis of reproducibility. If a system works for one test case but fails for a nearly identical one due to a minor prompt variation, the system is effectively non-deterministic from the user’s perspective. Not even large, instruction-tuned models escape this sensitivity to “spurious” features.

Testing which format or ordering yields the best performance is not an easy task: considering the full space of prompt formats makes the problem intractable, as computational cost increases linearly with the number of possible formats.

Consequently, “brittleness” is not a single issue; it manifests along several distinct dimensions of the input context. We highlight three of these dimensions below:

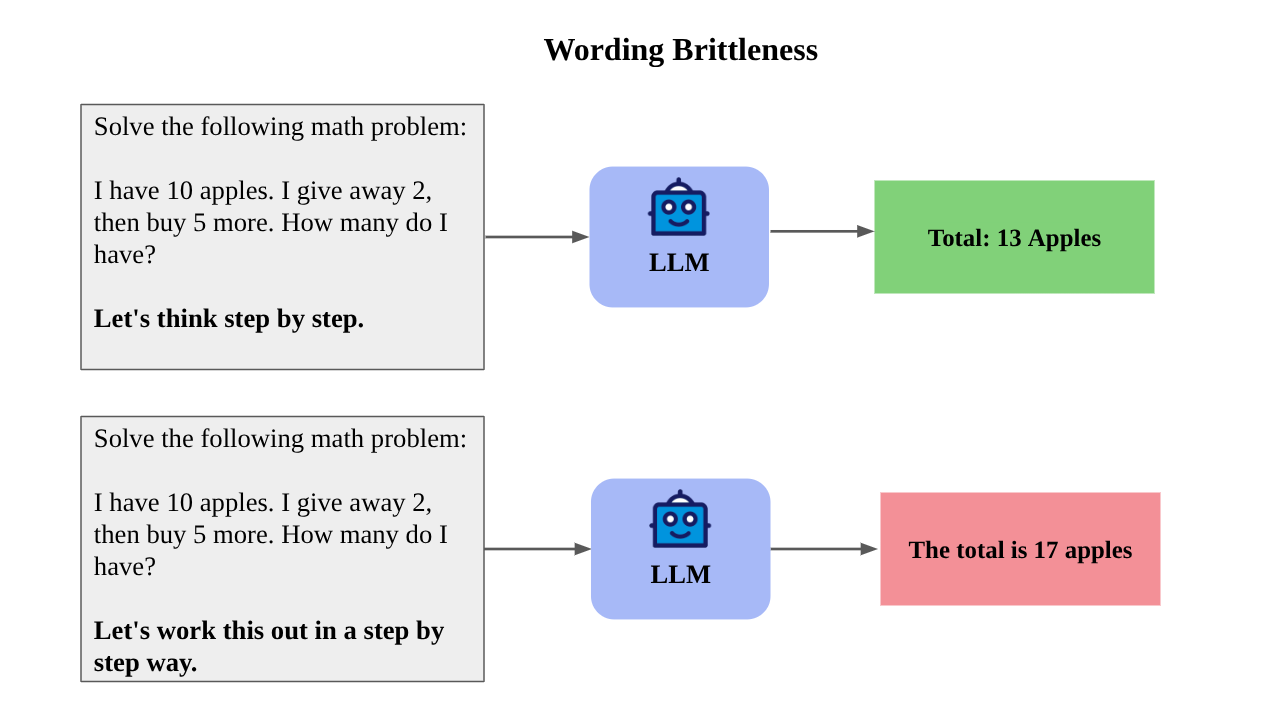

Wording Brittleness

One way brittleness manifests is through changes in the specific phrasing of instructions, such as prefixes and suffixes. Small semantic changes (e.g., “Let’s think step by step” vs. “Let’s work this out in a step by step way”) can trigger significantly different reasoning paths and performance outcomes.

This can be framed as an optimisation problem where human intuition is often insufficient to find these “magic words”, and the engineer in charge of writing the prompt is trapped in a “prompt vibing” cage. This also has implications for the end user: a system might work perfectly for one user but fail for another who simply types differently, even if their intent is identical.

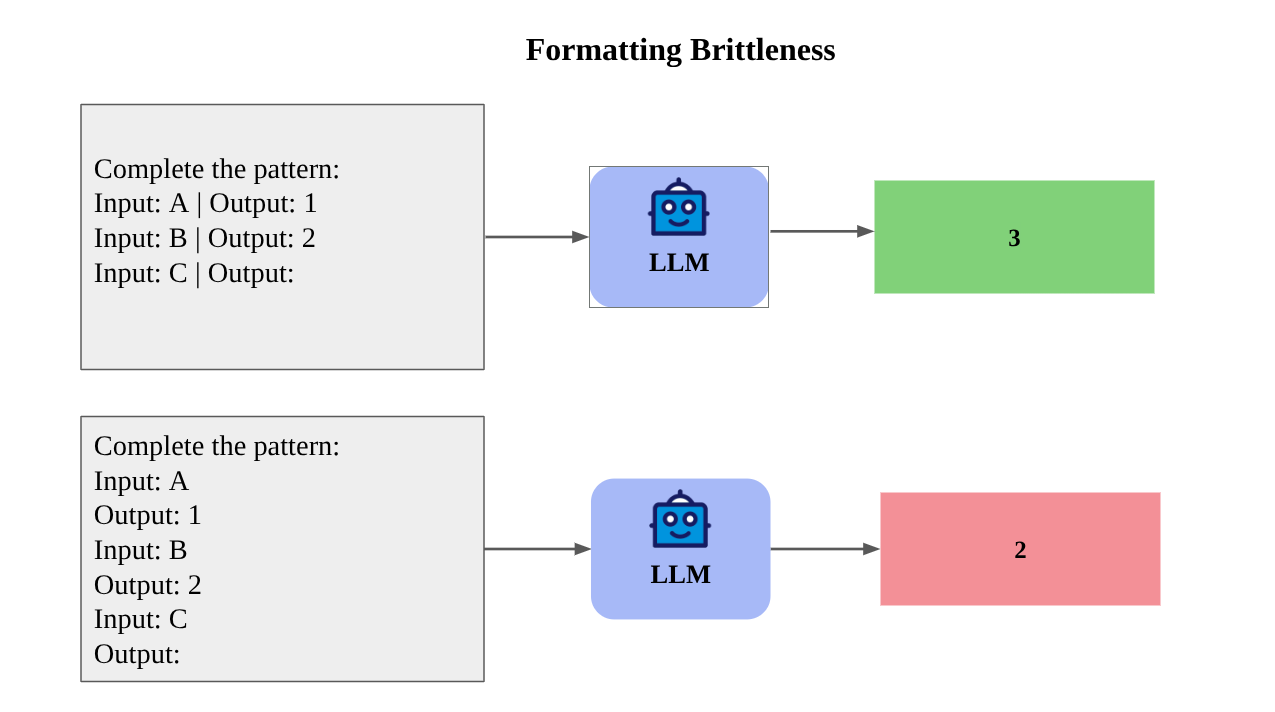

Formatting Brittleness

Models are also sensitive to non-semantic modifications. Spurious features like whitespace, capitalisation, or the choice of brackets (e.g., [Input] vs. Input:) can cause accuracy to fluctuate by up to 76%.
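To get a feel for how quickly the space of formats grows, the sketch below enumerates semantically equivalent renderings of the same prompt by varying only the separator, the casing of field names, and the way fields are joined. The specific options and the `format_variants` helper are illustrative assumptions, not the grammar used in any cited study.

```python
from itertools import product

def format_variants(
    fields,
    separators=(": ", " - ", ":\n"),
    casings=(str.title, str.upper, str.lower),
    joiners=("\n", " || "),
):
    """Render the same (name, value) fields under every combination of format choices."""
    variants = []
    for sep, case, joiner in product(separators, casings, joiners):
        parts = [f"{case(name)}{sep}{value}" for name, value in fields]
        variants.append(joiner.join(parts))
    return variants

fields = [("question", "What is 2 + 2?"), ("answer", "")]
variants = format_variants(fields)
print(len(variants))  # 3 separators x 3 casings x 2 joiners = 18
print(repr(variants[0]))
print(repr(variants[-1]))
```

Each extra formatting choice multiplies the number of variants, and every variant must be evaluated on the full test set, which is why exhaustive search over formats quickly becomes impractical.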

The authors of

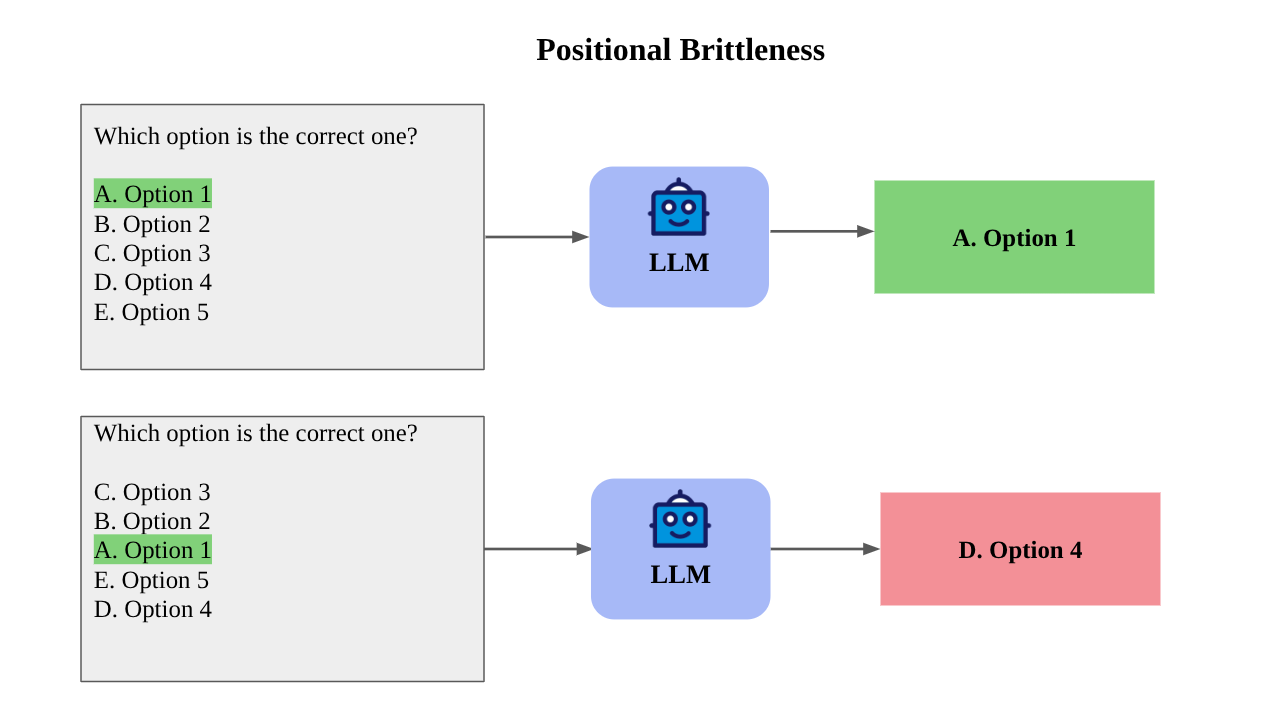

Positional Brittleness

Models are sensitive to where information is located. Liu et al. (2023)

This study identified the “Lost in the Middle” phenomenon: models exhibit a U-shaped performance curve, favouring information at the very beginning (primacy) or very end (recency) of the context while ignoring equally relevant information in the middle. This indicates a failure to treat the context window as a uniformly accessible memory space. As shown by Pezeshkpour and Hruschka (2024)

Consequently, retrieving more documents (e.g., in a RAG system) can harm performance if the relevant answer gets pushed to the middle of the context window. System reliability can therefore decrease as more data is provided, which is counter-intuitive for traditional software systems.
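A positional probe of this kind can be sketched by placing the one relevant document at every possible position among distractors and recording whether the answer is still found. Everything in the block is illustrative: `ask_model` is a hypothetical callable taking a context and a question, and `edge_only_model` is a toy that deliberately reads only the first and last documents to mimic primacy and recency effects.

```python
def contexts_with_gold_at_each_position(gold, distractors):
    """Yield (position, context) pairs with the gold document inserted at each slot."""
    for pos in range(len(distractors) + 1):
        docs = distractors[:pos] + [gold] + distractors[pos:]
        context = "\n\n".join(f"Document [{i + 1}]: {d}" for i, d in enumerate(docs))
        yield pos, context

def accuracy_by_position(question, answer, gold, distractors, ask_model):
    """Map each gold position to whether the model's reply contains the answer."""
    results = {}
    for pos, context in contexts_with_gold_at_each_position(gold, distractors):
        prediction = ask_model(context, question)
        results[pos] = answer.lower() in prediction.lower()
    return results

def edge_only_model(context, question):
    """Toy model that only 'reads' the first and last documents."""
    docs = context.split("\n\n")
    return docs[0] + " " + docs[-1]

gold = "The capital of France is Paris."
distractors = [
    "Bananas are rich in potassium.",
    "The Nile flows through Egypt.",
    "Copper conducts electricity well.",
    "Honey does not spoil easily.",
]
print(accuracy_by_position("What is the capital of France?", "Paris",
                           gold, distractors, edge_only_model))
```

With a real model, averaging these per-position results over many questions would trace out the performance curve described above.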

How It Can Be Measured

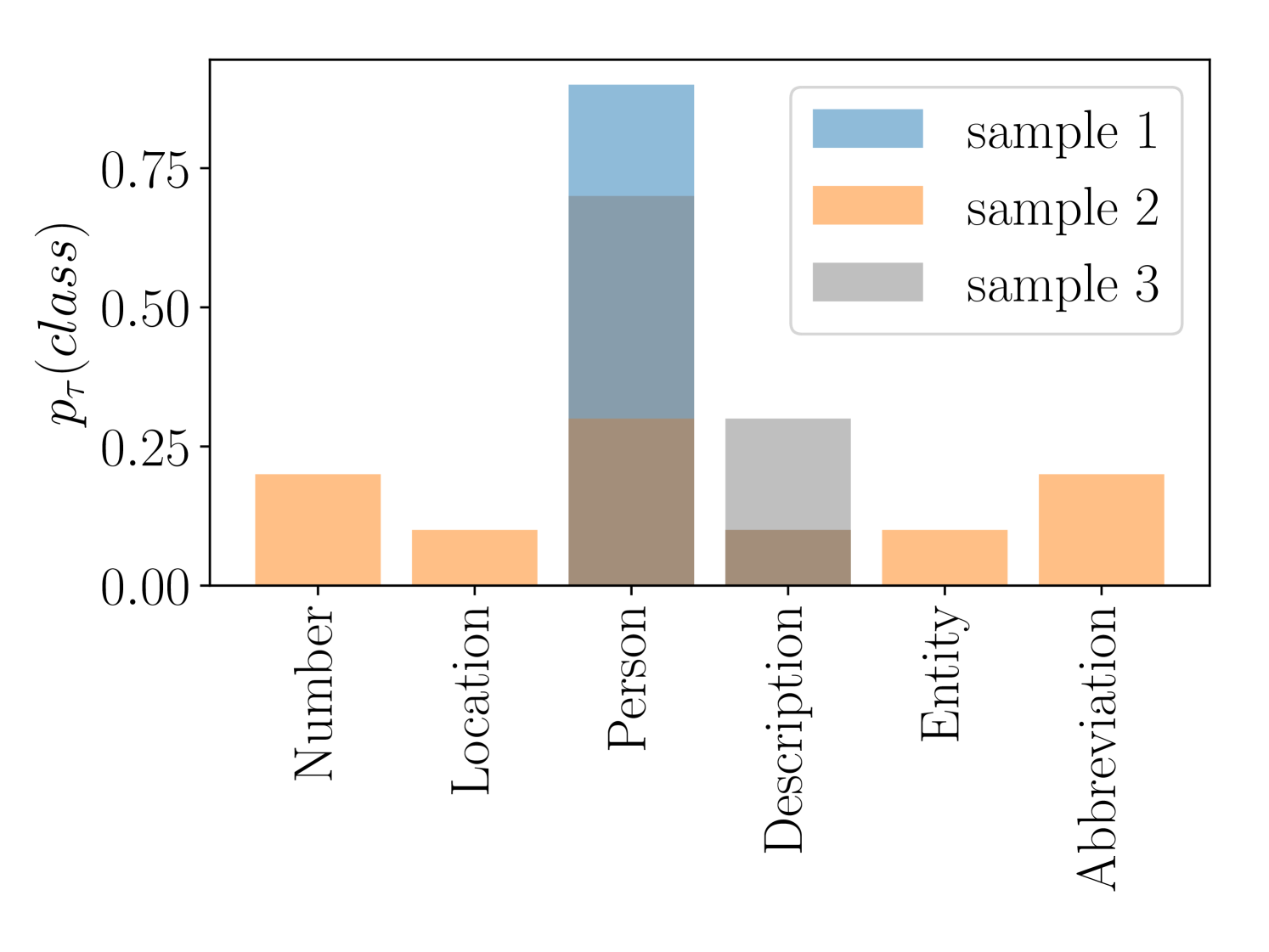

A researcher might assume a model is reliable based on its high performance on standard benchmarks, believing the results generalize, only to discover that its performance degrades significantly when evaluated on prompts that are “out-of-distribution”. This poses the question, “how can we quantify the sensitivity of an LLM to variations of the prompt?” To quantify style-induced Prompt Brittleness, Ngweta et al. (2025)

Although Spread can be used with metrics other than accuracy, there are works that focus on other types of measurement to quantify reliability. Errica et al. (2024)

- Sensitivity: This metric measures how much the model’s predictions change when the prompt is rephrased. A key feature is that it does not require the “correct” answers (ground-truth labels), making it useful for real-world debugging.

- Consistency: This metric measures how much the model’s predictions vary across different items that all belong to the same class when the prompt is rephrased.
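The two metrics can be approximated with a few lines of code. The sketch below is a simplified reading of the descriptions above, not the authors' exact formulation: it assumes sensitivity is the average entropy of an item's predictions across rephrasings, and consistency is one minus the average total variation distance between the prediction distributions of items sharing the same class. All names and the toy data are hypothetical.

```python
import math
from collections import Counter
from itertools import combinations

def label_distribution(labels, classes):
    """Empirical distribution of predicted labels over a fixed class list."""
    counts = Counter(labels)
    return [counts[c] / len(labels) for c in classes]

def sensitivity(predictions, classes):
    """Mean entropy (nats) of each item's predictions across rephrasings; 0 means stable."""
    entropies = []
    for labels in predictions.values():
        dist = label_distribution(labels, classes)
        entropies.append(-sum(p * math.log(p) for p in dist if p > 0))
    return sum(entropies) / len(entropies)

def consistency(predictions, true_class, classes):
    """1 minus the mean total variation distance between same-class items."""
    by_class = {}
    for item, labels in predictions.items():
        by_class.setdefault(true_class[item], []).append(label_distribution(labels, classes))
    distances = [
        0.5 * sum(abs(a - b) for a, b in zip(p, q))
        for dists in by_class.values()
        for p, q in combinations(dists, 2)
    ]
    return 1.0 - sum(distances) / len(distances) if distances else 1.0

classes = ["num", "date", "loc"]
# Predicted label for each item under four rephrasings of the same prompt (toy data).
predictions = {
    "q1": ["num", "num", "num", "num"],
    "q2": ["num", "date", "num", "date"],
    "q3": ["loc", "loc", "loc", "loc"],
}
true_class = {"q1": "num", "q2": "num", "q3": "loc"}

print(sensitivity(predictions, classes))              # needs no ground-truth labels
print(consistency(predictions, true_class, classes))  # needs the class of each item
```

Note that `sensitivity` never looks at `true_class`, which mirrors the property that it can be computed without ground-truth labels, whereas `consistency` needs to know which items belong together.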

A model’s accuracy and its sensitivity (how easily it is confused by rephrasing) are not strictly correlated, so measuring both yields new insight into how and where a failure might happen. You cannot “optimize away” brittleness simply by chasing higher accuracy scores. A high-accuracy model might still fail catastrophically if the user phrases a request in a way the model didn’t expect. A system with low consistency is unsafe for production because its behaviour is unpredictable. Although these metrics currently apply only to classification tasks, the authors argue that low sensitivity and high consistency are actually more important than raw accuracy for building trustworthy systems.

In their study, the authors demonstrate how they enhanced prompt performance on a classification task by analyzing queries with low sensitivity. They identified a systematic error where date queries were failing to be classified as numbers. By refining the prompt to explicitly account for this edge case, they successfully improved overall model performance.

While calculating some of these metrics entails significant computational costs due to the volume of LLM calls required, they remain indispensable. Ensuring reliability in LLM-based systems is paramount, particularly for complex software integrations and high-stakes applications.

How It Can Be Mitigated

The main objective of reducing the impact of brittleness on LLM systems comprises two distinct sub-problems: minimising prompt sensitivity to brittleness and maximising overall task performance.

Mixture of Formats

Automatic Prompt Engineer

Moreover, these studies show that prompts are bound to the model they were generated for. They do not transfer well. If the underlying model changes (e.g., GPT-4 to Mistral), the optimised prompts from APE or the safe formats from Mixture of Formats might no longer be valid, requiring a complete re-optimisation of the system to maintain reliability. Yet, it is desirable to have models that are robust to semantically equivalent variations of the initial prompt.

Semantic vs Non-Semantic Brittleness

Human evaluators also exhibit “brittleness” when instructions are varied and some sensitivity is an inherent part of language understanding rather than a machine-specific bug. Li et al. (2025)

If humans, the “gold” standard, vary their answers based on how a question is phrased, then zero variance in LLMs might actually be unnatural or indicative of rigid overfitting rather than true understanding. So, is brittleness a shared feature? The key distinction seems to lie in what kind of changes are made to the prompt. While both humans and LLMs are sensitive to semantic changes, LLMs remain uniquely sensitive to non-semantic changes such as noise, typographical errors, and label ordering.

The Cost of Brittleness

The fundamental opacity of LLMs creates a paradox where semantically identical prompts yield vastly different results, effectively turning prompt engineering into a “black-box optimization” problem. While we can empirically identify which inputs succeed, we lack the theory to explain why highly specific phrasing is required. If we cannot explain the strict requirements of the input, we cannot fully account for the robustness in the output.

Context position can also be viewed as a hidden variable in every explanation. A model might demonstrate good results in a short context, yet fail to perform in a longer one. One of the most damaging facts drawn from this is that semantically equivalent inputs do not yield consistent outputs, which undermines the reliability of systems built on LLMs.

Furthermore, this sensitivity raises serious questions regarding the reproducibility and methodological validity of current evaluations. It suggests that some “improvements” reported in the literature may be artifacts of prompt engineering, essentially overfitting to a specific format, rather than genuine architectural gains. Also, the underlying mechanics can be exploited to discover malicious jailbreaking prompts through high-dimensional search processes.

To address this, reliability metrics may need to be re-weighted to distinguish between harmful instability, such as high sensitivity to typos, and natural ambiguity. Ultimately, as prompt engineering shifts toward automated, high-dimensional search, we must weigh the computational costs against the reality that we still do not understand why one prompt outperforms another.

LLM-as-a-Judge

The rapid proliferation of LLM-based systems has necessitated the development of alternatives to human evaluation. Traditional human evaluation is a slow and costly process, often unable to keep pace with the accelerated rate of model advancement. Consequently, the paradigm of “LLM-as-a-Judge” has emerged, where LLMs are employed as evaluators for complex tasks. This approach has gained popularity due to its ability to process diverse data types and provide scalable and flexible assessments.

Currently, static benchmarks such as MMLU

Although this facilitated scalable leaderboards, it steered the field away from objective measurement into a recursive loop of subjective preference. Their popularity also led to a situation where achieving high win rates on these benchmarks can significantly boost the promotional impact of newly released language models.

Taxonomy of LLM evaluation bias

Pezeshkpour and Hruschka (2024)

- Position Bias: It refers to the tendency of LLM evaluators to favor candidate responses located in specific positions within the prompt (e.g., first vs. second position).

- Compassion-fade Bias: This bias occurs when evaluators are influenced by explicit model names, favoring recognized models over the actual quality of the content.

- Style Bias: It describes the evaluator’s preference for specific writing styles, visual formatting, or emotional tones, regardless of the content’s validity.

- Length Bias: Also known as verbosity bias, this refers to the tendency to favor responses of a particular length, typically preferring more verbose answers.

- Concreteness Bias: This reflects a preference for responses containing specific details, such as citations, numbers, or complex terminology, even when those details may be factually incorrect.

In the next section, we present examples illustrating how these biases manifest in specific evaluation settings. Furthermore, we examine cases involving Position Bias and Self-Preference Bias.
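Position Bias in particular lends itself to a simple diagnostic: present each pair of responses to the judge in both orders and check whether the same response wins. The sketch below assumes a hypothetical `judge(question, first, second)` callable that returns either "first" or "second"; the two toy judges only illustrate the extremes and do not model any real evaluator.

```python
def position_consistency(pairs, judge):
    """Fraction of pairs for which the judge picks the same response in both orders."""
    consistent = 0
    for question, resp_1, resp_2 in pairs:
        verdict = judge(question, resp_1, resp_2)
        verdict_swapped = judge(question, resp_2, resp_1)
        winner = resp_1 if verdict == "first" else resp_2
        winner_swapped = resp_2 if verdict_swapped == "first" else resp_1
        consistent += winner == winner_swapped
    return consistent / len(pairs)

def always_first_judge(question, first, second):
    """Toy judge with maximal position bias: it always prefers the first slot."""
    return "first"

def longer_wins_judge(question, first, second):
    """Toy judge that ignores position (but is, by construction, length-biased)."""
    return "first" if len(first) >= len(second) else "second"

pairs = [
    ("Explain recursion.", "A function that calls itself.", "See recursion."),
    ("What is 2 + 2?", "4", "The answer is four."),
]
print(position_consistency(pairs, always_first_judge))  # 0.0
print(position_consistency(pairs, longer_wins_judge))   # 1.0
```

The second toy judge shows that passing this check does not imply an unbiased evaluator: a judge can be perfectly position-consistent while still favouring verbosity.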

Self-Preference Bias

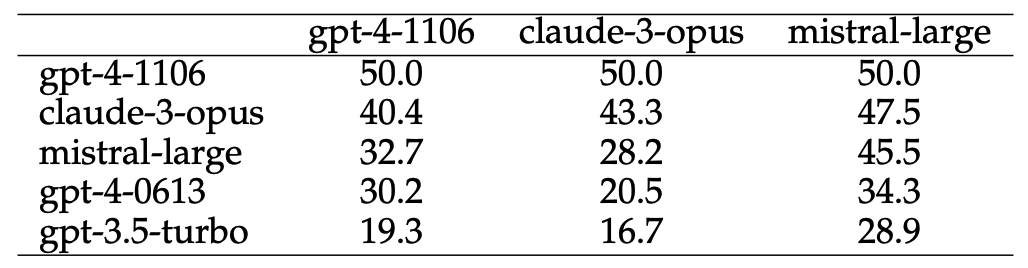

These biases are reflected in the evaluation of model responses. For example, in AlpacaEval 2.0

This phenomenon is explicable by the inverse correlation between the awarded score and the perplexity of the evaluated text. Wataoka et al. (2025)

Position Bias

Analysis of Position Bias within MT-Bench

Conclusion

The unpredictability of LLMs is not merely a configurable setting but a systemic limitation that pits semantic creativity against scientific reproducibility. While techniques like Nucleus Sampling enhance human-like quality, the belief that zero temperature guarantees stability is a fallacy debunked by the asynchronous nature of hardware optimizations and inference batching. Consequently, until we are willing to pay the performance cost for deterministic execution, we must accept that our AI systems are not logic machines, but “statistical engines” prone to chaotic fluctuations.

On the other hand, Prompt Brittleness exposes a fragile reality beneath the impressive capabilities of LLMs. It forces a paradigm shift in how we evaluate systems, ensuring we distinguish genuine architectural gains from mere overfitting to specific prompts. The paradox where semantically identical inputs yield inconsistent outputs confirms that reliability cannot be measured by accuracy alone. Consequently, we require robust metrics to quantify sensitivity at various levels, alongside frameworks and tools to actively mitigate these brittle effects.

While the LLM-as-a-Judge paradigm provides an effective evaluation mechanism, it is susceptible to various forms of bias. However, an analysis of proposed methodologies suggests that these biases can be successfully mitigated through rigorous implementation, provided that the strategies are carefully tailored to the specific requirements of the target application.

Because LLMs can be unreliable in ways that are difficult to predict or mitigate, we need to be especially aware of what they are capable of, and of the main problems that arise when designing new systems based on them. Achieving reliability requires building robust wrapper systems (such as automated prompt optimizers and evaluation benchmarks) to mitigate the model’s inherent problems. By adopting more rigorous statistical evaluations and maintaining a keen awareness of how brittleness affects our metrics, we can transition from deploying fragile models to engineering robust systems. While the underlying models may remain inherently probabilistic, disciplined engineering and targeted constraints can render these errors statistically negligible, even more so in closed-domain cases.

Acknowledgments

This blog was sponsored by Petróleo Brasileiro S.A. (PETROBRAS) as part of the project ‘Application of Large Language Models (LLMs) for online monitoring of industrial processes,’ developed in collaboration with the University of Campinas [01-P-34480/2024 - 62208].
